Faceted navigation best (and 5 of the worst) practices

Webmaster Level: Advanced

Faceted navigation, such as filtering by color or price range, can be helpful for your visitors, but it’s often not search-friendly since it creates many combinations of URLs with duplicative content. With duplicative URLs, search engines may not crawl new or updated unique content as quickly, and/or they may not index a page accurately because indexing signals are diluted between the duplicate versions. To reduce these issues and help faceted navigation sites become as search-friendly as possible, we’d like to:

- Provide background and potential issues with faceted navigation

- Highlight worst practices

- Share best practices

Selecting filters with faceted navigation can cause many URL combinations, such as

http://www.example.com/category.php?category=gummy-candies&price=5-10&price=over-10

Background

In an ideal state, unique content — whether an individual product/article or a category of products/articles — would have only one accessible URL. This URL would have a clear click path, or route to the content from within the site, accessible by clicking from the homepage or a category page.

Ideal for searchers and Google Search

- Clear path that reaches all individual product/article pages

- One representative URL for category page

http://www.example.com/category.php?category=gummy-candies

- One representative URL for individual product page

http://www.example.com/product.php?item=swedish-fish

Undesirable duplication caused with faceted navigation

- Numerous URLs for the same article/product

- Numerous category pages that provide little or no value to searchers and search engines

URL

example.com/category.php?category=gummy-candies&taste=sour&price=5-10

example.com/category.php?category=gummy-candies&taste=sour&price=over-10

Issues

- No added value to Google searchers given users rarely search for [sour gummy candy price five to ten dollars].

- No added value for search engine crawlers that discover same item (“fruit salad”) from parent category pages (either “gummy candies” or “sour gummy candies”).

- Negative value to site owner who may have indexing signals diluted between numerous versions of the same category.

- Negative value to site owner with respect to serving bandwidth and losing crawler capacity to duplicative content rather than new or updated pages.

- No value for search engines (should have 404 response code).

- Negative value to searchers.

Worst (search un-friendly) practices for faceted navigation

Worst practice #1: Non-standard URL encoding for parameters, like commas or brackets, instead of “key=value&” pairs.

Worst practices:

example.com/category?[category:gummy-candy][sort:price-low-to-high][sid:789]

- key=value pairs marked with : rather than =

- multiple parameters appended with [ ] rather than &

example.com/category?category,gummy-candy,,sort,lowtohigh,,sid,789

- key=value pairs marked with a , rather than =

- multiple parameters appended with ,, rather than &

Best practice:

example.com/category?category=gummy-candy&sort=low-to-high&sid=789

While humans may be able to decode odd URL parameters, such as “,,”, crawlers have difficulty interpreting URL parameters when they’re implemented in a non-standard fashion. Software engineer on Google’s Crawling Team, Mehmet Aktuna, says “Using non-standard encoding is just asking for trouble.” Instead, connect key=value pairs with an equal sign (=) and append multiple parameters with an ampersand (&).
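As a rough illustration of why standard encoding matters, the sketch below builds the "best practice" query string with Python's standard library and then parses both the standard and the comma-delimited form. The parameter values come from the examples above; the variable names are only illustrative.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit

# Facet selections from the candy-store example (illustrative values).
params = [("category", "gummy-candy"), ("sort", "low-to-high"), ("sid", "789")]

# urlencode joins each key and value with "=" and separates pairs with "&".
query = urlencode(params)
url = "http://example.com/category?" + query

# A standard parser recovers exactly the pairs that were encoded.
standard_pairs = parse_qsl(urlsplit(url).query)

# The comma-delimited variant contains no "=" or "&", so the same
# parser finds no key=value pairs at all.
odd_url = "http://example.com/category?category,gummy-candy,,sort,lowtohigh,,sid,789"
odd_pairs = parse_qsl(urlsplit(odd_url).query)

print(url)
print(standard_pairs)
print(odd_pairs)
```

Any generic tool that expects key=value pairs is in the same position as this parser: it has nothing to work with unless it is taught the site's private delimiter scheme.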

Worst practice #2: Using directories or file paths rather than parameters to list values that don’t change page content.

Worst practice:

example.com/c123/s789/product?swedish-fish

(where /c123/ is a category, /s789/ is a sessionID that doesn’t change page content)

Good practice:

example.com/gummy-candy/product?item=swedish-fish&sid=789

(the directory, /gummy-candy/, changes the page content in a meaningful way)

Best practice:

example.com/product?item=swedish-fish&category=gummy-candy&sid=789

(URL parameters allow more flexibility for search engines to determine how to crawl efficiently)

It’s difficult for automated programs, like search engine crawlers, to differentiate useful values (e.g., “gummy-candy”) from the useless ones (e.g., “sessionID”) when values are placed directly in the path. On the other hand, URL parameters provide flexibility for search engines to quickly test and determine when a given value doesn’t require the crawler to access all variations.

Common values that don’t change page content and should be listed as URL parameters include:

- Session IDs

- Tracking IDs

- Referrer IDs

- Timestamp

Worst practice #3: Converting user-generated values into (possibly infinite) URL parameters that are crawlable and indexable, but not useful in search results.

Worst practices (e.g., user-generated values like longitude/latitude or “days ago” as crawlable and indexable URLs):

example.com/find-a-doctor?radius=15&latitude=40.7565068&longitude=-73.9668408

example.com/article?category=health&days-ago=7

Best practices:

example.com/find-a-doctor?city=san-francisco&neighborhood=soma

example.com/articles?category=health&date=january-10-2014

Rather than allow user-generated values to create crawlable URLs (which leads to infinite possibilities with very little value to searchers), perhaps publish category pages for the most popular values, then include additional information so the page provides more value than an ordinary search results page. Alternatively, consider placing user-generated values in a separate directory and then using robots.txt to disallow crawling of that directory.

example.com/filtering/find-a-doctor?radius=15&latitude=40.7565068&longitude=-73.9668408

example.com/filtering/articles?category=health&days-ago=7

with robots.txt:

User-agent: *

Disallow: /filtering/

Worst practice #4: Appending URL parameters without logic.

Worst practices:

example.com/gummy-candy/lollipops/gummy-candy/gummy-candy/product?swedish-fish

example.com/product?cat=gummy-candy&cat=lollipops&cat=gummy-candy&cat=gummy-candy&item=swedish-fish

Better practice:

example.com/gummy-candy/product?item=swedish-fish

Best practice:

example.com/product?item=swedish-fish&category=gummy-candy

Extraneous URL parameters only increase duplication, causing less efficient crawling and indexing. Therefore, consider stripping unnecessary URL parameters and performing your site’s “internal housekeeping” before generating the URL. If many parameters are required for the user session, perhaps hide the information in a cookie rather than continually append values like

cat=gummy-candy&cat=lollipops&cat=gummy-candy&…

Worst practice #5: Offering further refinement (filtering) when there are zero results.

Worst practice:

Allowing users to select filters when zero items exist for the refinement.

Refinement to a page with zero results (e.g., price=over-10) is allowed even though it frustrates users and causes unnecessary issues for search engines.

Best practice:

Only create links/URLs when it’s a valid user-selection (items exist). With zero items, grey out filtering options. To further improve usability, consider adding item counts next to each filter.

Prevent useless URLs and minimize the crawl space by only creating URLs when products exist. This helps users to stay engaged on your site (fewer clicks on the back button when no products exist), and helps minimize potential URLs known to crawlers. Furthermore, if a page isn’t just temporarily out-of-stock, but is unlikely to ever contain useful content, consider returning a 404 status code. With the 404 response, you can include a helpful message to users with more navigation options or a search box to find related products.
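One way to picture the "only create URLs when products exist" advice is a small helper that renders filter options with item counts and withholds the link when a filter would return nothing. This is a minimal sketch; the function name, the `counts` mapping, and the example prices are hypothetical and not taken from any particular platform.

```python
from urllib.parse import urlencode

def filter_links(base_url, category, facet, counts):
    """Return (label, href) pairs; href is None when a filter matches no items."""
    links = []
    for value, count in counts.items():
        label = f"{value} ({count})"  # item count shown next to each filter
        if count > 0:
            href = base_url + "?" + urlencode({"category": category, facet: value})
        else:
            href = None  # render as greyed-out text, so no URL exists to crawl
        links.append((label, href))
    return links

# Hypothetical counts for the price facet of the gummy-candies category.
links = filter_links(
    "http://example.com/category",
    "gummy-candies",
    "price",
    {"under-5": 12, "5-10": 7, "over-10": 0},
)
for label, href in links:
    print(label, href)
```

The template layer can then treat a `None` href as a disabled option, which keeps empty result pages out of both the user's click path and the crawl space.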

Best practices for new faceted navigation implementations or redesigns

New sites that are considering implementing faceted navigation have several options to optimize the “crawl space” (the totality of URLs on your site known to Googlebot) for unique content pages, reduce crawling of duplicative pages, and consolidate indexing signals.

- Determine which URL parameters are required for search engines to crawl every individual content page (i.e., determine what parameters are required to create at least one click-path to each item). Required parameters may include item-id, category-id, page, etc.

- Determine which parameters would be valuable to searchers and their queries, and which would likely only cause duplication with unnecessary crawling or indexing. In the candy store example, I may find the URL parameter “taste” to be valuable to searchers for queries like [sour gummy candies] which could show the result example.com/category.php?category=gummy-candies&taste=sour. However, I may consider the parameter “price” to only cause duplication, such as category=gummy-candies&taste=sour&price=over-10. Other common examples:

- Valuable parameters to searchers: item-id, category-id, name, brand…

- Unnecessary parameters: session-id, price-range…

- Consider implementing one of several configuration options for URLs that contain unnecessary parameters. Just make sure that the unnecessary URL parameters are never required in a crawler or user’s click path to reach each individual product!

- Option 1: rel="nofollow" internal links

Mark all links to unnecessary URLs as rel="nofollow". This option minimizes the crawler’s discovery of unnecessary URLs and therefore reduces the potentially explosive crawl space (URLs known to the crawler) that can occur with faceted navigation. rel="nofollow" doesn’t prevent the unnecessary URLs from being crawled (only a robots.txt disallow prevents crawling). By allowing them to be crawled, however, you can consolidate indexing signals from the unnecessary URLs with a searcher-valuable URL by adding rel="canonical" from the unnecessary URL to a superset URL (e.g., example.com/category.php?category=gummy-candies&taste=sour&price=5-10 can specify a rel="canonical" to the superset sour gummy candies view-all page at example.com/category.php?category=gummy-candies&taste=sour&page=all).

- Option 2: Robots.txt disallow

For URLs with unnecessary parameters, include a /filtering/ directory that will be disallowed in robots.txt. This lets all search engines freely crawl good content, but will prevent crawling of the unwanted URLs. For instance, if my valuable parameters were item, category, and taste, and my unnecessary parameters were session-id and price, I may have the URL:

example.com/category.php?category=gummy-candies

which could link to another URL with a valuable parameter such as taste:

example.com/category.php?category=gummy-candies&taste=sour

but for the unnecessary parameters, such as price, the URL includes a predefined directory, /filtering/:

example.com/filtering/category.php?category=gummy-candies&price=5-10

which is then disallowed in robots.txt:

User-agent: *

Disallow: /filtering/

- Option 3: Separate hosts

If you’re not using a CDN (sites using CDNs don’t have this flexibility easily available in Webmaster Tools), consider placing any URLs with unnecessary parameters on a separate host, for example, creating a main host, www.example.com, and a secondary host, www2.example.com. On the secondary host (www2), set the Crawl rate in Webmaster Tools to “low” while keeping the main host’s crawl rate as high as possible. This would allow for fuller crawling of the main host URLs and reduce Googlebot’s focus on your unnecessary URLs.

- Be sure there remains at least one click path to all items on the main host.

- If you’d like to consolidate indexing signals, consider adding rel="canonical" from the secondary host to a superset URL on the main host (e.g., www2.example.com/category.php?category=gummy-candies&taste=sour&price=5-10 may specify a rel="canonical" to the superset “sour gummy candies” view-all page, www.example.com/category.php?category=gummy-candies&taste=sour&page=all).

- Prevent clickable links when no products exist for the category/filter.

- Add logic to the display of URL parameters.

- Remove unnecessary parameters rather than continuously append values.

- Avoid example.com/product?cat=gummy-candy&cat=lollipops&cat=gummy-candy&item=swedish-fish

- Help the searcher experience by keeping a consistent parameter order based on searcher-valuable parameters listed first (as the URL may be visible in search results) and searcher-irrelevant parameters last (e.g., session ID).

- Avoid example.com/category.php?session-id=123&tracking-id=456&category=gummy-candies&taste=sour

- Improve indexing of individual content pages with rel="canonical" to the preferred version of a page. rel="canonical" can be used across hostnames or domains.

- Improve indexing of paginated content (such as page=1 and page=2 of the category “gummy candies”) by either:

- Adding rel="canonical" from individual component pages in the series to the category’s “view-all” page (e.g., page=1, page=2, and page=3 of “gummy candies” with rel="canonical" to category=gummy-candies&page=all) while making sure that it’s still a good searcher experience (e.g., the page loads quickly).

- Using pagination markup with rel="next" and rel="prev" to consolidate indexing properties, such as links, from the component pages/URLs to the series as a whole.

- Be sure that if using JavaScript to dynamically sort/filter/hide content without updating the URL, there still exist URLs on your site that searchers would find valuable, such as main category and product pages that can be crawled and indexed. For instance, avoid using only the homepage (i.e., one URL) for your entire site with JavaScript to dynamically change content with user navigation, since this would unfortunately provide searchers with only one URL to reach all of your content. Also, check that performance isn’t negatively affected with dynamic filtering, as this could undermine the user experience.

- Include only canonical URLs in Sitemaps.
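The "add logic to the display of URL parameters" items in the list above could be sketched roughly as follows: collapse repeated parameters and emit them in one consistent order, searcher-valuable parameters first. The ordering list and the keep-the-last-value policy are assumptions made for illustration; a real site would choose its own.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed ordering for illustration: searcher-valuable parameters first,
# session and tracking parameters last.
PREFERRED_ORDER = ["item", "category", "cat", "taste", "page", "session-id", "tracking-id"]

def normalize_url(url):
    """Drop repeated parameters and put the rest in a consistent order."""
    parts = urlsplit(url)
    latest = {}
    for key, value in parse_qsl(parts.query):
        latest[key] = value  # assumed policy: a repeated key keeps its last value

    def rank(key):
        # Known keys sort by their position in PREFERRED_ORDER; unknown keys
        # go last, alphabetically.
        position = PREFERRED_ORDER.index(key) if key in PREFERRED_ORDER else len(PREFERRED_ORDER)
        return (position, key)

    ordered = sorted(latest.items(), key=lambda pair: rank(pair[0]))
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(ordered), parts.fragment))

print(normalize_url(
    "http://example.com/product?cat=gummy-candy&cat=lollipops&cat=gummy-candy&item=swedish-fish"
))
print(normalize_url(
    "http://example.com/category.php?session-id=123&tracking-id=456&category=gummy-candies&taste=sour"
))
```

Running every generated link through one function like this before it is written into a page is one way to do the housekeeping in a single place.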

Best practices for existing sites with faceted navigation

First, know that the best practices listed above (e.g., rel="nofollow" for unnecessary URLs) still apply if/when you’re able to implement a larger redesign. Otherwise, with existing faceted navigation, it’s likely that a large crawl space was already discovered by search engines. Therefore, focus on reducing further growth of unnecessary pages crawled by Googlebot and consolidating indexing signals.

- Use parameters (when possible) with standard encoding and key=value pairs.

- Verify that values that don’t change page content, such as session IDs, are implemented as standard key=value pairs, not directories

- Prevent clickable anchors when no products exist for the category/filter (i.e., don’t allow clicks or URLs to be created when no items exist for the filter)

- Add logic to the display of URL parameters

- Remove unnecessary parameters rather than continuously append values (e.g., avoid example.com/product?cat=gummy-candy&cat=lollipops&cat=gummy-candy&item=swedish-fish)

- Help the searcher experience by keeping a consistent parameter order based on searcher-valuable parameters listed first (as the URL may be visible in search results) and searcher-irrelevant parameters last (e.g., avoid example.com/category?session-id=123&tracking-id=456&category=gummy-candies&taste=sour in favor of example.com/category.php?category=gummy-candies&taste=sour&session-id=123&tracking-id=456)

- Configure Webmaster Tools URL Parameters if you have a strong understanding of the URL parameter behavior on your site (make sure that there is still a clear click path to each individual item/article). For instance, with URL Parameters in Webmaster Tools, you can list the parameter name, the parameter’s effect on the page content, and how you’d like Googlebot to crawl URLs containing the parameter.

- Be sure that if using JavaScript to dynamically sort/filter/hide content without updating the URL, there still exist URLs on your site that searchers would find valuable, such as main category and product pages that can be crawled and indexed. For instance, avoid using only the homepage (i.e., one URL) for your entire site with JavaScript to dynamically change content with user navigation, since this would unfortunately provide searchers with only one URL to reach all of your content. Also, check that performance isn’t negatively affected with dynamic filtering, as this could undermine the user experience.

- Improve indexing of individual content pages with rel="canonical" to the preferred version of a page. rel="canonical" can be used across hostnames or domains.

- Improve indexing of paginated content (such as page=1 and page=2 of the category “gummy candies”) by either:

- Adding rel="canonical" from individual component pages in the series to the category’s “view-all” page (e.g., page=1, page=2, and page=3 of “gummy candies” with rel="canonical" to category=gummy-candies&page=all) while making sure that it’s still a good searcher experience (e.g., the page loads quickly).

- Using pagination markup with rel="next" and rel="prev" to consolidate indexing properties, such as links, from the component pages/URLs to the series as a whole.

- Include only canonical URLs in Sitemaps.
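For the rel="canonical" consolidation described in the lists above, here is a hedged sketch of how a filtered URL might be mapped to its superset "view-all" URL. It assumes a view-all page exists at page=all and that price, session-id, and tracking-id are the unnecessary parameters on this hypothetical site; the function name is illustrative.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: these parameters only create duplicates on this hypothetical site.
UNNECESSARY = {"price", "session-id", "tracking-id"}

def canonical_link(url):
    """Build a <link rel="canonical"> tag pointing at the superset view-all URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query)
        if key not in UNNECESSARY and key != "page"
    ]
    kept.append(("page", "all"))  # assumes a view-all page is available
    superset = urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))
    # escape() turns "&" into "&amp;" so the URL is safe inside an HTML attribute.
    return f'<link rel="canonical" href="{escape(superset)}">'

tag = canonical_link(
    "http://www.example.com/category.php?category=gummy-candies&taste=sour&price=5-10"
)
print(tag)
```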

Remember that commonly, the simpler you can keep it, the better. Questions? Please ask in our Webmaster discussion forum.

Written by Maile Ohye, Developer Programs Tech Lead, and Mehmet Aktuna, Crawl Team

Affiliate programs and added value

Our quality guidelines warn against running a site with thin or scraped content without adding substantial added value to the user. Recently, we’ve seen this behavior on many video sites, particularly in the adult industry, but also elsewhere. These sites display content provided by an affiliate program—the same content that is available across hundreds or even thousands of other sites.

If your site syndicates content that’s available elsewhere, a good question to ask is: “Does this site provide significant added benefits that would make a user want to visit this site in search results instead of the original source of the content?” If the answer is “No,” the site may frustrate searchers and violate our quality guidelines. As with any violation of our quality guidelines, we may take action, including removal from our index, in order to maintain the quality of our users’ search results. If you have any questions about our guidelines, you can ask them in our Webmaster Help Forum.

Posted by Chris Nelson, Search Quality Team

A new Googlebot user-agent for crawling smartphone content

Webmaster level: Advanced

Over the years, Google has used different crawlers to crawl and index content for feature phones and smartphones. These mobile-specific crawlers have all been referred to as Googlebot-Mobile. However, feature phones and smartphones have considerably different device capabilities, and we’ve seen cases where a webmaster inadvertently blocked smartphone crawling or indexing when they really meant to block just feature phone crawling or indexing. This ambiguity made it impossible for Google to index smartphone content of some sites, or for Google to recognize that these sites are smartphone-optimized.

A new Googlebot for smartphones

To clarify the situation and to give webmasters greater control, we’ll be retiring “Googlebot-Mobile” for smartphones as a user agent starting in 3-4 weeks’ time. From then on, the user-agent for smartphones will identify itself simply as “Googlebot” but will still list “mobile” elsewhere in the user-agent string. Here are the new and old user-agents:

The new Googlebot for smartphones user-agent:

Mozilla/5.0 (iPhone; CPU iPhone OS 6_0 like Mac OS X) AppleWebKit/536.26 (KHTML, like Gecko) Version/6.0 Mobile/10A5376e Safari/8536.25 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)

The Googlebot-Mobile for smartphones user-agent we will be retiring soon:

Mozilla/5.0 (iPhone; CPU iPhone OS 6_0 like Mac OS X) AppleWebKit/536.26 (KHTML, like Gecko) Version/6.0 Mobile/10A5376e Safari/8536.25 (compatible; Googlebot-Mobile/2.1; +http://www.google.com/bot.html)

This change affects only Googlebot-Mobile for smartphones. The user-agent of the regular Googlebot does not change, and the remaining two Googlebot-Mobile crawlers will continue to refer to feature phone devices in their user-agent strings; for reference, these are:

Regular Googlebot user-agent:

Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)

The two Googlebot-Mobile user-agents for feature phones:

SAMSUNG-SGH-E250/1.0 Profile/MIDP-2.0 Configuration/CLDC-1.1 UP.Browser/6.2.3.3.c.1.101 (GUI) MMP/2.0 (compatible; Googlebot-Mobile/2.1; +http://www.google.com/bot.html)

DoCoMo/2.0 N905i(c100;TB;W24H16) (compatible; Googlebot-Mobile/2.1; +http://www.google.com/bot.html)
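To make the distinction between these strings concrete, the following sketch classifies the user-agents listed above by simple substring checks. The function name and the returned labels are illustrative assumptions, and because user-agent strings can be spoofed, a check like this is not a way to verify that a request really comes from Google.

```python
NEW_SMARTPHONE_UA = (
    "Mozilla/5.0 (iPhone; CPU iPhone OS 6_0 like Mac OS X) AppleWebKit/536.26 "
    "(KHTML, like Gecko) Version/6.0 Mobile/10A5376e Safari/8536.25 "
    "(compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
)
RETIRING_SMARTPHONE_UA = NEW_SMARTPHONE_UA.replace("Googlebot/2.1", "Googlebot-Mobile/2.1")
REGULAR_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
FEATURE_PHONE_UA = (
    "DoCoMo/2.0 N905i(c100;TB;W24H16) "
    "(compatible; Googlebot-Mobile/2.1; +http://www.google.com/bot.html)"
)

def classify_googlebot(user_agent):
    """Label a user-agent string using the naming scheme described above."""
    ua = user_agent.lower()
    if "googlebot-mobile" in ua:
        # The retiring smartphone string still says Googlebot-Mobile but
        # carries the iPhone token; the feature phone strings do not.
        return "smartphone (retiring)" if "iphone" in ua else "feature phone"
    if "googlebot" in ua:
        # The new smartphone crawler is plain "Googlebot" plus "Mobile" elsewhere.
        return "smartphone" if "mobile" in ua else "regular"
    return "not googlebot"

for ua in (NEW_SMARTPHONE_UA, RETIRING_SMARTPHONE_UA, REGULAR_UA, FEATURE_PHONE_UA):
    print(classify_googlebot(ua))
```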

You can test your site using the Fetch as Google feature in Webmaster Tools, and you can see a full list of our existing crawlers in the Help Center.

Crawling and indexing

Please note this important implication of the user-agent update: The new Googlebot for smartphones crawler will follow robots.txt, robots meta tag, and HTTP header directives for Googlebot instead of Googlebot-Mobile. For example, when the new crawler is deployed, this robots.txt directive will block all crawling by the new Googlebot for smartphones user-agent, and also the regular Googlebot:

User-agent: Googlebot

Disallow: /

This robots.txt directive will block crawling by Google’s feature phone crawlers:

User-agent: Googlebot-Mobile

Disallow: /
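The effect of the two directives above can be approximated with Python's standard `urllib.robotparser`. This is only a sketch: Python's parser matches user-agent tokens by simple substring comparison, which is not necessarily how Googlebot resolves groups, so the checks below are limited to cases where the two agree. The `allowed` helper and the example URL are illustrative.

```python
from urllib.robotparser import RobotFileParser

def allowed(robots_txt, agent, url="http://www.example.com/page.html"):
    """Return True if the given robots.txt text lets `agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)

BLOCK_GOOGLEBOT = "User-agent: Googlebot\nDisallow: /\n"
BLOCK_FEATURE_PHONE = "User-agent: Googlebot-Mobile\nDisallow: /\n"

# The first directive blocks the Googlebot token, which after the change
# covers both the regular crawler and the new smartphone crawler.
print(allowed(BLOCK_GOOGLEBOT, "Googlebot"))

# The second directive blocks only the feature phone crawlers; the
# Googlebot token is unaffected.
print(allowed(BLOCK_FEATURE_PHONE, "Googlebot-Mobile"))
print(allowed(BLOCK_FEATURE_PHONE, "Googlebot"))
```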

Based on our internal analyses, this update affects less than 0.001% of URLs while giving webmasters greater control over the crawling and indexing of their content. As always, if you have any questions, you can:

- Read our recommendations for building smartphone-optimized sites

- Learn more about controlling Googlebot crawling and indexing

- Ask in our Webmaster help forums or visit one of our Webmaster Central office hours hangouts.

Posted by Zhijian He, Smartphone search engineer

Changes in crawl error reporting for redirects

Webmaster level: intermediate-advanced

In the past, we have seen occasional confusion by webmasters regarding how crawl errors on redirecting pages were shown in Webmaster Tools. It’s time to make this a bit clearer and easier to diagnose! While it used…

Google Publisher Plugin beta: Bringing our publisher products to WordPress

We’ve heard from many publishers using WordPress that they’re looking for an easier way to work with Google products within the platform. Today, we’re excited to share the beta release of our official Google Publisher Plugin, which adds new functionality to publishers’ WordPress websites. If you own your own domain and power it with WordPress, this new plugin will give you access to a few Google services — and all within WordPress.

Please keep in mind that because this is a beta release, we’re still fine-tuning the plugin to make sure it works well on the many WordPress sites out there. We’d love for you to try it now and share your feedback on how it works for your site.

This first version of the Google Publisher Plugin currently supports two Google products:

- Google AdSense: Earn money by placing ads on your website. The plugin links your WordPress site to your AdSense account and makes it easier to place ads on your site — without needing to manually modify any HTML code.

- Google Webmaster Tools: Webmaster Tools provides you with detailed reports about your pages’ visibility on Google. The plugin allows you to verify your site on Webmaster Tools with just one click.

Visit the WordPress.org plugin directory to download the new plugin and give it a try. For more information about the plugin and how to use it, please visit our Help Center. We look forward to hearing your feedback!

Posted by Michael Smith – Product Manager

More detailed search queries in Webmaster Tools

Webmaster level: intermediate

To help jump-start your year and make metrics for your site more actionable, we’ve updated one of the most popular features in Webmaster Tools: data in the search queries feature will no longer be rounded / bucketed. This c…

Improved Search Queries stats for separate mobile sites

Webmaster Level: All

Search Queries in Webmaster Tools just became more cohesive for those who manage a mobile site on a separate URL from desktop, such as mobile on m.example.com and desktop on www. In Search Queries, when you view your m. site* and set Filters to “Mobile,” from Dec 31, 2013 onwards, you’ll now see:

- Queries where your m. pages appeared in search results for mobile browsers

- Queries where Google applied Skip Redirect. This means that, while search results displayed the desktop URL, the user was automatically directed to the corresponding m. version of the URL (thus saving the user from latency of a server-side redirect).

Prior to this Search Queries improvement, Webmaster Tools reported Skip Redirect impressions with the desktop URL. Now we’ve consolidated information when Skip Redirect is triggered, so that impressions, clicks, and CTR are calculated solely with the verified m. site, making your mobile statistics more understandable.

Best practices if you have a separate m. site

Here are a few search-friendly recommendations for those publishing content on a separate m. site:

- Follow our advice on Building Smartphone-Optimized Websites

- On the desktop page, add a special link rel="alternate" tag pointing to the corresponding mobile URL. This helps Googlebot discover the location of your site’s mobile pages.

- On the mobile page, add a link rel="canonical" tag pointing to the corresponding desktop URL.

- Use the HTTP Vary: User-Agent header if your servers automatically redirect users based on their user agent/device.

- Verify ownership of both the desktop (www) and mobile (m.) sites in Webmaster Tools for improved communication and troubleshooting information specific to each site.

* Be sure you’ve verified ownership for your mobile site!
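The two link annotations recommended in the list above could be generated along these lines. This is a minimal sketch: the helper names, the page-1 URLs, and the `media` value (a commonly used breakpoint query) are assumptions for illustration, not values given in this post.

```python
from html import escape

def desktop_head_tag(mobile_url, media="only screen and (max-width: 640px)"):
    """Tag for the desktop page: points to the corresponding m. URL."""
    # The media value is an assumed example; use whatever matches your mobile layout.
    return f'<link rel="alternate" media="{escape(media)}" href="{escape(mobile_url)}">'

def mobile_head_tag(desktop_url):
    """Tag for the mobile page: points back to the corresponding desktop URL."""
    return f'<link rel="canonical" href="{escape(desktop_url)}">'

print(desktop_head_tag("http://m.example.com/page-1"))
print(mobile_head_tag("http://www.example.com/page-1"))
```

The important property is that the annotations are bidirectional: each desktop page names its mobile counterpart, and each mobile page names its desktop counterpart.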

Written by Maile Ohye, Developer Programs Tech Lead

So long, 2013, and thanks for all the fish

Now that 2013 is almost over, we’d love to take a quick look back, and venture a glimpse into the future. Some of the important topics on our blog from 2013 were around mobile, internationalization, and search quality in general. Here are some of the m…

Switching to the new website verification API

Webmaster level: advanced

Just over a year ago we introduced a new API for website verification for Google services. In the spirit of keeping things simple and focusing our efforts, we’ve decided to deprecate the old verification API method on March 31…

Improving URL removals on third-party sites

Webmaster level: all

Content on the Internet changes or disappears, and occasionally it’s helpful to have search results for it updated quickly. Today we launched our improved public URL removal tool to make it easier to request updates based on changes…

Structured Data dashboard: new markup error reports for easier debugging

Since we launched the Structured Data dashboard last year, it has quickly become one of the most popular features in Webmaster Tools. We’ve been working to expand it and make it even easier to debug issues so that you can see how Google understands the marked-up content on your site.

Starting today, you can see items with errors in the Structured Data dashboard. This new feature is a result of a collaboration with webmasters, whom we invited in June to register as early testers of markup error reporting in Webmaster Tools. We’ve incorporated their feedback to improve the functionality of the Structured Data dashboard.

An “item” here represents one top-level structured data element (nested items are not counted) tagged in the HTML code. They are grouped by data type and ordered by number of errors:

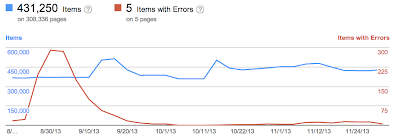

We’ve added a separate scale for the errors on the right side of the graph in the dashboard, so you can compare items and errors over time. This can be useful to spot connections between changes you may have made on your site and markup errors that are appearing (or disappearing!).

Our data pipelines have also been updated for more comprehensive reporting, so you may initially see fewer data points in the chronological graph.

How to debug markup implementation errors

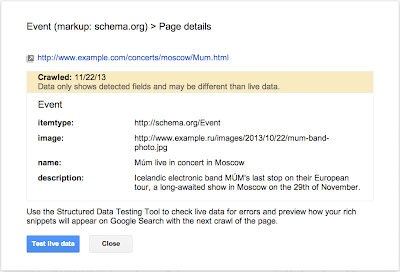

- To investigate an issue with a specific content type, click on it and we’ll show you the markup errors we’ve found for that type. You can see all of them at once, or filter by error type using the tabs at the top:

- Check to see if the markup meets the implementation guidelines for each content type. In our example case (events markup), some of the items are missing a startDate or name property. We also surface missing properties for nested content types (e.g. a review item inside a product item); in this case, this is the lowprice property.

- Click on URLs in the table to see details about what markup we’ve detected when we crawled the page last and what’s missing. You can also use the “Test live data” button to test your markup in the Structured Data Testing Tool. Often when checking a bunch of URLs, you’re likely to spot a common issue that you can solve with a single change (e.g. by adjusting a setting or template in your content management system).

- Fix the issues and test the new implementation in the Structured Data Testing Tool. After the pages are recrawled and reprocessed, the changes will be reflected in the Structured Data dashboard.

We hope this new feature helps you manage the structured data markup on your site better. We will continue to add more error types in the coming months. Meanwhile, we look forward to your comments and questions here or in the dedicated Structured Data section of the Webmaster Help forum.

Posted by Mariya Moeva, Webmaster Trends Analyst

Checklist and videos for mobile website improvement

Webmaster Level: Intermediate to Advanced

Unsure where to begin improving your smartphone website? Wondering how to prioritize all the advice? We just published a checklist to help provide an efficient approach to mobile website improvement. Several topics in the checklist link to a relevant business case or study; other topics include a video explaining how to make data from Google Analytics and Webmaster Tools actionable during the improvement process. Copied below are shortened sections of the full checklist. Please let us know if there’s more you’d like to see, or if you have additional topics for us to include.

Step 1: Stop frustrating your customers

- Remove cumbersome extra windows from all mobile user-agents | Google recommendation, Article

- JavaScript pop-ups that can be difficult to close.

- Overlays, especially to download apps (instead consider a banner such as iOS 6+ Smart App Banners or equivalent, side navigation, email marketing, etc.).

- Survey requests prior to task completion.

- Provide device-appropriate functionality

- Remove features that require plugins or videos not available on a user’s device (e.g., Adobe Flash isn’t playable on an iPhone or on Android versions 4.1 and higher). | Business case

- Serve tablet users the desktop version (or if available, the tablet version). | Study

- Check that full desktop experience is accessible on mobile phones, and if selected, remains in full desktop version for duration of the session (i.e., user isn’t required to select “desktop version” after every page load). | Study

- Correct high traffic, poor user-experience mobile pages

How to correct high-traffic, poor user-experience mobile pages with data from Google Analytics bounce rate and events (slides)

- Make quick fixes in performance (and continue if behind competition) | Business case

To see all topics in “Stop frustrating your customers,” please see the full Checklist for mobile website improvement.

Step 2: Facilitate task completion

- Optimize crawling, indexing, and the searcher experience | Business case

- Unblock resources (CSS, JavaScript) that are robots.txt disallowed.

- Implement search-engine best practices given your mobile implementation:

- Responsive design: Be sure to include a CSS @media query.

- Separate mobile site: Add rel=alternate media and rel=canonical, as well as the Vary: User-Agent HTTP header, which helps Google implement Skip Redirect.

- Dynamic serving: Include the Vary: User-Agent HTTP header.

- Optimize popular mobile persona workflows for your site

How to optimize popular mobile workflows using Google Webmaster Tools and Google Analytics (slides)
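For the separate mobile site case in the list above, the annotations can be sketched as follows. This is a minimal illustration rather than a definitive implementation: the helper names, the `example.com` URLs, and the 640px breakpoint are assumptions made for the example and should be adapted to your own site.

```python
from html import escape

# Hypothetical breakpoint; use the media query that matches your own mobile layout.
MOBILE_MEDIA_QUERY = "only screen and (max-width: 640px)"


def desktop_page_annotation(mobile_url: str) -> str:
    """Link element for the desktop page, pointing at its mobile equivalent."""
    return (
        f'<link rel="alternate" media="{escape(MOBILE_MEDIA_QUERY)}" '
        f'href="{escape(mobile_url)}">'
    )


def mobile_page_annotation(desktop_url: str) -> str:
    """Link element for the mobile page, pointing back at the desktop page."""
    return f'<link rel="canonical" href="{escape(desktop_url)}">'


def user_agent_dependent_headers() -> dict:
    """Response header for URLs whose response changes with the user agent."""
    return {"Vary": "User-Agent"}


if __name__ == "__main__":
    print(desktop_page_annotation("http://m.example.com/page-1"))
    print(mobile_page_annotation("http://www.example.com/page-1"))
    print(user_agent_dependent_headers())
```

The two link elements form a pair: each desktop URL points at its own mobile equivalent, and that mobile URL points back at the same desktop URL.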

Step 3: Convert customers into fans!

- Consider search integration points with mobile apps | Announcement, Information

- Brainstorm new ways to provide value

- Build for mobile behavior, such as the in-store shopper. | Business case

- Leverage smartphone GPS, camera, accelerometer.

- Increase sharing or social behavior. | Business case

- Consider intuitive/fun tactile functionality with swiping, shaking, tapping.

Written by Maile Ohye, Developer Programs Tech Lead

Smartphone crawl errors in Webmaster Tools

Webmaster level: all

Some smartphone-optimized websites are misconfigured in that they don’t show searchers the information they were seeking. For example, smartphone users are shown an error page or get redirected to an irrelevant page, but desktop users are shown the content they wanted. Some of these problems, detected by Googlebot as crawl errors, significantly hurt your website’s user experience and are the basis of some of our recently-announced ranking changes for smartphone search results.

Starting today, you can use the expanded Crawl Errors feature in Webmaster Tools to help identify pages on your sites that show these types of problems. We’re introducing a new Smartphone errors tab where we share pages we’ve identified with errors only found with Googlebot for smartphones.

Some of the errors we share include:

- Server errors: A server error is when Googlebot got an HTTP error status code when it crawled the page.

- Not found errors and soft 404s: A page can show a “not found” message to Googlebot, either by returning an HTTP 404 status code or when the page is detected as a soft error page.

- Faulty redirects: A faulty redirect is a smartphone-specific error that occurs when a desktop page redirects smartphone users to a page that is not relevant to their query. A typical example is when all pages on the desktop site redirect smartphone users to the homepage of the smartphone-optimized site.

- Blocked URLs: A blocked URL is when the site’s robots.txt explicitly disallows crawling by Googlebot for smartphones. Typically, such smartphone-specific robots.txt disallow directives are erroneous. You should investigate your server configuration if you see blocked URLs reported in Webmaster Tools.
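To make the faulty redirect case concrete, here is a rough sketch of a redirect that keeps smartphone users on the equivalent page, plus a simple check for the "everything goes to the homepage" pattern. The host names and function names are hypothetical, and the sketch assumes every desktop URL has a mobile equivalent at the same path, which may not hold for your site.

```python
from urllib.parse import urlsplit, urlunsplit

# Hypothetical host names for the desktop and smartphone-optimized sites.
DESKTOP_HOST = "www.example.com"
MOBILE_HOST = "m.example.com"


def smartphone_redirect_target(desktop_url: str) -> str:
    """Return the equivalent mobile URL, keeping the path and query string."""
    parts = urlsplit(desktop_url)
    if parts.netloc != DESKTOP_HOST:
        return desktop_url  # Not a desktop-site URL; leave it unchanged.
    return urlunsplit(
        (parts.scheme, MOBILE_HOST, parts.path, parts.query, parts.fragment)
    )


def is_faulty_redirect(desktop_url: str, redirect_url: str) -> bool:
    """Flag a redirect that sends a deep desktop page to a bare homepage."""
    source = urlsplit(desktop_url)
    target = urlsplit(redirect_url)
    source_is_deep = source.path not in ("", "/") or bool(source.query)
    target_is_homepage = target.path in ("", "/") and not target.query
    return source_is_deep and target_is_homepage


if __name__ == "__main__":
    url = "http://www.example.com/product.php?item=swedish-fish"
    print(smartphone_redirect_target(url))
    print(is_faulty_redirect(url, "http://m.example.com/"))
```

The check only covers the homepage pattern described above; a redirect to some other irrelevant page would need a site-specific comparison.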

Fixing any issues shown in Webmaster Tools can make your site better for users and help our algorithms better index your content. You can learn more about how to build smartphone websites and how to fix common errors. As always, please ask in our forums if you have any questions.

Posted by Pierre Far, Webmaster Trends Analyst

Video: Creating a SEO strategy (with Webmaster Tools!)

Webmaster Level: Intermediate

Wondering how to begin creating an organic search strategy at your company? What’s a good way to integrate your company’s various online components, such as the website, blog, or YouTube channel? Perhaps we can help! In under fifteen minutes, I outline a strategic approach to SEO for a mock company, Webmaster Central, where I pretend to be the SEO managing the Webmaster Central Blog.

The video covers these high-level topics (and you can skip to the exact portion of the video that might be of interest):

Creating a SEO strategy

- Using Webmaster Central as mock company

- Building an SEO strategy

- Understand searcher persona workflow

- Determine company and website goals

- Audit your site to best reach your audience

- Execute and make improvements

Feel free to reference the slides as well.

Written by Maile Ohye, Developer Programs Tech Lead

Indexing apps just like websites

Searchers on smartphones experience many speed bumps that can slow them down. For example, any time they need to change context from a web page to an app, or vice versa, users are likely to encounter redirects, pop-up dialogs, and extra swipes and taps. Wouldn’t it be cool if you could give your users the choice of viewing your content either on the website or via your app, both straight from Google’s search results?

Today, we’re happy to announce a new capability of Google Search, called app indexing, that uses the expertise of webmasters to help create a seamless user experience across websites and mobile apps.

Just like it crawls and indexes websites, Googlebot can now index content in your Android app. As a webmaster, you’ll be able to indicate which app content you’d like Google to index in the same way you do for webpages today: through your existing Sitemap file and through Webmaster Tools. If both the webpage and the app contents are successfully indexed, Google will then try to show deep links to your app straight in our search results when we think they’re relevant for the user’s query and if the user has the app installed. When users tap on these deep links, your app will launch and take them directly to the content they need. Here’s an example of a search for home listings in Mountain View:

We’re currently testing app indexing with an initial group of developers. Deep links for these applications will start to appear in Google search results for signed-in users on Android in the US in a few weeks. If you are interested in enabling indexing for your Android app, it’s easy to get started:

- Let us know that you’re interested. We’re working hard to bring this functionality to more websites and apps in the near future.

- Enable deep linking within your app.

- Provide information about alternate app URIs, either in the Sitemaps file or in a link element in pages of your site.
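As an illustration of the last step, the link element for a page could be generated along these lines. The post itself does not spell out the URI format; the sketch assumes the `android-app://{package}/{scheme}/{host_path}` form described on the developer site, and the package name and function names are hypothetical.

```python
from html import escape
from urllib.parse import urlsplit


def android_app_uri(package_id: str, deep_link: str) -> str:
    """Build an app URI, assuming android-app://{package}/{scheme}/{host_path}."""
    parts = urlsplit(deep_link)
    host_path = parts.netloc + parts.path
    if parts.query:
        host_path += "?" + parts.query
    return f"android-app://{package_id}/{parts.scheme}/{host_path}"


def alternate_app_link(package_id: str, deep_link: str) -> str:
    """Link element to place in the head of the corresponding web page."""
    uri = android_app_uri(package_id, deep_link)
    return f'<link rel="alternate" href="{escape(uri)}">'


if __name__ == "__main__":
    # "com.example.android" is a placeholder package name.
    print(alternate_app_link("com.example.android", "http://example.com/gizmos"))
```

The deep link passed in should be one your app actually handles, so that tapping the result opens the matching content.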

For more details on implementation and for information on how to sign up, visit our developer site. As always, if you have any questions, please ask in the mobile section of our webmaster forum.

Posted by Lawrence Chang, Product Manager

Easier recovery for hacked sites

We know that as a site owner, discovering your site is hacked with spam or malware is stressful, and trying to clean it up under a time constraint can be very challenging. We’ve been working to make recovery even easier and streamline the cleaning process — we notify webmasters when the software they’re running on their site is out of date, and we’ve set up a dedicated help portal for hacked sites with detailed articles explaining each step of the process to recovery, including videos.

Today, we’re happy to introduce a new feature in Webmaster Tools called Security Issues.

As a verified site owner, you’ll be able to:

- Find more information about the security issues on your site, in one place.

- Pinpoint the problem faster with detailed code snippets.

- Request review for all issues in one go through the new simplified process.

Find more information about the security issues on your site, in one place

Now, when we’ve detected your site may have been hacked with spam or with malware, we’ll show you everything in the same place for easy reference. Information that was previously available in the Malware section of Webmaster Tools, as well as new information about spam inserted by hackers, is now available in Security Issues. On the Security Issues main page, you’ll see the type of hacking, sample URLs if available, and the date when we last detected the issue.

Pinpoint the problem faster with detailed code snippets

Whenever possible, we’ll try to show you HTML and JavaScript code snippets from the hacked URLs and list recommended actions to help you clean up the specific type of hacking we’ve identified.

Request review for all issues in one go

We’ve also simplified requesting a review. Once you’ve cleaned your site and closed the security holes, you can request a review for all issues with one click of a button straight from the Security Issues page.

If you need more help, our updated and expanded help for hacked sites portal is now available in 22 languages. Let us know what you think in the comments here or at the Webmaster Help Forum.

Posted by Meenali Rungta, Webspam Team and Hadas Fester, Webmaster Tools Team

Video: Expanding your site to more languages

Webmaster Level: Intermediate to Advanced

We filmed a video providing more details about expanding your site to more languages or country-based language variations. The video covers details about rel=”alternate” hreflang and potential implementation on your multilingual and/or multinational site.

You can watch the entire video or skip to the relevant sections:

- Potential search issues with international sites

- Questions to ask within your company before beginning international expansion

- International site use cases

- rel=”alternate” hreflang and hreflang=”x-default”: details and implementation

- Best practices
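As a small illustration of the rel="alternate" hreflang implementation covered in the video, the following sketch generates the link elements for a set of language variants plus an x-default entry. The function name and the example.com URLs are assumptions made for the example.

```python
from html import escape


def hreflang_links(alternates: dict, default_url: str) -> list:
    """Return link elements for each language variant plus x-default."""
    links = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}">'
        for code, url in sorted(alternates.items())
    ]
    links.append(
        f'<link rel="alternate" hreflang="x-default" href="{escape(default_url)}">'
    )
    return links


if __name__ == "__main__":
    variants = {
        "en": "http://example.com/en/",
        "fr": "http://example.com/fr/",
    }
    for link in hreflang_links(variants, "http://example.com/"):
        print(link)
```

The same full set of elements would go on every variant of the page, so that each version references itself and all of its alternates.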

Additional resources on hreflang include:

- Webmaster Help Center article on rel=”alternate” hreflang and hreflang=”x-default”

- More blog posts

- Working with multilingual sites

- Working with multiregional sites

- New markup for multilingual content

- Introducing “x-default hreflang” for international landing pages

- Webmaster discussion forum FAQ on internationalization

- Webmaster discussion forum for internationalization (review answers or post your own question!)

Good luck as you expand your site to more languages!

Written by Maile Ohye, Developer Programs Tech Lead

Better backlink data for site owners

Webmaster level: intermediate

In recent years, our free Webmaster Tools product has provided roughly 100,000 backlinks when you click the “Download more sample links” button. Until now, we’ve selected those links primarily by lexicographical order. Th…

rel=”author” frequently asked (advanced) questions

Webmaster Level: Intermediate to Advanced

Using authorship helps searchers discover great information by highlighting content from authors who they might find interesting. If you’re an author, signing up for authorship will help users recognize content that you’ve written. Additionally, searchers can click the byline to see more articles you’ve authored or to follow you on Google+. It’s that simple! Well, except for several advanced questions that we’d like to help answer…

Authorship featured in search results from one of my favorite authors, John Mueller

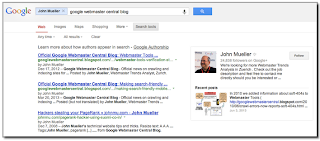

Clicking the author’s byline in search results can reveal more articles and a Google+ profile

Recent authorship questions

1. What kind of pages can be used with authorship?

Good question! You can increase the likelihood that we show authorship for your site by only using authorship markup on pages that meet these criteria:

- The URL/page contains a single article (or subsequent versions of the article) or single piece of content, by the same author. This means that the page isn’t a list of articles or an updating feed. If the author frequently switches on the page, then the annotation is no longer helpful to searchers and is less likely to be featured.

- The URL/page consists primarily of content written by the author.

- The URL/page shows a clear byline stating that the author wrote the article, using the same name as on their Google+ profile.

2. Can I use a company mascot as an author and have authorship annotation in search results? For my pest control business, I’d like to write as the “Pied Piper.”

You’re free to write articles in the manner you prefer — your users may really like the Pied Piper idea. However, for authorship annotation in search results, Google prefers to feature a human who wrote the content. By doing so, authorship annotation better indicates that a search result is the perspective of a person, and this helps add credibility for searchers.

Again, because currently we want to feature people, link authorship markup to an individual’s profile rather than linking to a company’s Google+ Page.

3. If I use authorship on articles available in different languages, such as example.com/en/article1.html for English and example.com/fr/article1.html for the French translation, should I link to two separate author/Google+ profiles written in each language?

In your scenario, both articles, example.com/en/article1.html and example.com/fr/article1.html, should link to the same Google+ profile in the author’s language of choice.

4. Is it possible to add two authors for one article?

In the current search user interface, we only support one author per article, blog post, etc. We’re still experimenting to find the optimal outcome for searchers when more than one author is specified.

5. How can I prevent Google from showing authorship?

The fastest way to prevent authorship annotation is to make the author’s Google+ profile not discoverable in search results. Otherwise, if you still want to keep your profile in search results, then you can remove any profile or contributor links to the website, or remove the markup so that it no longer connects with your profile.

6. What’s the difference between rel=author and rel=publisher?

rel=publisher helps a business create a shared identity by linking the business’ website (often from the homepage) to the business’ Google+ Page. rel=author helps individuals (authors!) associate their individual articles from a URL or website to their Google+ profile. While rel=author and rel=publisher are both link relationships, they’re actually completely independent of one another.
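The two relationships can be sketched side by side. This is only an illustration under assumptions: it uses the `rel` attribute form of each link, and the Google+ URLs and function names are placeholders rather than real profiles or an official API.

```python
from html import escape

# Placeholder URLs; substitute the real profile and Page addresses.
AUTHOR_PROFILE_URL = "https://plus.google.com/AUTHOR_PROFILE_ID"
BUSINESS_PAGE_URL = "https://plus.google.com/BUSINESS_PAGE_ID"


def author_byline(author_name: str, profile_url: str) -> str:
    """Byline on an article, linking to the individual author's profile."""
    return (
        f'By <a rel="author" href="{escape(profile_url)}">'
        f"{escape(author_name)}</a>"
    )


def publisher_link(page_url: str) -> str:
    """Link element, often on the homepage, pointing at the business' Page."""
    return f'<link rel="publisher" href="{escape(page_url)}">'


if __name__ == "__main__":
    print(author_byline("Maile Ohye", AUTHOR_PROFILE_URL))
    print(publisher_link(BUSINESS_PAGE_URL))
```

Because the two are independent, a site can use either one without the other: the byline belongs on individual articles, while the publisher link identifies the business as a whole.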

7. Can I use authorship on my site’s property listings or product pages since one of my employees has customized the description?

Authorship annotation is useful to searchers because it signals that a page conveys a real person’s perspective or analysis on a topic. Since property listings and product pages are less perspective/analysis oriented, we discourage using authorship in these cases. However, an article about products that provides helpful commentary, such as, “Camera X vs. Camera Y: Faceoff in the Arizona Desert” could have authorship.

If you have additional questions, check the Webmaster Forum, and post your question there if you don’t see it covered.

Written by Maile Ohye, Developer Programs Tech Lead

Making smartphone sites load fast

Webmaster level: Intermediate

Users tell us they use smartphones to search online because it’s quick and convenient, but today’s average mobile page typically takes more than 7 seconds to load. Wouldn’t it be great if mobile pages loaded in under one second? Today we’re announcing new guidelines and an updated PageSpeed Insights tool to help webmasters optimize their mobile pages for best rendering performance.

Prioritizing above-the-fold content

Research shows that users’ flow is interrupted if pages take longer than one second to load. To deliver the best experience and keep the visitor engaged, our guidelines focus on rendering some content, known as the above-the-fold content, to users in one second (or less!) while the rest of the page continues to load and render in the background. The above-the-fold HTML, CSS, and JS is known as the critical rendering path.

We can achieve sub-second rendering of the above-the-fold content on mobile networks by applying the following best practices:

- Server must render the response (< 200 ms)

- Number of redirects should be minimized

- Number of roundtrips to first render should be minimized

- Avoid external blocking JavaScript and CSS in above-the-fold content

- Reserve time for browser layout and rendering (200 ms)

- Optimize JavaScript execution and rendering time
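The budget implied by the list above can be worked through with simple arithmetic. In this sketch, the one-second target and the two 200 ms figures come from the guidelines above; treating them as a simple additive budget and the round-trip time passed in are assumptions made for illustration.

```python
TARGET_MS = 1000          # Render above-the-fold content within one second.
SERVER_RESPONSE_MS = 200  # Budget for the server to render the response.
CLIENT_RENDER_MS = 200    # Time reserved for browser layout and rendering.


def network_budget_ms() -> int:
    """Time left for redirects and network round trips before first render."""
    return TARGET_MS - SERVER_RESPONSE_MS - CLIENT_RENDER_MS


def max_round_trips(round_trip_ms: int) -> int:
    """How many full round trips fit in the remaining network budget."""
    if round_trip_ms <= 0:
        raise ValueError("round_trip_ms must be positive")
    return network_budget_ms() // round_trip_ms


if __name__ == "__main__":
    print(network_budget_ms())
    # 200 ms is a hypothetical mobile round-trip time, not a measured value.
    print(max_round_trips(200))
```

With so little network time available, each extra redirect or blocking external resource uses up a round trip, which is why the list asks you to minimize both.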

These are explained in more detail in the mobile-specific help pages, and, when you’re ready, you can test your pages and the improvements you make using the PageSpeed Insights tool.

As always, if you have any questions or feedback, please post in our discussion group.

Posted by Bryan McQuade, Software Engineer, and Pierre Far, Webmaster Trends Analyst