
Evaluating page experience for a better web

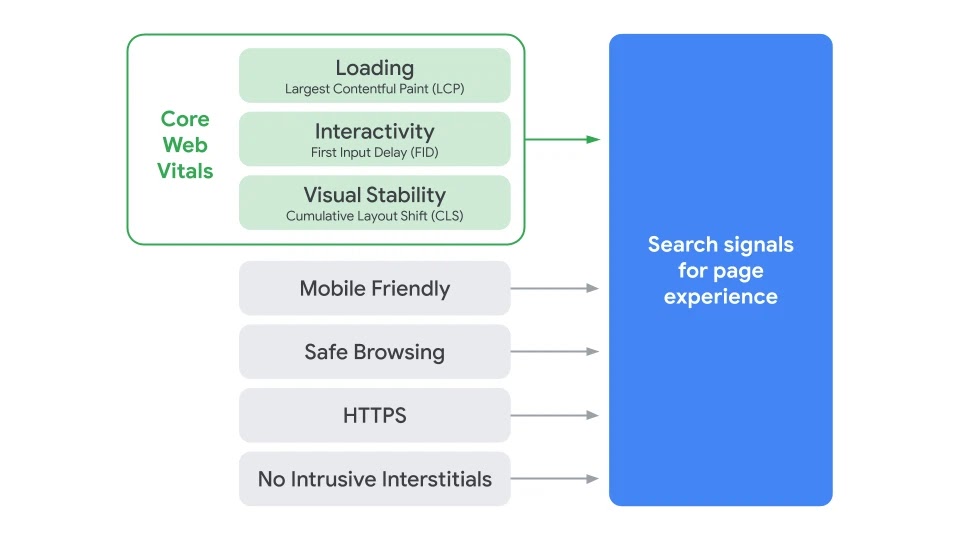

Through both internal studies and industry research, users show they prefer sites with a great page experience. In recent years, Search has added a variety of user experience criteria, such as how quickly pages load and mobile-friendliness, as factors for ranking results. Earlier this month, the Chrome team announced Core Web Vitals, a set of metrics related to speed, responsiveness and visual stability, to help site owners measure user experience on the web.

Today, we’re building on this work and providing an early look at an upcoming Search ranking change that incorporates these page experience metrics. We will introduce a new signal that combines Core Web Vitals with our existing signals for page experience to provide a holistic picture of the quality of a user’s experience on a web page.

As part of this update, we’ll also incorporate the page experience metrics into our ranking criteria for the Top Stories feature in Search on mobile, and remove the AMP requirement from Top Stories eligibility. Google continues to support AMP, and will continue to link to AMP pages when available. We’ve also updated our developer tools to help site owners optimize their page experience.

A note on timing: We recognize many site owners are rightfully placing their focus on responding to the effects of COVID-19. The ranking changes described in this post will not happen before next year, and we will provide at least six months’ notice before they’re rolled out. We’re providing the tools now to get you started (and because site owners have consistently requested to know about ranking changes as early as possible), but there is no immediate need to take action.

About page experience

The page experience signal measures aspects of how users perceive the experience of interacting with a web page. Optimizing for these factors makes the web more delightful for users across all web browsers and surfaces, and helps sites evolve towards user expectations on mobile. We believe this will contribute to business success on the web as users grow more engaged and can transact with less friction.

Core Web Vitals are a set of real-world, user-centered metrics that quantify key aspects of the user experience. They measure dimensions of web usability such as load time, interactivity, and the stability of content as it loads (so you don’t accidentally tap that button when it shifts under your finger – how annoying!).
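
As a rough illustration of how a site owner might read these metrics, the sketch below rates a page's measurements. The metric names (LCP, FID, CLS) and the threshold values are assumptions based on the public Core Web Vitals guidance from the time of this announcement, not something this post specifies, and the helper names `rate_metric` and `rate_page` are hypothetical.

```python
from typing import Dict

# Assumed thresholds (good, poor) from public Core Web Vitals guidance;
# they are not stated in this post and may change over time.
THRESHOLDS = {
    "LCP": (2.5, 4.0),      # Largest Contentful Paint, in seconds
    "FID": (100.0, 300.0),  # First Input Delay, in milliseconds
    "CLS": (0.1, 0.25),     # Cumulative Layout Shift, unitless score
}


def rate_metric(name: str, value: float) -> str:
    """Rate one measurement as good, needs improvement, or poor."""
    good, poor = THRESHOLDS[name]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


def rate_page(measurements: Dict[str, float]) -> Dict[str, str]:
    """Rate every measurement collected for a page."""
    return {name: rate_metric(name, value) for name, value in measurements.items()}


if __name__ == "__main__":
    print(rate_page({"LCP": 2.1, "FID": 180.0, "CLS": 0.31}))
```

Real measurements should come from field data or tools such as Lighthouse and PageSpeed Insights; the numbers in the usage line are made up.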

We’re combining the signals derived from Core Web Vitals with our existing Search signals for page experience, including mobile-friendliness, safe-browsing, HTTPS-security, and intrusive interstitial guidelines, to provide a holistic picture of page experience. Because we continue to work on identifying and measuring aspects of page experience, we plan to incorporate more page experience signals on a yearly basis to both further align with evolving user expectations and increase the aspects of user experience that we can measure.

Page experience ranking

Great page experiences enable people to get more done and engage more deeply; in contrast, a bad page experience could stand in the way of a person being able to find the valuable information on a page. By adding page experience to the hundreds of signals that Google considers when ranking search results, we aim to help people more easily access the information and web pages they’re looking for, and support site owners in providing an experience users enjoy.

For some developers, understanding how their sites measure on the Core Web Vitals—and addressing noted issues—will require some work. To help out, we’ve updated popular developer tools such as Lighthouse and PageSpeed Insights to surface Core Web Vitals information and recommendations, and Google Search Console provides a dedicated report to help site owners quickly identify opportunities for improvement. We’re also working with external tool developers to bring Core Web Vitals into their offerings.

While all of the components of page experience are important, we will prioritize pages with the best information overall, even if some aspects of page experience are subpar. A good page experience doesn’t override having great, relevant content. However, in cases where there are multiple pages that have similar content, page experience becomes much more important for visibility in Search.

Page experience and the mobile Top Stories feature

The mobile Top Stories feature is a premier fresh content experience in Search that currently emphasizes AMP results, which have been optimized to exhibit a good page experience. Over the past several years, Top Stories has inspired new thinking about the ways we could promote better page experiences across the web.

When we roll out the page experience ranking update, we will also update the eligibility criteria for the Top Stories experience. AMP will no longer be necessary for stories to be featured in Top Stories on mobile; it will be open to any page. Alongside this change, page experience will become a ranking factor in Top Stories, in addition to the many factors assessed. As before, pages must meet the Google News content policies to be eligible. Site owners who currently publish pages as AMP, or with an AMP version, will see no change in behavior – the AMP version will be what’s linked from Top Stories.

Summary

We believe user engagement will improve as experiences on the web get better — and that by incorporating these new signals into Search, we’ll help make the web better for everyone. We hope that sharing our roadmap for the page experience updates and launching supporting tools ahead of time will help the diverse ecosystem of web creators, developers, and businesses to improve and deliver more delightful user experiences.

Frequently asked questions about JavaScript and links

We get lots of questions every day – in our Webmaster office hours, at conferences, in the webmaster forum and on Twitter. One of the more frequent themes among these questions is links, especially those generated through JavaScript.

In our Webmaster Conference Lightning Talks video series, we recently addressed the most frequently asked questions on Links and JavaScript:

Note: This video has subtitles in many languages available, too.

During the live premiere, we also had a Q&A session with a few additional questions from the community and decided to publish those questions and our answers along with some other frequently asked questions around the topic of links and JavaScript.

What kinds of links can Googlebot discover?

Googlebot parses the HTML of a page, looking for links to discover the URLs of related pages to crawl. To discover these pages, you need to make your links actual HTML links, as described in the webmaster guidelines on links.

What kind of URLs are okay for Googlebot?

Googlebot extracts the URLs from the href attribute of your links and then enqueues them for crawling. This means that the URL needs to be resolvable; simply put, the URL should work when put into the address bar of a browser. See the webmaster guidelines on links for more information.
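
To make the two answers above concrete, here is a minimal sketch of link discovery using only the Python standard library. It is not Googlebot's parser; the class `LinkExtractor`, the function `extract_links`, and the sample markup are hypothetical. It collects `href` values from `<a>` elements, resolves them against the page URL, and keeps only those that resolve to an http(s) URL.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collects href values from <a> elements, resolved against a base URL."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.urls = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return  # click handlers on other elements are not HTML links
        href = dict(attrs).get("href")
        if not href:
            return  # an <a> without href gives nothing to resolve
        absolute = urljoin(self.base_url, href)
        # Keep only URLs that would work in a browser's address bar.
        if urlparse(absolute).scheme in ("http", "https"):
            self.urls.append(absolute)


def extract_links(html, base_url):
    parser = LinkExtractor(base_url)
    parser.feed(html)
    return parser.urls


SAMPLE = """
<a href="/products">Products</a>
<a href="https://example.com/about">About</a>
<a href="javascript:goTo('cart')">Cart</a>
<span onclick="goTo('contact')">Contact</span>
<a>No href</a>
"""

if __name__ == "__main__":
    print(extract_links(SAMPLE, "https://example.com/"))
```

In this sample only the first two links are picked up; the `javascript:` pseudo-link, the `<span>` with a click handler, and the `<a>` without an `href` are skipped.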

Is it okay to use JavaScript to create and inject links?

Yes, as long as these links fulfill the criteria in our webmaster guidelines and those outlined above.

When Googlebot renders a page, it executes JavaScript and then discovers the links generated from JavaScript, too. It’s worth mentioning that link discovery can happen twice: before and after JavaScript is executed. Having your links in the initial server response therefore allows Googlebot to discover them a bit faster.

Does Googlebot understand fragment URLs?

Fragment URLs, also known as “hash URLs”, are technically fine, but might not work the way you expect with Googlebot.

Fragments are supposed to be used to address a piece of content within the page and when used for this purpose, fragments are absolutely fine.

Sometimes developers decide to use fragments with JavaScript to load different content than what is on the page without the fragment. That is not what fragments are meant for and won’t work with Googlebot. See the JavaScript SEO guide on how the History API can be used instead.
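
One way to see the problem with fragment-based routing: the fragment is never sent to the server as part of an HTTP request, so URLs that differ only in their fragment address the same resource. The sketch below uses the standard library's `urldefrag` to show this; the example URLs and the helper `request_target` are hypothetical, and the code illustrates URL semantics rather than Googlebot's internals.

```python
from urllib.parse import urldefrag


def request_target(url):
    """Return the URL without its fragment, the part a client actually requests."""
    return urldefrag(url).url


# Hypothetical routes of a single-page app using fragments to switch content.
fragment_routes = [
    "https://example.com/#/products",
    "https://example.com/#/about",
]

# The same views exposed as real paths, as the History API makes possible.
history_api_routes = [
    "https://example.com/products",
    "https://example.com/about",
]

if __name__ == "__main__":
    print({request_target(u) for u in fragment_routes})     # one distinct URL
    print({request_target(u) for u in history_api_routes})  # two distinct URLs
```

Both fragment routes collapse to the same requested URL, while the path-based routes stay distinct and can each be crawled on their own.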

Does Googlebot still use the AJAX crawling scheme?

The AJAX crawling scheme has long been deprecated. Do not rely on it for your pages.

We recommend using the History API and migrating your web apps to URLs that do not rely on fragments to load different content.

Stay tuned for more Webmaster Conference Lightning Talks

This post was inspired by the first installment of the Webmaster Conference Lightning Talks, but make sure to subscribe to our YouTube channel for more videos to come! We definitely recommend joining the premieres on YouTube to participate in the live chat and Q&A session for each episode!

If you are interested in seeing more Webmaster Conference Lightning Talks, check out the video Google Monetized Policies and subscribe to our channel to stay tuned for the next one!

Join the webmaster community in the upcoming video premieres and in the YouTube comments!

Posted by Martin Splitt, Googlebot whisperer at the Google Search Relations team

Google Translate’s Website Translator – available for non-commercial use

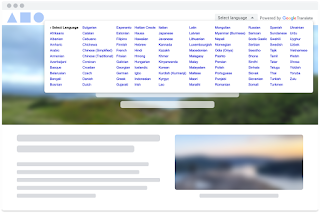

In this time of a global pandemic, webmasters across the world—from government officials to health organizations—are frequently updating their websites with the latest information meant to help fight the spread of COVID-19 and provide access to resources. However, they often lack the time or funding to translate this content into multiple languages, which can prevent valuable information from reaching a diverse set of readers. Additionally, some content may only be available via a file, e.g. a .pdf or .doc, which requires additional steps to translate.

To help these webmasters reach more users, we’re reopening access to the Google Translate Website Translator—a widget that translates web page content into 100+ different languages. It uses our latest machine translation technology, is easy to integrate, and is free of charge. To start using the Website Translator widget, sign up here.

Please note that usage will be restricted to government, non-profit, and/or non-commercial websites (e.g. academic institutions) that focus on COVID-19 response. For all other websites, we recommend using the Google Cloud Translation API.

Google Translate also offers both webmasters and their readers a way to translate documents hosted on a website. For example, if you need to translate this PDF file into Spanish, go to translate.google.com and enter the file’s URL into the left-hand textbox, then choose “Spanish” as the target language on the right. The link shown in the right-hand textbox will take you to the translated version of the PDF file. The following file formats are supported: .doc, .docx, .odf, .pdf, .ppt, .pptx, .ps, .rtf, .txt, .xls, and .xlsx.

Finally, it’s very important to note that while we continuously look for ways to improve the quality of our translations, they may not be perfect – so please use your best judgement when reading any content translated via Google Translate.

Posted by Xinxing Gu, Product Manager/Mountain View, CA, and Michal Lahav, User Experience Research/ Seattle, WA

New reports for Guided Recipes on Assistant in Search Console

Over the past two years, Google Assistant has helped users around the world cook yummy recipes and discover new favorites. During this time, web site owners have had to wait for Google to reprocess their web pages before they could see their updates on the Assistant. Along the way, we’ve heard many of you say you want an easier and faster way to know how the Assistant will guide users through a recipe.

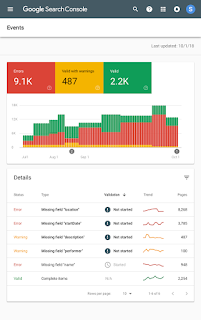

We are happy to announce support for Guided Recipes in Search Console as well as in the Rich Results Test tool. This will allow you to instantly validate the markup for individual recipes, as well as discover any issues with all of the recipes on your site.
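
For readers who have not written recipe markup before, the sketch below builds a small schema.org `Recipe` object with step-by-step instructions and wraps it in a JSON-LD script tag that could be pasted into the Rich Results Test. The property set here is deliberately minimal and is an assumption; Google's Guided Recipes documentation lists further required and recommended properties. The function names and the sample recipe are hypothetical.

```python
import json


def build_recipe_jsonld(name, ingredients, steps):
    """Build a minimal schema.org Recipe with one HowToStep per instruction."""
    return {
        "@context": "https://schema.org",
        "@type": "Recipe",
        "name": name,
        "recipeIngredient": list(ingredients),
        "recipeInstructions": [
            {"@type": "HowToStep", "text": step} for step in steps
        ],
    }


def to_script_tag(data):
    """Serialize structured data as a JSON-LD <script> element."""
    # Escape "</" so text inside the JSON cannot close the script element early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


if __name__ == "__main__":
    recipe = build_recipe_jsonld(
        "Simple pancakes",
        ["200 g flour", "2 eggs", "300 ml milk"],
        ["Whisk all ingredients into a smooth batter.", "Fry in a hot pan."],
    )
    print(to_script_tag(recipe))
```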

Guided Recipes Enhancement report

In Search Console, you can now view a Rich Result Status Report with the errors, warnings, and fully valid pages found among your site’s recipes. It also includes a check box to show trends on search impressions, which can help in understanding the impact of your rich results appearances.

In addition, if you find an issue and fix it, you can use the report to notify Google that your page has changed and should be recrawled.

Image: Guided Recipes Enhancement report

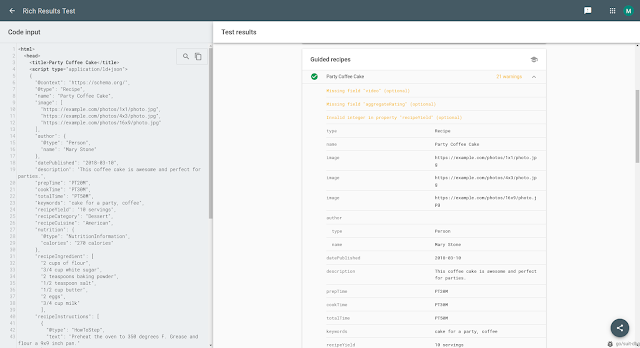

Guided Recipes in Rich Results Test

Add Guided Recipe structured data to a page and submit its URL – or just test a code snippet within the Rich Results Test tool. The test results show any errors or suggestions for your structured data as can be seen in the screenshot below.

Image: Guided Recipes in Rich Results Test

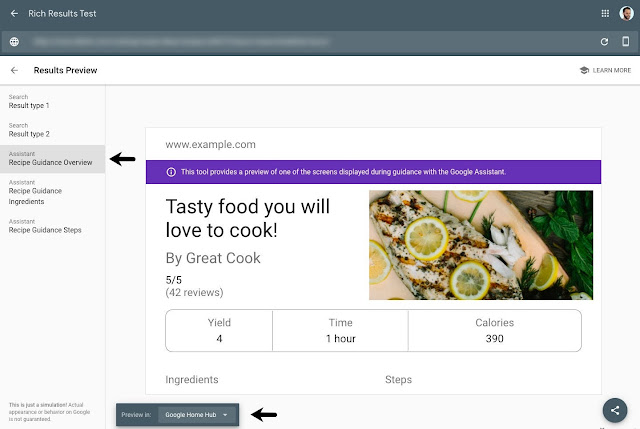

You can also use the Preview tool in the Rich Results Test to see how Assistant guidance for your recipe can appear on a smart display. You can find any issues with your markup before publishing it.

Image: Guided Recipes preview in the Rich Results Test

Let us know if you have any comments, questions or feedback either through the Webmasters help community or Twitter.

Posted by Earl J. Wagner, Software Engineer and Moshe Samet, Search Console Product Manager

Reintroducing a community for Polish & Turkish Site Owners

Google Webmaster Help forums are a great place for website owners to help each other, engage in friendly discussion, and get input from awesome Product Experts. We currently have forums operating in 12 languages.

We’re happy to announce the re-opening of the Polish and Turkish webmaster communities with support from a global team of Community Specialists dedicated to helping support Product Experts. If you speak Turkish or Polish, we’d love to have you drop by the new forums yourself. Perhaps there’s a question or a challenge you can help with as well!

Current Webmaster Product Experts are welcome to join & keep their status in the new communities as well. If you have previously contributed and would like to start contributing again, you can start posting again, and feel free to ask others in the community if you have any questions.

We look forward to seeing you there!

Posted by Aaseesh Marina, Webmaster Product Support Manager

We are bringing back the community for site owners from Poland and Turkey

The Google Webmaster Help forums are a place where site owners can help each other, join discussions, and get tips from Product Experts. Our forums currently operate in 12 languages.

We are pleased that, with the help of a global team of Community Specialists dedicated to supporting Product Experts, we can bring back the webmaster communities in Polish and Turkish. If you speak either of these languages, drop by our new forums. Perhaps you will find a problem there that you can solve?

We invite current Google Webmaster Product Experts to join the new communities while keeping their existing status. If you like, you can return to posting. If you have questions, ask other members of the community.

See you there!

Posted by Aaseesh Marina, Webmaster Product Support Manager

New reports for Special Announcements in Search Console

Last month we introduced a new way for sites to highlight COVID-19 announcements on Google Search. At first, we’re using this information to highlight announcements in Google Search from health and government agency sites, to cover important updates like school closures or stay-at-home directives.

Today we are announcing support for SpecialAnnouncement in Google Search Console, including new reports to help you find any issues with your implementation and monitor how this rich result type is performing. In addition we now also support the markup on the Rich Results Test to review your existing URLs or debug your markup code before moving it to production.

Special Announcements Enhancement report

A new report is now available in Search Console for sites that have implemented SpecialAnnouncement structured data. The report allows you to see errors, warnings, and valid pages for markup implemented on your site.

In addition, if you fix an issue, you can use the report to validate it, which will trigger a process where Google recrawls your affected pages. Learn more about the Rich result status reports.

Image: Special Announcements Enhancement report

Special Announcements appearance in Performance report

The Search Console Performance report now also allows you to see the performance of your SpecialAnnouncement marked-up pages on Google Search. This means that you can check the impressions, clicks and CTR results of your special announcement pages and check their performance to understand how they are trending for any of the dimensions available. Learn more about the Search appearance tab in the performance report.

Image: Special Announcements appearance in Performance report

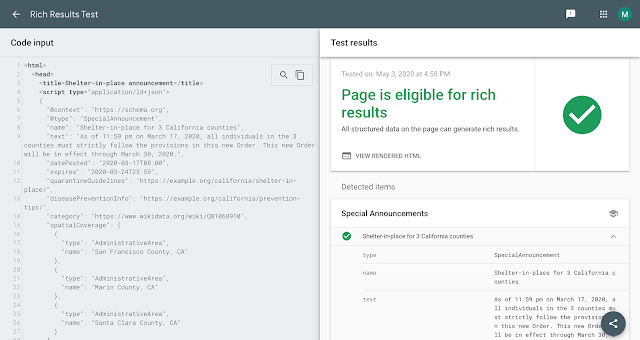

Special Announcements in Rich Results Test

After adding SpecialAnnouncement structured data to your pages, you can test them using the Rich Results Test tool. If you haven’t published the markup on your site yet, you can also upload a piece of code to check the markup. The test shows any errors or suggestions for your structured data.

Image: Special Announcements in Rich Results Test

These new tools should make it easier to understand how your marked-up SpecialAnnouncement pages perform on Search and to identify and fix issues.

If you have any questions, check out the Google Webmasters community.

Posted by Daniel Waisberg, Search Advocate & Moshe Samet, Search Console PM.

Showcasing the value of SEO

Each year we attend dozens of events and reach thousands of people with our keynotes, talks, and Q&As. We go to conferences and meetups because we believe that our talks can help online businesses flourish. We get to help people with their search-related problems, and sometimes we also get to hear their success stories. It’s really uplifting when we hear that, by following our advice, they achieved something great!

We want people to hear about these success stories, so we’re starting a new blog post series that features case studies. They may, for example, help with convincing a boss’s boss that investing in SEO or implementing structured data can be good for the business.

In this first blog post we’re going to start with the overall basics of investing in Search Engine Optimization (SEO), and how investing in it helped a company.

We hope you’ll find this blog post useful. If you’re interested in contributing a case study, submit a talk proposal when signing up for a Webmaster Conference near you and we will consider featuring it. For more case studies and help content, head over to our developer site, help center, or YouTube channel. If you wanna get in touch with us, find us on Twitter.

Posted by Alice Kim and The Gary

Moon Tae Sung is an SEO Manager at Saramin, one of the largest job platforms in Korea. We had the opportunity to ask him a few questions about the effects of his team’s work on Google Search after a presentation he did at a Webmaster Conference in Seoul.

Saramin offers job posting recommendations, company and salary information, AI-based interviews, and AI-based headhunting services. According to Tae Sung, “people come to the Saramin site not only to look for jobs and submit applications, but to also gain a variety of information related to job searches and receive high-quality AI-based services for interview preparation.”

Saramin’s SEO process started with Google Search Console. In 2015 they verified the site in the tool and spent a year identifying and fixing crawling issues. “The task was simple, but still resulted in a 15% increase in the organic traffic”, Tae Sung said. The ROI prompted Saramin to invest more in SEO with the aim of even greater potential success. But first they needed to learn more about what else makes a site search engine friendly so they could better look for help resources. “We studied the Google Search developer’s guide and Help Center articles. These resources continue to provide up-to-date information for issues that we run into”, he told us.

SEO is a process that may take time to bear fruit, so they “started following the SEO guidelines more closely and implemented more changes. The goal was to make changes to the site so that Google Search would better understand it”, Tae Sung shared. They removed meta tags that were cluttered with unnecessary and unhelpful keywords, they used rel-canonical and removed duplicate content, and they explored the search gallery and applied applicable structured data, starting with Job Posting, Breadcrumb, and Estimated salary.

In addition, they used various Google tools as they worked on improving their site. “Errors on our structured data are dealt with by checking URLs on the Structured Data Testing Tool. Other tools like Mobile Friendly Test, AMP Test, and PageSpeed Insights provide us valuable insights for making improvements and helping us offer a better experience for our users,” said Tae Sung.

Over time, Saramin saw the red-colored errors on Search Console’s Index Coverage report gradually turning valid green, and they knew they were headed in the right direction. The incremental changes reached a tipping point and the traffic continued to rise at a more remarkable speed. In the peak hiring season of September 2019, traffic doubled compared to the previous year.

“We are very happy about the traffic increase, but what’s more exciting is it also accompanied improvement in the quality of the traffic. We saw a 93% increase in the number of new sign ups and a 9% increase on the conversion. We believe this means Saramin’s optimization work was found delightful by our users,” said Tae Sung.

Saramin continues to invest in achieving their SEO goals. They’re trying to enhance their users’ experience by implementing more technologies and features from Google, and Tae Sung is enthusiastic about their work ahead: “This is only the beginning of our story.”

Looking back at last year’s Webmaster Conference Product Summit

As a part of the Webmaster Conference series, last fall we held a Product Summit at Google’s headquarters in Mountain View, California. It was slightly different from our previous events, with a number of product managers and engineers from Google Search taking part. We recorded the talks held there, and are happy to be able to make these available to all of you now.

In the playlist you’ll find:

- Web deduplication – How does Google recognize duplicate content across the web? What happens once a duplicate is found? How is a canonical URL selected? How does localization play a role?

- Google Images best practices – Take a look at how Google Images has evolved over the years, and learn about some of the best practices that you can implement on your site when it comes to images.

- Rendering – Find out more about rendering, and what it takes to do rendering of the web at scale. Take a look behind the scenes, and learn about some things a site owner could watch out for with regards to rendering.

- Titles, snippets, and result previews – What’s the goal of titles, snippets, and previews in Search? How do Google’s systems pick and generate a preview for a page? What are some of the elements that help users decide which page to click in Search?

- Googlebot & web hosting – Starting with a look at the popularity of different web servers, and the growth of HTTPS, you’ll find out more about how Google’s crawling for Search works, and what you can do to control it.

- Claim your Knowledge Panel – Knowledge Panels are a great way for people and organizations to be visible in Search. Find out more about the ways you can claim and update them for yourself or for your business.

- Improving Search over the years – Are dogs the same as cats? Should pages about New York be shown when searching for York? How could algorithms ever figure this out? How many 😊’s does it take to get Google’s attention? Google’s Paul Haahr takes you on a tour of some changes in Search.

We hope you find these videos insightful, useful, and a bit entertaining! And if you are not subscribed to the Webmasters YouTube channel, here’s your chance!

Posted by John Mueller, Search Advocate, Google Switzerland

Introducing a new way for sites to highlight COVID-19 announcements on Google Search

Due to the COVID-19 outbreak, many organizations and groups are publishing important coronavirus-related announcements that affect our everyday lives.

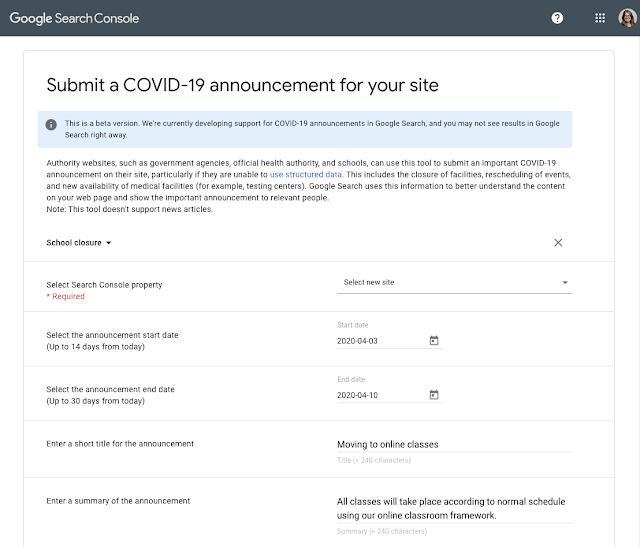

In response, we’re introducing a new way for these special announcements to be highlighted on Google Search. Sites can add SpecialAnnouncement structured data to their web pages or submit a COVID-19 announcement in Search Console.

At first, we’re using this information to highlight announcements in Google Search from health and government agency sites, to cover important updates like school closures or stay-at-home directives.

We are actively developing this feature, and we hope to expand it to include more sites. While we might not immediately show announcements from other types of sites, seeing the markup will help us better understand how to expand this feature.

Please note: beyond special announcements, there are a range of other options that sites can use to highlight information such as canceled events or changes to business hours. You can learn more about these at the end of this post.

How COVID-19 announcements appear in Search

When SpecialAnnouncement structured data is added to a page, that content can be eligible to appear with a COVID-19 announcement rich result, in addition to the page’s regular snippet description. A COVID-19 announcement rich result can contain a short summary that can be expanded to view more. Please note that the format may change over time, and you may not see results in Google Search right away.

How to implement your COVID-19 announcements

There are two ways that you can implement your COVID-19 announcements.

RECOMMENDED: Add structured data to your web page

Structured data is a standardized format for providing information about a page and classifying the page content. We recommend using this method because it is the easiest way for us to take in this information, it enables reporting through Search Console in the future, and enables you to make updates. Learn how to add structured data to COVID-19 announcements.
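
As a minimal sketch of what such markup can look like, the code below builds a schema.org `SpecialAnnouncement` object and prints it as JSON-LD. The selection of properties, the `category` value (taken from public schema.org examples), the function name, and the sample announcement are all assumptions for illustration; the linked documentation remains the reference for which properties Google Search requires.

```python
import json
from datetime import datetime, timedelta, timezone


def build_special_announcement(name, text, posted, valid_for_days):
    """Build a minimal schema.org SpecialAnnouncement as a Python dict."""
    expires = posted + timedelta(days=valid_for_days)
    return {
        "@context": "https://schema.org",
        "@type": "SpecialAnnouncement",
        "name": name,
        "text": text,
        "datePosted": posted.isoformat(),
        "expires": expires.isoformat(),
        # Assumed category value, as seen in public schema.org examples.
        "category": "https://www.wikidata.org/wiki/Q81068910",
    }


if __name__ == "__main__":
    announcement = build_special_announcement(
        name="School closure (hypothetical example)",
        text="All schools in the district are closed until further notice.",
        posted=datetime(2020, 4, 1, 8, 0, tzinfo=timezone.utc),
        valid_for_days=14,
    )
    print(json.dumps(announcement, indent=2))
```

Generating the markup from the same data that renders the page is one way to get the automatic updates described above, since the structured data changes whenever the page content does.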

ALTERNATIVE: Submit announcements in Search Console

If you don’t have the technical ability or support to implement structured data, you can submit a COVID-19 announcement in Search Console. This tool is still in beta testing, and you may see changes.

This method is not preferred and is intended only as a short-term solution. With structured data, your announcement highlights can automatically update when your pages change. With the tool, you’ll have to manually update announcements. Also, announcements made this way cannot be monitored through special reporting that will be made available through Search Console in the future.

If you do need to submit this way, you’ll need to first be verified in Search Console. Then you can submit a COVID-19 announcement.

More COVID-19 resources for sites from Google Search

Beyond special announcements markup, there are other ways you can highlight other types of activities that may be impacted because of COVID-19:

- Best practices for health and government sites: If you are a representative of a health or government website, and you have important information about coronavirus for the general public, here are some recommendations for how to make this information more visible on Google Search.

- Surface your common FAQs: If your site has common FAQs, adding FAQ markup can help Google Search surface your answers.

- Pausing your business online: See our blog post on how to pause your business online in a way that minimizes impacts with Google Search.

- Business hours & temporary closures: Review the guidance from Google My Business on how to change your business hours or indicate temporary closures or how to create COVID-19 posts.

- Events: If you hold events, look over the new properties for marking them virtual, postponed, or canceled.

- Knowledge Panels: Understand how to recommend changes to your Google knowledge panel (or how to claim it, if you haven’t already).

- Fix an overloaded server: Learn how to determine a server’s bottleneck, quickly fix the bottleneck, improve server performance, and prevent regressions.
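
For the Events item in the list above, the following sketch shows how existing schema.org `Event` markup might be updated when an event is canceled, postponed, or moved online. The `eventStatus`, `eventAttendanceMode`, and `VirtualLocation` terms are schema.org vocabulary; the helper `update_event`, the `STATUS` mapping keys, and the sample event are hypothetical.

```python
import json

# Map a plain-language change to the matching schema.org eventStatus value.
STATUS = {
    "canceled": "https://schema.org/EventCancelled",
    "postponed": "https://schema.org/EventPostponed",
    "moved_online": "https://schema.org/EventMovedOnline",
}


def update_event(event, change, online_url=None):
    """Return a copy of the event markup reflecting the given change."""
    updated = dict(event)
    updated["eventStatus"] = STATUS[change]
    if change == "moved_online":
        # A virtual event is attended online and located at a URL.
        updated["eventAttendanceMode"] = "https://schema.org/OnlineEventAttendanceMode"
        updated["location"] = {"@type": "VirtualLocation", "url": online_url}
    return updated


if __name__ == "__main__":
    event = {
        "@context": "https://schema.org",
        "@type": "Event",
        "name": "Spring concert (hypothetical example)",
        "startDate": "2020-05-02T19:00:00+02:00",
        "location": {"@type": "Place", "name": "Town hall"},
    }
    moved = update_event(event, "moved_online", "https://example.com/stream")
    print(json.dumps(moved, indent=2))
```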

If you have any questions or comments, please let us know on Twitter.

Posted by Lizzi Harvey, Technical Writer, Search Relations, and Danny Sullivan, Public Liaison for Search

Helping health organizations make COVID-19 information more accessible

Health organizations are busier than ever providing information to help with the COVID-19 pandemic. To better assist them, Google has created a best practices article to guide health organizations to make COVID-19 information more accessible on Search. We’ve also created a new technical support group for eligible health organizations.

Best practices for search visibility

By default, Google tries to show the most relevant, authoritative information in response to any search. This process is more effective when content owners help Google understand their content in appropriate ways.

To better guide health-related organizations in this process (known as SEO, for “search engine optimization”), we have produced a new help center article with some important best practices, with emphasis on health information sites, including:

- How to help users access your content on the go

- The importance of good page content and titles

- Ways to check how your site appears for coronavirus-related queries

- How to analyze the top coronavirus related user queries

- How to add structured data for FAQ content
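
To give a sense of the last item in the list, here is a minimal sketch that turns question and answer pairs into schema.org `FAQPage` markup. The function name `build_faq_page` and the sample question are hypothetical; the help center article remains the reference for eligibility and content guidelines.

```python
import json


def build_faq_page(pairs):
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


if __name__ == "__main__":
    faq = build_faq_page([
        ("Where can I get tested?", "See the list of testing sites on this page."),
    ])
    print(json.dumps(faq, indent=2))
```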

New support group for health organizations

In addition to our best practices help page, health organizations can take part in our new technical support group that’s focused on helping health organizations who publish COVID-19 information with Search related questions.

We’ll be approving requests for access on a case-by-case basis. At first we’ll be accepting only domains under national health ministries and US state level agencies. We’ll announce future expansions here in this blog post and on our Twitter account. You’ll need to either register using an email address under those domains (e.g. name@health.gov) or have access to the website’s Search Console account.

Fill out this form to request access to the COVID-19 Google Search group

The group was created to respond to the current needs of health organizations, and we intend to deprecate the group as soon as COVID-19 is no longer considered a Public Health Emergency by WHO or some similar de-escalation is widely in place.

Everyone is welcome to use our existing webmaster help forum, and if you have any questions or comments, please let us know on Twitter.

Posted by Daniel Waisberg, Search Advocate & Ofir Roval, Search Console Lead PM

How to pause your business online in Google Search

As the effects of the coronavirus grow, we’ve seen businesses around the world looking for ways to pause their activities online. With a view to coming back and being present for your customers later, here’s an overview of our recommendations on how to pause your business online and minimize the impact on Google Search. These recommendations are applicable to any business with an online presence, but particularly to those that have paused the selling of their products or services online. For more detailed information, also check our developer documentation.

Recommended: limit site functionality

If your situation is temporary and you plan to reopen your online business, we recommend keeping your site online and limiting its functionality. For example, you might mark items as out of stock, or restrict the cart and checkout process. This is the recommended approach since it minimizes any negative effects on your site’s presence in Search. People can still find your products, read reviews, or add items to wishlists so they can purchase at a later time.

It’s also a good practice to:

- Disable the cart functionality: Disabling the cart functionality is the simplest approach, and doesn’t change anything for your site’s visibility in Search.

- Tell your customers what’s going on: Display a banner or popup div with appropriate information for your users, so that they’re aware of the business’s status. Mention any known and unusual delays, shipping times, pick-up or delivery options, etc. upfront, so that users continue with the right expectations. Make sure to follow our guidelines on popups and banners.

- Update your structured data: If your site uses structured data (such as Products, Books, Events), make sure to adjust it appropriately (reflecting the current product availability, or changing events to cancelled). If your business has a physical storefront, update Local Business structured data to reflect current opening hours.

- Check your Merchant Center feed: If you use Merchant Center, follow the best practices for the availability attribute.

- Tell Google about your updates: To ask Google to recrawl a limited number of pages (for example, the homepage), use Search Console. For a larger number of pages (for example, all of your product pages), use sitemaps.

For more information, check our developer documentation.
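As a rough illustration of the structured data adjustment described above, the sketch below builds Product markup as JSON-LD and switches the offer's availability to out of stock. The function name, the sample product, and the choice of fields are assumptions for illustration; the `availability` values are the schema.org `InStock` and `OutOfStock` URLs. Check the developer documentation for the properties your pages actually need.

```python
import json


def product_jsonld(name: str, url: str, price: str, currency: str, in_stock: bool) -> str:
    """Return a JSON-LD string for a Product, reflecting current availability.

    Hypothetical helper: the field selection is a minimal assumption,
    not a complete list of required or recommended properties.
    """
    availability = (
        "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    )
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "url": url,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
            # The page stays online; only the availability changes.
            "availability": availability,
        },
    }
    return json.dumps(data, indent=2)
```

Calling `product_jsonld("Example kettle", "https://example.com/kettle", "29.99", "EUR", in_stock=False)` produces markup that can be embedded in a `<script type="application/ld+json">` element on the product page.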

Not recommended: disabling the whole website

As a last resort, you may decide to disable the whole website. This is an extreme measure that should only be taken for a very short period of time (a few days at most), as it will otherwise have significant effects on the website in Search, even when implemented properly. That’s why it’s highly recommended to only limit your site’s functionality instead. Keep in mind that your customers may also want to find information about your products, your services, and your company, even if you’re not selling anything right now.

If you decide that you need to do this (which, again, we don’t recommend), here are some options:

- If you need to urgently disable the site for 1-2 days, then return an informational error page with a 503 HTTP status code instead of all content. Make sure to follow the best practices for disabling a site.

- If you need to disable the site for a longer time, then provide an indexable homepage as a placeholder for users to find in Search by using the 200 HTTP status code.

- If you quickly need to hide your site in Search while you consider the options, you can temporarily remove it from Search.

For more information, check our developer documentation.
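For the short-term option above, a minimal sketch of a handler that answers every request with an informational page and a 503 status could look like this. It uses only Python's standard library; the page text, the port, and the one-hour `Retry-After` value are assumptions for illustration, and a real site would usually configure this in its web server or CDN instead.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

RETRY_AFTER_SECONDS = 3600  # assumed value; pick what matches your situation


def maintenance_response() -> tuple[int, dict[str, str], bytes]:
    """Build the status, headers, and body of the temporary error page."""
    body = (
        b"<html><body><h1>We are temporarily closed</h1>"
        b"<p>Please check back soon.</p></body></html>"
    )
    headers = {
        "Content-Type": "text/html; charset=utf-8",
        "Content-Length": str(len(body)),
        # Standard HTTP header hinting when the client may retry.
        "Retry-After": str(RETRY_AFTER_SECONDS),
    }
    return 503, headers, body


class MaintenanceHandler(BaseHTTPRequestHandler):
    """Answers every GET with the 503 page instead of the normal content."""

    def do_GET(self) -> None:
        status, headers, body = maintenance_response()
        self.send_response(status)
        for key, value in headers.items():
            self.send_header(key, value)
        self.end_headers()
        self.wfile.write(body)


def serve(port: int = 8080) -> None:
    """Run the maintenance server locally (blocks until interrupted)."""
    HTTPServer(("127.0.0.1", port), MaintenanceHandler).serve_forever()
```

Running `serve()` and requesting any path should show the informational page together with the 503 status, which you can confirm in your browser's developer tools.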

Proceed with caution: To elaborate why we don’t recommend disabling the whole website, here are some of the side effects:

- Your customers won’t know what’s happening with your business if they can’t find your business online at all.

- Your customers can’t find or read first-hand information about your business and its products & services. For example, reviews, specs, past orders, repair guides, or manuals won’t be findable. Third-party information may not be as correct or comprehensive as what you can provide. This often also affects future purchase decisions.

- Knowledge Panels may lose information, like contact phone numbers and your site’s logo.

- Search Console verification will fail, and you will lose all access to information about your business in Search. Aggregate reports in Search Console will lose data as pages are dropped from the index.

- Ramping back up after a prolonged period of time will be significantly harder if your website needs to be reindexed first. Additionally, it’s uncertain how long this would take, and whether the site would appear similarly in Search afterwards.

Other things to consider

Beyond the operation of your web site, there are other actions you might want to take to pause your online business in Google Search:

- If you hold events, look over the new properties for making them virtual, postponed or canceled.

- Review the guidance from Google My Business on how to change your business hours or indicate temporary closures.

- Review the resources from Google for Small Business on communicating with customers and employees, working remotely, and modifying advertising campaigns.

- Understand how to recommend changes to your Google knowledge panel (or how to claim it, if you haven’t already).
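For the event properties mentioned in the list above, here is a hedged sketch of how existing Event markup might be updated when an event is canceled, postponed, or moved online. The helper name and the status keys are hypothetical; the values are the schema.org `EventStatusType`, `OnlineEventAttendanceMode`, and `VirtualLocation` terms. Treat it as an illustration and confirm the details against the events documentation.

```python
import copy
from typing import Optional

# schema.org EventStatusType values, keyed by hypothetical short names.
EVENT_STATUS = {
    "canceled": "https://schema.org/EventCancelled",
    "postponed": "https://schema.org/EventPostponed",
    "rescheduled": "https://schema.org/EventRescheduled",
    "moved_online": "https://schema.org/EventMovedOnline",
}


def with_status(event: dict, status: str, online_url: Optional[str] = None) -> dict:
    """Return a copy of the Event markup with its status updated.

    The original dict is left untouched so the change is easy to revert.
    """
    updated = copy.deepcopy(event)
    updated["eventStatus"] = EVENT_STATUS[status]
    if status == "moved_online":
        updated["eventAttendanceMode"] = "https://schema.org/OnlineEventAttendanceMode"
        if online_url:
            updated["location"] = {"@type": "VirtualLocation", "url": online_url}
    return updated
```

For example, `with_status(event, "canceled")` keeps the original dates and venue in place and only adds the canceled status.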

Also be sure to keep up with the latest by following updates on Twitter from Google Webmasters at @GoogleWMC and Google My Business at @GoogleMyBiz.

FAQs

What if I only close the site for a few weeks?

Completely closing a site even for just a few weeks can have negative consequences on Google’s indexing of your site. We recommend limiting the site functionality instead. Keep in mind that users may also want to find information about your products, your services, and your company, even if you’re currently not selling anything.

What if I want to exclude all non-essential products?

That’s fine. Make sure that people can’t buy the non-essential products by limiting the site functionality.

Can I ask Google to crawl less during this time?

Yes, you can limit crawling with Search Console, though it’s not recommended for most cases. This may have some impact on the freshness of your results in Search. For example, it may take longer for Search to reflect that all of your products are currently not available. On the other hand, if Googlebot’s crawling causes critical server resource issues, this is a valid approach. We recommend setting a reminder for yourself to reset the crawl rate once you start planning to go back in business.

How do I get a page indexed or updated quickly?

To ask Google to recrawl a limited number of pages (for example, the homepage), use Search Console. For a larger number of pages (for example, all of your product pages), use sitemaps.
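To illustrate the sitemap route for a larger number of pages, the sketch below generates a sitemap with a `lastmod` date per URL, using the namespace from the sitemaps.org protocol. The function name and the example URLs are assumptions; you would still need to host the file and submit it, for example through Search Console.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, date]]) -> bytes:
    """Return sitemap XML (UTF-8 bytes) for (url, last-modified date) pairs."""
    # Serialize the sitemap namespace as the default namespace (no prefix).
    ET.register_namespace("", SITEMAP_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = loc
        # lastmod tells crawlers which pages changed, e.g. went out of stock.
        ET.SubElement(url, f"{{{SITEMAP_NS}}}lastmod").text = lastmod.isoformat()
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
```

A call such as `build_sitemap([("https://example.com/products/kettle", date(2020, 3, 26))])` returns bytes that can be written to `sitemap.xml`.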

What if I block a specific region from accessing my site?

Google generally crawls from the US, so if you block the US, Google Search generally won’t be able to access your site at all. We don’t recommend that you block an entire region from temporarily accessing your site; instead, we recommend limiting your site’s functionality for that region.

Should I use the Removals Tool to remove out-of-stock products?

No. People won’t be able to find first-hand information about your products on Search, and there might still be third-party information for the product that may be incorrect or incomplete. It’s better to still allow that page, and mark it out of stock. That way people can still understand what’s going on, even if they can’t purchase the item. If you remove the product from Search, people don’t know why it’s not there.

——–

We realize that any business closure is a big and stressful step, and not everyone will know what to do. If you notice afterwards that you could have done something differently, not everything is lost: we try to make our systems robust so that your site will be back in Search as quickly as possible. Like you, we’re hoping that this crisis ends as soon as possible. We hope that with this information, you’re able to have your online business up & running quickly when that time comes. Should you run into any problems or questions along the way, please don’t hesitate to use our public channels to get help.

Posted by John Mueller, working from home in Zurich, Switzerland

New properties for virtual, postponed, and canceled events

In the current environment and status of COVID-19 around the world, many events are being canceled, postponed, or moved to an online-only format. Google wants to show users the latest, most accurate information about your events in this fast-changing e…

Coronavirus (COVID-19) and Webmaster Conference

Last year we organized Webmaster Conference events in over 15 countries. The spirit of WMConf is reflected in that number: we want to reach regions that otherwise don’t get much search conference love. Recently we have been monitoring the coronavirus (COVID-19) situation and its impact on planning this year’s events.

To wit, with the growing concern around coronavirus and in line with the travel guidelines published by WHO, the CDC, and other organizations, we are postponing all Webmaster Conference events globally. While we’re hoping to organize events later this year, we’re also exploring other ways to reach targeted audiences.

We’re very sorry to delay the opportunity to connect in person, but we feel strongly that the safety and health of all attendees is the priority at this time. If you want to get notified about future events in your region, you can sign up to receive updates on the Webmaster Conference site. If you have questions or comments, catch us on Twitter!

Posted by Cherry Prommawin and Gary

Announcing mobile first indexing for the whole web

It’s been a few years since Google started working on mobile-first indexing – Google’s crawling of the web using a smartphone Googlebot. From our analysis, most sites shown in search results are good to go for mobile-first indexing, and 70% of those shown in our search results have already shifted over. To simplify, we’ll be switching to mobile-first indexing for all websites starting September 2020. In the meantime, we’ll continue moving sites to mobile-first indexing when our systems recognize that they’re ready.

When we switch a domain to mobile-first indexing, it will see an increase in Googlebot’s crawling while we update our index to the site’s mobile version. Depending on the domain, this change can take some time. Afterwards, we’ll still occasionally crawl with the traditional desktop Googlebot, but most crawling for Search will be done with our smartphone user-agent. The exact user-agent name used will match the Chromium version used for rendering.

In Search Console, there are multiple ways to check for mobile-first indexing. The status is shown on the settings page, as well as in the URL Inspection Tool, which reports it for the most recent crawl of a specific URL.

Our guidance on making all websites work well for mobile-first indexing continues to be relevant, for new and existing sites. In particular, we recommend making sure that the content shown is the same (including text, images, videos, links), and that metadata (titles and descriptions, robots meta tags) and all structured data are the same. It’s good to double-check these when a website is launched or significantly redesigned. In the URL Testing Tools you can easily check both desktop and mobile versions directly. If you use other tools to analyze your website, such as crawlers or monitoring tools, use a mobile user-agent if you want to match what Google Search sees.
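As one way to double-check the metadata parity described above, the following sketch compares the title, meta description, and robots meta tag of two HTML documents, for instance the responses your server gives to a desktop and a mobile user-agent. The class and function names are hypothetical, fetching the pages is left out, and content and structured data are not compared, so it is a starting point only.

```python
from html.parser import HTMLParser


class HeadSignals(HTMLParser):
    """Collects the title, meta description, and robots meta tag of a page."""

    def __init__(self) -> None:
        super().__init__()
        self.signals = {"title": "", "description": None, "robots": None}
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta":
            name = (attributes.get("name") or "").lower()
            if name in ("description", "robots"):
                self.signals[name] = attributes.get("content")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.signals["title"] += data.strip()


def extract_signals(html: str) -> dict:
    parser = HeadSignals()
    parser.feed(html)
    return parser.signals


def parity_diff(desktop_html: str, mobile_html: str) -> dict:
    """Return {signal: (desktop_value, mobile_value)} for every mismatch."""
    desktop = extract_signals(desktop_html)
    mobile = extract_signals(mobile_html)
    return {key: (desktop[key], mobile[key]) for key in desktop if desktop[key] != mobile[key]}
```

An empty result from `parity_diff` means the three signals match; any entry shows the desktop value first and the mobile value second.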

While we continue to support various ways of making mobile websites, we recommend responsive web design for new websites. We suggest not using separate mobile URLs (often called “m-dot”) because of issues and confusion we’ve seen over the years, both from search engines and users.

Mobile-first indexing has come a long way. It’s great to see how the web has evolved from desktop to mobile, and how webmasters have helped to allow crawling & indexing to match how users interact with the web! We appreciate all your work over the years, which has helped to make this transition fairly smooth. We’ll continue to monitor and evaluate these changes carefully. If you have any questions, please drop by our Webmaster forums or our public events.

Posted by John Mueller, Developer Advocate, Google Zurich

Best Practices for News coverage with Search

Having up-to-date information during large, public events is critical, as the landscape changes by the minute. This guide highlights some tools that news publishers can use to create a data rich and engaging experience for their users.

Add Article structured data to AMP pages

Adding Article structured data to your news, blog, and sports article AMP pages can make the content eligible for an enhanced appearance in Google Search results. Enhanced features may include placement in the Top stories carousel, host carousel, and Visual stories. Learn how to mark up your article.

You can now test and validate your AMP article markup in the Rich Results Test tool. Enter your page’s URL or a code snippet, and the Rich Result Test shows the AMP Articles that were found on the page (as well as other rich result types), and any errors or suggestions for your AMP Articles. You can also save the test history and share the test results.

We also recommend that you provide a publication date so that Google can expose this information in Search results, if this information is considered to be useful to the user.

Mark up your live-streaming video content

If you are live-streaming a video during an event, you can be eligible for a LIVE badge by marking your video with BroadcastEvent. We strongly recommend that you use the Indexing API to ensure that your live-streaming video content gets crawled and indexed in a timely way. The Indexing API allows any site owner to directly notify Google when certain types of pages are added or removed. This allows Google to schedule pages for a fresh crawl, which can lead to more relevant user traffic as your content is updated. For websites with many short-lived pages like livestream videos, the Indexing API keeps content fresh in search results. Learn how to get started with the Indexing API.
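To make the two steps above more concrete, the sketch below builds VideoObject markup with a BroadcastEvent and the JSON body of an Indexing API notification. The function names and sample values are assumptions; the endpoint and the `URL_UPDATED` / `URL_DELETED` types come from the Indexing API, which additionally requires an authorized service account that is not shown here. Follow the getting-started guide for the authentication setup.

```python
# Indexing API publish endpoint; requests must carry OAuth credentials.
INDEXING_ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"


def live_video_jsonld(name: str, description: str, thumbnail_url: str,
                      content_url: str, upload_date: str,
                      start: str, end: str) -> dict:
    """Return VideoObject markup flagged as a live broadcast.

    Dates are ISO 8601 strings, e.g. "2020-03-03T19:00:00+00:00".
    """
    return {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "thumbnailUrl": thumbnail_url,
        "contentUrl": content_url,
        "uploadDate": upload_date,
        "publication": {
            "@type": "BroadcastEvent",
            "isLiveBroadcast": True,
            "startDate": start,
            "endDate": end,
        },
    }


def indexing_notification(page_url: str, removed: bool = False) -> dict:
    """Return the JSON body to POST to INDEXING_ENDPOINT for one page."""
    return {"url": page_url, "type": "URL_DELETED" if removed else "URL_UPDATED"}
```

A publisher would typically send `indexing_notification(url)` when the livestream page goes up or changes, and again with `removed=True` once the page is taken down.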

For AMP pages: Update the cache and use components

Use the following to ensure your AMP content is published and up-to-date the moment news breaks.

Update the cache

When people click an AMP page, the Google AMP Cache automatically requests updates to serve fresh content for the next person once the content has been cached. However, if you want to force an update to the cache in response to a change in the content on the origin domain, you can send an update request to the Google AMP Cache. This is useful if your pages are changing in response to a live news event.

Use news-related AMP components

- <amp-live-list>: Add live content to your article and have it updated based on a source document. This is a great choice if you just want content to reload easily, without having to set up or configure additional services on your backend. Learn how to implement <amp-live-list>.

- <amp-script>: Run your own JavaScript inside of AMP pages. This flexibility means that anything you are publishing on your desktop or non-AMP mobile pages, you can bring over to AMP. <amp-script> supports WebSockets, interactive SVGs, and more. This allows you to create engaging news pages like election coverage maps, live graphs, and polls. As this is a newer feature, the AMP team is actively soliciting feedback on it. If for some reason it doesn’t work for your use case, let us know.

If you have any questions, let us know through the forum or on Twitter.

Posted by Patrick Kettner and Naina Raisinghani, AMP team

More & better data export in Search Console

We have heard users ask for better download capabilities in Search Console loud and clear – so we’re happy to let you know that more and better data is available to export.

You’ll now be able to download the complete information you see in almost all Search Console reports (instead of just specific table views). We believe this data will be much easier to read outside Search Console and to store for future reference (if needed). You’ll find a section at the end of this post describing other ways to use your Search Console data outside the tool.

Enhancement reports and more

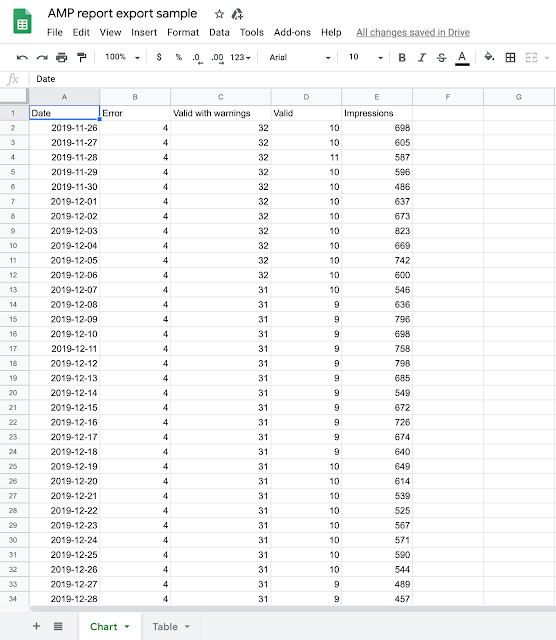

When exporting data from a report, for example AMP status, you’ll now be able to export the data behind the charts, not only the details table (as previously). This means that in addition to the list of issues and their affected pages, you’ll also see a daily breakdown of your pages, their status, and impressions received by them on Google Search results. If you are exporting data from a specific drill-down view, you can see the details describing this view in the exported file.

If you choose Google Sheets or Excel (new!) you’ll get a spreadsheet with two tabs, and if you choose to download as csv, you’ll get a zip file with two csv files.

Here is a sample dataset downloaded from the AMP status report. We changed the titles of the spreadsheet to be descriptive for this post, but the original title includes the domain name, the report, and the date of the export.

Performance report

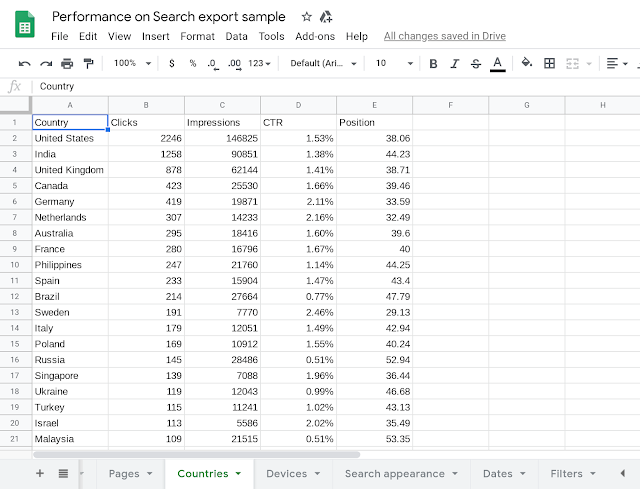

When it comes to Performance data, we have two improvements:

- You can now download the content of all tabs with one click. This means that you’ll now get the data on Queries, Pages, Countries, Devices, Search appearances and Dates, all together. The download output is the same as explained above: a Google Sheets or Excel spreadsheet with multiple tabs, or csv files compressed in a zip file.

- Along with the performance data, you’ll have an extra tab (or csv file) called “Filters”, which shows which filters were applied when the data was exported.

Here is a sample dataset downloaded from the Performance report.
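If you download the csv option, the zip file can be read programmatically. The sketch below loads every csv file in the archive into a list of rows keyed by file name. The function name is hypothetical, and the file and column names inside a real export are not assumed here, so inspect the keys of the result before relying on specific tabs.

```python
import csv
import io
import zipfile


def load_export(zip_bytes: bytes) -> dict[str, list[dict[str, str]]]:
    """Map each csv file in an exported zip to its rows (one dict per row)."""
    tables: dict[str, list[dict[str, str]]] = {}
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as archive:
        for name in archive.namelist():
            if not name.lower().endswith(".csv"):
                continue
            with archive.open(name) as raw:
                # utf-8-sig tolerates an optional byte-order mark.
                text = io.TextIOWrapper(raw, encoding="utf-8-sig", newline="")
                tables[name] = list(csv.DictReader(text))
    return tables
```

With a downloaded file, `load_export(open("export.zip", "rb").read())` returns one entry per csv file, which can then be joined with other datasets or passed to an analysis library.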

Additional ways to use Search Console data outside the tool

Since we’re talking about exporting data, we thought we’d take the opportunity to talk about other ways you can currently use Search Console data outside the tool. You might want to do this if you have a specific use case that is important to your company, such as joining the data with another dataset, performing an advanced analysis, or visualizing the data in a different way.

There are two options, depending on the data you want and your technical level.

Search Console API

If you have a technical background, or a developer in your company can help you, you might consider using the Search Console API to view, add, or remove properties and sitemaps, and to run advanced queries for Google Search results data.

We have plenty of documentation on the subject, but here are some links that might be useful to you if you’re starting now:

- The Overview and prerequisites guide walks you through the things you should do before writing your first client application. You’ll also find more advanced guides in the sidebar of this section, for example a guide on how to query all your search data.

- The reference section provides details on query parameters, usage limits and errors returned by the API.

- The API samples page provides links to sample code for several programming languages, a great way to get up and running.
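To give a feel for what a Search Analytics query looks like, here is a minimal sketch that builds the request body for a date range. The helper name and defaults are assumptions; `startDate`, `endDate`, `dimensions`, `rowLimit`, and `startRow` are request fields of the API's search analytics query method. The commented lines show how the body might be sent with the google-api-python-client library, assuming you already have authorized credentials.

```python
from datetime import date, timedelta


def search_analytics_body(end: date, days: int = 28,
                          dimensions: tuple[str, ...] = ("query",),
                          row_limit: int = 1000, start_row: int = 0) -> dict:
    """Build a Search Analytics request body covering `days` days up to `end`."""
    start = end - timedelta(days=days - 1)  # inclusive range
    return {
        "startDate": start.isoformat(),
        "endDate": end.isoformat(),
        "dimensions": list(dimensions),
        "rowLimit": row_limit,
        # Increase startRow in steps of rowLimit to page through results.
        "startRow": start_row,
    }


# Assumed usage with google-api-python-client (not executed here):
#   from googleapiclient.discovery import build
#   service = build("webmasters", "v3", credentials=creds)
#   body = search_analytics_body(date(2020, 2, 28), dimensions=("query", "page"))
#   rows = service.searchanalytics().query(
#       siteUrl="https://example.com/", body=body).execute().get("rows", [])
```

Keeping the body builder separate from the network call makes it easy to test the date arithmetic and to reuse the same query for several properties.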

Google Data Studio

Google Data Studio is a dashboarding solution that helps you unify data from different data sources, explore it, and tell impactful data stories. The tool provides a Search Console connector to import various metrics and dimensions into your dashboard. This can be valuable if you’d like to see Search Console data side-by-side with data from other tools.

If you’d like to give it a try, you can use this template to visualize your data – click “use template” at the top right corner of the page to connect to your data. To learn more about how to use the report and which insights you might find in it, check this step-by-step guide. If you just want to play with it, here’s a report based on that template with sample data.

Let us know on Twitter if you have interesting use cases or comments about the new download capabilities, or about using Search Console data in general. And enjoy the enhanced data!

Posted by Sion Schori & Daniel Waisberg, Search Console team

How to showcase your events on Google Search

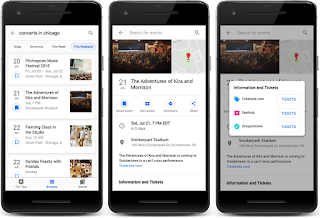

It’s officially 2020 and people are starting to make plans for the year ahead. If you produce any type of event, you can help people discover your events with the event search experience on Google.

Hosting a concert or a workshop? Event markup allows people to discover your event when they search for “concerts this weekend” or “workshops near me.” People can also discover your event when they search for venues, such as sports stadiums or a local pub. Events may surface in a given venue’s Knowledge Panel to help people find out what’s happening at that location.

Launching in new regions and languages

We recently launched the event search experience in Germany and Spain, which brings the event search experience on Google to nine countries and regions around the world. For a full list of where the event search experience works, check out the list of available languages and regions.

How to get your events on Google

There are three options to make your events eligible to appear on Google:

- If you use a third-party website to post events (for example, you post events on ticketing websites or social platforms), check to see if your event publisher is already participating in the event search experience on Google. One way to check is to search for a popular event shown on the platform and see if the event listing is shown. If your event publisher is integrated with Google, continue to post your events on the third-party website.

- If you use a CMS (for example, WordPress) and you don’t have access to your HTML, check with your CMS to see if there’s a plugin that can add structured data to your site for you. Alternatively, you can use the Data Highlighter to tell Google about your events without editing the HTML of your site.

- If you’re comfortable editing your HTML, use structured data to directly integrate with Google. You’ll need to edit the HTML of the event pages.

Follow best practices

If you’ve already implemented event structured data, we recommend that you review your structured data to make sure it meets our guidelines. In particular, you should:

- Make sure you’re including the required and recommended properties that are outlined in our developer guidelines.

- Make sure your event details are high quality, as defined by our guidelines. For example, use the description field to describe the event itself in more detail instead of repeating attributes such as title, date, location, or highlighting other website functionality.

- Use the Rich Result Test to test and preview your structured data.
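Before running the Rich Result Test, a quick local check can catch missing properties in your Event markup. The sketch below is an assumption-laden illustration: the function name is hypothetical, and the required and recommended property lists reflect one reading of the Event guidelines and may be incomplete or out of date, so treat the developer guidelines as the source of truth.

```python
# Assumed property lists; verify them against the current developer guidelines.
REQUIRED = ("name", "startDate", "location")
RECOMMENDED = ("description", "endDate", "image", "offers", "performer")


def check_event(event: dict) -> dict[str, list[str]]:
    """Report which assumed required/recommended properties are missing or empty."""
    return {
        "missing_required": [prop for prop in REQUIRED if not event.get(prop)],
        "missing_recommended": [prop for prop in RECOMMENDED if not event.get(prop)],
    }
```

For example, markup with only a `name` and `startDate` would be reported as missing `location`, along with every recommended property.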

Monitor your performance on Search

You can check how people are interacting with your event postings with Search Console:

- Use the Performance Report in Search Console to show event listing or detail view data for a given event posting in Search results. You can automatically pull these results with the Search Console API.

- Use the Rich result status report in Search Console to understand what Google could or could not read from your site, and troubleshoot rich result errors.

If you have any questions, please visit the Webmaster Central Help Forum.

Posted by Emily Fifer, Product Manager

New reports for review snippets in Search Console

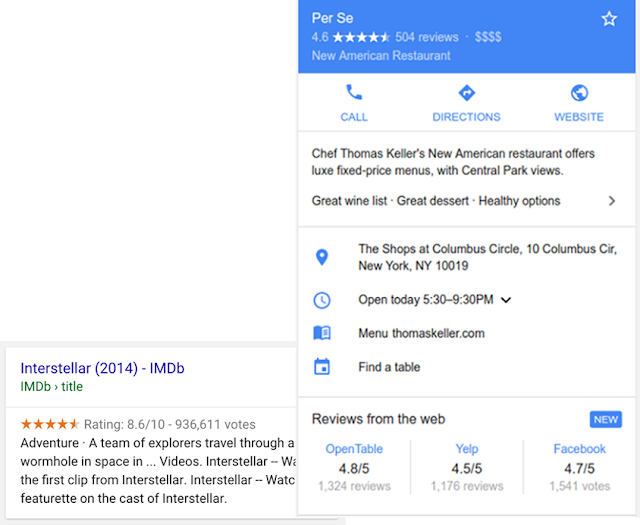

When Google finds valid reviews or ratings markup, we may show a rich result that includes stars and other summary info. This rich result can appear directly on search results or as part of a Google Knowledge panel, as shown in the screenshots below.

Today we are announcing support for review snippets in Google Search Console, including new reports to help you find any issues with your implementation and monitor how this rich result type is improving your performance. You can also use the Rich Results Test to review your existing URLs or debug your markup code before moving it to production.

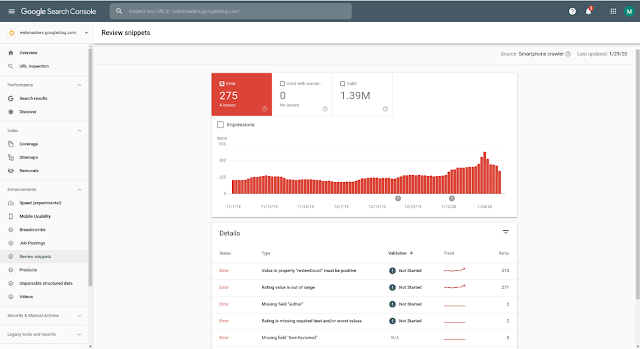

Review snippet Enhancement report

To help site owners make the most of their reviews, a new review snippet report is now available in Search Console for sites that have implemented reviews or ratings structured data. The report allows you to see errors, warnings, and valid pages for markup implemented on your site.

In addition, if you fix an issue, you can use the report to validate it, which will trigger a process where Google recrawls your affected pages. The report covers all the content types currently supported as review snippets. Learn more about the Rich result status reports.

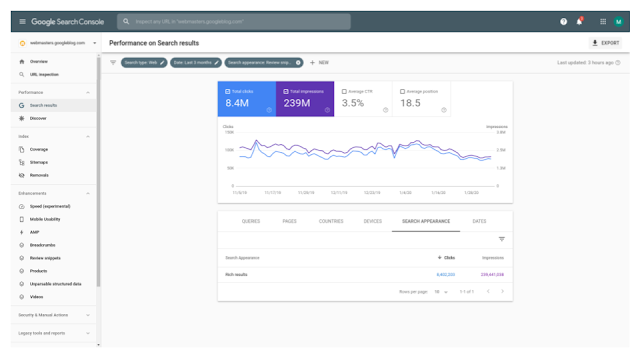

Review snippet appearance in Performance report

The Search Console Performance report now allows you to see the performance of your review or rating marked-up pages on Google Search and Discover using the new “Review snippet” search appearance filter.

This means that you can check the impressions, clicks and CTR of your review snippet pages and see how they are trending for any of the available dimensions. For example, you can filter your data to see which queries, pages, countries and devices are bringing traffic to your review snippets.

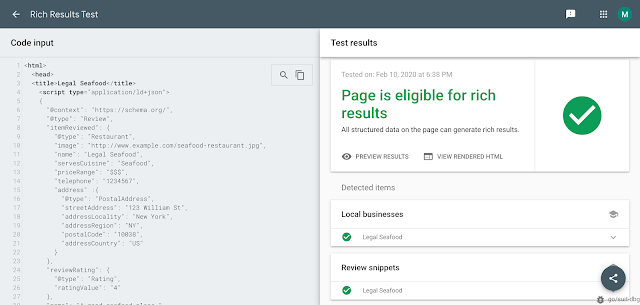

Review snippet in Rich Results Test

These new tools should make it easier to understand how your marked-up review snippet pages perform on Search and to identify and fix review issues.

If you have any questions, check out the Google Webmasters community.

Posted by Tomer Hodadi and Yuval Kurtser, Search Console engineering team