Structured Data dashboard: new markup error reports for easier debugging

Since we launched the Structured Data dashboard last year, it has quickly become one of the most popular features in Webmaster Tools. We’ve been working to expand it and make it even easier to debug issues so that you can see how Google understands the marked-up content on your site.

Starting today, you can see items with errors in the Structured Data dashboard. This new feature is a result of a collaboration with webmasters, whom we invited in June to register as early testers of markup error reporting in Webmaster Tools. We’ve incorporated their feedback to improve the functionality of the Structured Data dashboard.

An “item” here represents one top-level structured data element (nested items are not counted) tagged in the HTML code. They are grouped by data type and ordered by number of errors:

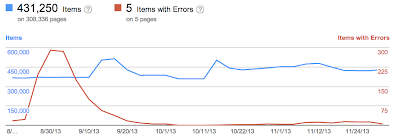
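To make the counting rule concrete, here is a minimal Python sketch of how such a summary could be computed. It is not how Webmaster Tools works internally: the item representation (a dict with hypothetical `type`, `errors` and `children` keys), the choice to attribute a nested item's errors to its top-level parent, and ordering by the number of items with errors are all assumptions made for illustration.

```python
from collections import defaultdict


def has_errors(item):
    """True if this item, or any item nested inside it, has at least one error."""
    return bool(item["errors"]) or any(has_errors(child) for child in item["children"])


def summarize(top_level_items):
    """Group top-level items by data type and order types by items with errors.

    Nested items are not counted as items of their own; their errors are
    attributed to the top-level item that contains them (an assumption).
    """
    stats = defaultdict(lambda: {"items": 0, "items_with_errors": 0})
    for item in top_level_items:
        row = stats[item["type"]]
        row["items"] += 1
        if has_errors(item):
            row["items_with_errors"] += 1
    # Most items-with-errors first; sorted() is stable, so ties keep first-seen order.
    return sorted(stats.items(), key=lambda kv: kv[1]["items_with_errors"], reverse=True)


# Hypothetical sample data: six top-level items, one of them with a nested item.
items = [
    {"type": "Event", "errors": ["missing startDate"], "children": []},
    {"type": "Event", "errors": ["missing name"], "children": []},
    {"type": "Event", "errors": [], "children": []},
    {"type": "Product", "errors": [], "children": [
        {"type": "Offer", "errors": ["missing lowprice"], "children": []},
    ]},
    {"type": "Product", "errors": [], "children": []},
    {"type": "Article", "errors": [], "children": []},
]

for data_type, row in summarize(items):
    print(data_type, row["items"], row["items_with_errors"])
# Event 3 2
# Product 2 1
# Article 1 0
```

The nested Offer is not counted as a seventh item, but its error still marks its parent Product as an item with errors.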

We’ve added a separate scale for the errors on the right side of the graph in the dashboard, so you can compare items and errors over time. This can be useful to spot connections between changes you may have made on your site and markup errors that are appearing (or disappearing!).

Our data pipelines have also been updated for more comprehensive reporting, so you may initially see fewer data points in the chronological graph.

How to debug markup implementation errors

- To investigate an issue with a specific content type, click on it and we’ll show you the markup errors we’ve found for that type. You can see all of them at once, or filter by error type using the tabs at the top:

- Check to see if the markup meets the implementation guidelines for each content type. In our example case (events markup), some of the items are missing a

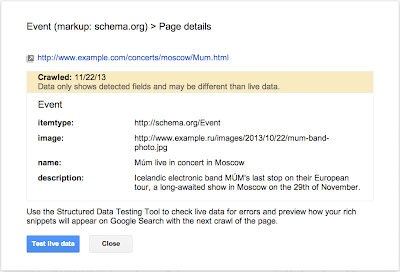

startDateornameproperty. We also surface missing properties for nested content types (e.g. a review item inside a product item) — in this case, this is thelowpriceproperty. - Click on URLs in the table to see details about what markup we’ve detected when we crawled the page last and what’s missing. You’ll can also use the “Test live data” button to test your markup in the Structured Data Testing Tool. Often when checking a bunch of URLs, you’re likely to spot a common issue that you can solve with a single change (e.g. by adjusting a setting or template in your content management system).

- Fix the issues and test the new implementation in the Structured Data Testing Tool. After the pages are recrawled and reprocessed, the changes will be reflected in the Structured Data dashboard.
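The “check against the implementation guidelines” step can be partly automated before you reach for the testing tool. The Python sketch below assumes your markup has already been extracted into JSON-LD-style dicts (an `@type` key plus properties). The `REQUIRED` table and the `AggregateOffer` type are hypothetical stand-ins that mirror the examples above, not Google’s actual requirements, so check the guidelines for each content type before relying on it.

```python
# Hypothetical required properties per type, mirroring the examples above.
# Check the implementation guidelines for the real list for each content type.
REQUIRED = {
    "Event": {"name", "startDate"},
    "Product": {"name"},
    "AggregateOffer": {"lowprice"},
}


def missing_properties(item, path=""):
    """Return (path, property) pairs for required properties that are absent.

    Walks into nested items (any dict value with an "@type" key), so missing
    properties on nested content types are reported with their parent path.
    """
    kind = item.get("@type", "")
    here = f"{path}/{kind}" if path else kind
    problems = [(here, prop) for prop in sorted(REQUIRED.get(kind, set())) if prop not in item]
    for value in item.values():
        if isinstance(value, dict) and "@type" in value:
            problems.extend(missing_properties(value, here))
    return problems


# Hypothetical extracted items.
items = [
    {"@type": "Event", "name": "Concert", "startDate": "2013-12-20"},
    {"@type": "Event", "name": "Workshop"},
    {"@type": "Product", "name": "Widget",
     "offers": {"@type": "AggregateOffer", "highprice": "30"}},
]

for item in items:
    print(missing_properties(item))
# []
# [('Event', 'startDate')]
# [('Product/AggregateOffer', 'lowprice')]
```

Running a check like this over a sample of URLs from the table makes it easier to see whether the errors share a common cause, such as one template that never outputs a particular property.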

We hope this new feature helps you manage the structured data markup on your site better. We will continue to add more error types in the coming months. Meanwhile, we look forward to your comments and questions here or in the dedicated Structured Data section of the Webmaster Help forum.

Posted by Mariya Moeva, Webmaster Trends Analyst

The Test Continues: Which Shopping Search Engine Finds The Best Price For A Blu-Ray?

It’s time for our second test of how well Google Shopping and other shopping search engines find the best prices on products. For this installment, what’s the lowest price from a major retailer for a copy of “Red 2” in the Blu-ray, DVD and digital download combo pack? Is The…

Please visit Search Engine Land for the full article.

3 Components Of Geo-Targeting Excellence For 2014

Smart use of the geo-targeting controls through Enhanced Campaigns in AdWords will prove to be the single largest opportunity for paid search marketers in 2014. Geo-targeting is nothing new. It has been a foundational element for local and regional bus…

The Google Places Dashboard And Listing Ownership

The old Google Places for Business Dashboard allowed a listing to be verified into multiple accounts. The new Places for Business Dashboard only allows one verification per listing. This is a hugely different process. It is also an impediment to many listings being moved over to the new dashboard. Given the ambiguity of ownership in […]

Localization for International Search Engine Optimization

Localization helps connect your brand to a location using the words, terms, and behaviors of an audience in a particular region. While every country is different, here are some ideas for how to approach a localization strategy in a new country.

Reports: NSA Used Google Cookies for Data Spying

Just a few days after they ganged up on the government to ask them to curb the NSA’s spying enthusiasm, tech giants are faced with another rather inconvenient truth: that the NSA is actually piggy-backing on Google cookies to gather intelligence.

SEO Discussions That Need to Die

Sometimes the SEO industry feels like one huge Groundhog Day. No matter how many times you have discussions with people on the same old topics, these issues seem to pop back into blogs/social media streams with almost regular periodicity. And every time they do, only the authors are new; the arguments and counterarguments are all the same.

Given this sad situation, I have decided to make a short list of such issues/discussions. If it inspires even one of you to refrain from starting or engaging in such a debate, then it was worth writing.

So here are SEO’s most annoying discussion topics, in no particular order:

Blackhat vs. Whitehat

This topic has been chewed over so many times, yet people still jump into it with both feet, with the righteous feeling that their argument, and no one else’s, is going to change someone’s mind. The discussion becomes particularly tiresome when people start claiming the moral high ground because they use one over the other. Let’s face it once and for all: there are no generally moral (white) and generally immoral (black) SEO tactics.

This is where people usually pull out the argument about harming clients’ sites, an argument which is usually moot. Firstly, there is a heated debate about what is even considered whitehat and what blackhat. The definitions of these two concepts are highly fluid and change over time. One of the main reasons for this fluidity is Google constantly moving the goal posts. What was once considered a purely whitehat technique, highly recommended by all SEOs (PR submissions, directories, guest posts, etc.), may as of tomorrow become “blackhat”, “immoral” and whatnot. Also, some people consider “blackhat” anything that dares not to adhere to the Google Webmaster Guidelines, as if they were carved in stone tablets by some angry deity.

Just to illustrate how absurd this concept is, imagine that some other company, say eBay, created a list of rules, one of which was that anyone who wants to sell an item on their site is prohibited from also trying to sell it on Gumtree or Craigslist. How many of you would actually reduce the number of people your product reaches because some other commercial entity is trying to prevent competition? If you are not making money off search, Google is, and vice versa.

It is not about morals, and it is not about criminal negligence toward your clients. It is about taking risks, and as long as you are truthful with your clients and yourself and aware of all the risks involved in undertaking this or that activity, no one has the right to pontificate about the “morality” of a competing marketing strategy. If it is not for you, don’t do it, but you can’t decide that the risk is too high for you while pseudo-criminalizing those who are willing to take it.

The same goes for “blackhatters” pointing and laughing at “whitehatters”. Some people do not enjoy rebuilding their business every 2 million comment spam links. That is OK. Maybe they will not climb the ranks as fast as your sites do, but maybe when they get there, they will stay there longer? These are two different and completely legitimate strategies. In fact, every ecosystem has representatives of these two strategies: the “r strategy” favors quantity over quality, while the “K strategy” invests more in a smaller number of offspring.

You don’t see elephants calling mice immoral, do you?

Rank Checking is Useless/Wrong/Misleading

This one has been going around for years and keeps rearing its ugly head every once in a while, particularly after Google forces another SaaS provider to give up part of its services for either checking rankings itself or buying ranking data from a third-party provider. Then we get all the holier-than-thou folks mounting their soap boxes and preaching fire and brimstone at SEOs who report rankings as the main or even only KPI. So firstly, again, just like with black vs. white hat: horses for courses. If you think your way of reporting to clients is the best, stick with it and preach it positively, as in “this is what I do and the clients like it”, but stop telling other people what to do!

More importantly, the vast majority of these arguments are based on a totally imaginary situation in which SEOs use rankings as their only or main KPI. In all my 12 years in SEO, I have never seen a marketer worth their salt report an “increase in rankings for 1000s of keywords”. As far back as 2002, I remember people writing client reports with a separate chapter for keywords that had been defined as optimization targets and for which the client’s site reached top rankings, but without any significant increase in traffic/conversions. Those keywords were then dropped from the marketing plan altogether.

It really isn’t a big leap to understand that ranking isn’t important if it doesn’t result in increased conversions in the end. I am not going to argue here why I do think reporting and monitoring rankings is important. The point is that if you need to make your argument against a straw man, you should probably rethink whether you have a good argument at all.

PageRank is Dead/it Doesn’t Matter

Another strawman argument. Show me a linkbuilder who today thinks that getting links based solely on toolbar PageRank is going to get them to rank, and I will show you a guy who has probably not engaged in active SEO since 2002. And there is no small amount of irony in the fact that the same people who decry the use of PageRank, the closest thing to an actual Google ranking factor they can see, freely use proprietary metrics created by other marketing companies and treat them as a perfectly reliable proxy for esoteric concepts which even Google finds hard to define, such as relevance and authority. Furthermore, all other things being equal, show me the SEO who will pass on a PR6 link for the sake of a PR3 one.

Blogging on “How Does XXX Google Update Change Your SEO” – 5 Seconds After it is Announced

Matt hasn’t even turned off his video camera to change his t-shirt for the next Webmaster Central video, and there are already dozens of blog posts discussing in the most intricate detail how the new algorithm update/penalty/infrastructure change/random-monochromatic-animal will impact everyone’s daily routine and why we should all run for the hills.

Best-case scenario, these prolific writers only know the name of the update, and they are already suggesting strategies on how to avoid being slapped or, even better, how to get out of the doghouse. This was painfully obvious in the early days of Panda, when people were writing about their “experiences” recovering from the algorithm update even before the second update was rolled out, making any testimony of recovery, in the worst case, a lie or (given a massive benefit of the doubt) a misinterpretation of ranking changes (rank checking, anyone?).

Put down your feather and your ink bottle, skippy, wait for the dust to settle and, unless you have a human source who was involved in developing or implementing the algorithm, just sit tight and observe for the first week or two. After that you can write up those observations, and it will be considered legitimate, even interesting, reporting on the new algorithm. Anything earlier than that will paint you as a clueless pageview chaser looking to ride the wave of interest with blog posts that often close with “we will probably not even know what the XXX update is all about until we give it some time to get implemented”. Captain Obvious to the rescue.

AdWords Can Help Your Organic Rankings

This one is like the mythological Hydra: you cut one head off, and two new ones spring up. This question has been answered so many times by so many people, both from within search engines and from the SEO community, that if you are addressing it today, I suspect you are actually trying to avoid talking about something else and are using this topic as a smoke screen. Yes, I am looking at you, Google Webmaster Central videos. Is that *really* the most interesting question you found on your pile? What, no one asked about <not provided>, or about social signals, or about the role authorship plays in non-personalized rankings, or whether it flows through links, or a million other questions that are much more relevant, interesting and, more importantly, still unanswered?

Infographics/Directories/Commenting/Forum Profile Links Don’t Work

This is very similar to the blackhat/whitehat argument, and it is usually supported by a statement that looks something like “what do you think that Google with hundreds of PhDs haven’t already discounted that in their algorithm?”. This is a typical “argument from incredulity” by a person who glorifies postgraduate degrees as a litmus test of intelligence and ingenuity. My claim is that these people have neither looked at the backlink profiles of many sites in many competitive niches nor do they know a lot of people pursuing or holding a PhD. They greatly underrate the former and overrate the latter.

A link is a link is a link and the only difference is between link profiles and percentages that each type of link occupies in a specific link profile. Funnily enough, the same people who claim that X type of links don’t work are the same people who will ask for link removal from totally legitimate, authoritative sources who gave them a totally organic, earned link. Go figure.

“But Matt/John/Moultano/anyone-with-a-brother-in-law-who-has-once-visited-Mountain-View said…”

Hello. Did you order “not provided will be maximum 10% of your referral data”? Or did you have “I would be surprised if there was a PR update this year”? How about “You should never use nofollow on-site links that you don’t want crawled. But it won’t hurt you. Unless something.”?

People keep thinking that people at Google sit around all day long, thinking about how they can help SEOs do their job. How can you build your business on advice given out by an entity that is actively trying to keep visitors from coming to your site? Can you imagine that happening in any other business environment? Can you imagine Nike’s marketing department going to a one-day training session at Adidas HQ to help them sell their sneakers better?

Repeat after me: THEY ARE NOT YOUR FRIENDS. Use your own head. Even better, use your own experience. Test. Believe your own eyes.

We Didn’t Need Keyword Data Anyway

This is my absolute favourite. People who, as of yesterday, were basing their reporting, link building, landing page optimization, ranking reports, conversion rate optimization and just about every other aspect of their online campaigns on referring keywords all of a sudden felt the need to tell the world how they never thought keywords were an important metric. That’s right, buster, we are so much better off flying blind, doing iteration upon iteration of derived data based on past trends, future trends, landing pages, third-party data, etc.

It is OK every once in a while to say “crap, Google has really shafted us with this one, this is seriously going to affect the way I track progress”. Nothing bad will happen if you do. You will not lose face over it. Yes, there were other metrics that were ALSO useful for different aspects of SEO, but it is not as if, when your brakes die on you while driving, you say “pfffftt stopping is for losers anyway, who wants to stop the car when you can enjoy the ride, I never really used those brakes in the past anyway. What really matters in the car is that your headlights are working”.

Does this mean we can’t do SEO anymore? Of course not. Adaptability is one of the top required traits of an SEO and we will adapt to this situation as we did to all the others in the past. But don’t bullshit yourself and everyone else that 100% <not provided> didn’t hurt you.

Responses to SEO is Dead Stories

It is crystal clear why the “SEO is dead” stories themselves deserve to die a slow and painful death. I am talking here about the hordes of SEOs who rise to the occasion every freaking time some fifth-rate journalist decides to poke the SEO industry through the cage bars, eager to convince them, nay, prove to them that SEO is not only not dying but is alive and kicking and bigger than ever. And I am not innocent of this myself; I have also dignified this idiotic topic with a response (albeit a short one), but how many times can we rise to the same occasion and repeat the same points? What original angle can you give to this story after 16 years of responding to the same old claims? And if you can’t give an original angle, how in the world are you increasing our collective knowledge by reheating and serving the same old dish that wasn’t very good the first time it was served? Don’t you have rankings to check instead?

There is No #10.

But that’s what everyone does: they write a “Top 10 ways…” article and force the examples until they get to a linkbaity number. No one wants to read a “Top 13…” or a “Top 23…” article. This needs to die too. Write what you have to say, not what you think will get the most traction. Marketing is makeup, but the face needs to be pretty before you apply it. Unless you like putting lipstick on pigs.

Branko Rihtman has been optimizing sites for search engines since 2001, both for clients and for his own web properties, in a variety of competitive niches. Over that time, Branko realized the importance of properly done research and experimentation and started publishing findings and experiments at SEO Scientist, with some additional updates at @neyne. He currently consults for a number of international clients, helping them improve their organic traffic and conversions while questioning old approaches to SEO and trying some new ones.

The Next Domain Gold Rush: What You Need to Know

Posted by Dr-Pete

In late 2012 and early 2013, companies were allowed, for the first time, to apply for new TLDs (Top-Level Domains). There was a lot of press about big companies buying swaths of TLDs – for example, Google bought .google, .docs, .youtube, and many more. The rest of us heard the price tag – a cool $185,000 – and simply wrote this off as an interesting anecdote. What you may not realize is that there’s a phase two, and it’s relevant to everyone who owns a website (below: 544 new TLDs – cloud created with Tagxedo).

Phase 2: TLDs go live

You may have assumed that these TLDs would simply be bought up and tucked away for private use by mega-corporations, Saudi princes, and Justin Bieber. The reality is that many of these TLDs are going to go live soon, and domains within them are going to be sold to the public, just like traditional TLDs (.com, .net, etc.). I talked to Steve Banfield, SVP Registrar Services at Demand Media (which owns eNom and Name.com), to get the scoop on what this process will mean for site owners.

Gold rush 2014

ICANN had more than 1,900 applications for TLDs, and of those Name.com currently lists 544 that will be available for sale in the near future. These domains cover a wide range of topics – here are just a few, to give you a flavor of what’s up for grabs:

- .app

- .attorney

- .blog

- .boston

- .flowers

- .marketing

- .porn

- .realtor

- .store

- .web

- .wedding

- .wtf

This is an unprecedented explosion in available domain names, and you can expect a gold rush mentality as companies scoop up domains to protect trademarks and chase new opportunities and as individuals register a wide variety of vanity domains. So, when do these domains go on sale, and how much will they cost? As Steve explained to me, this gets a bit tricky…

“Sunrise” & Pre-registration

Understandably, ICANN is reluctant to simply release hundreds of TLDs into the wild all at once and upset the ecosystem. As the TLDs have been granted, they’ve been gradually delegated to the global DNS and are coming online in batches. As each TLD becomes available, it has to undergo a 60-day “sunrise” period. This period allows trademark holders to register claims and potentially lock down protected words. For example, Dell may want to lock down dell.computer or Amazon.com may grab amazon.book. These domains must still be registered (and paid for), but trademark holders get first dibs across any new TLD. Trademark disputes are a separate, legal issue (and beyond the scope of this post).

Some registrars will allow pre-registration during or immediately following the sunrise period. While you can’t technically register a domain without a trademark claim during the 60-day sunrise, they’ll essentially add you to a waiting list. This gets complicated, as multiple registrars could all have people on their waiting list for the same domain, so there are no guarantees. Some registrars are also charging premium prices for pre-registration, and those premiums could carry into your renewals, so read the fine print carefully.

Facts and figures

Once sunrise and pre-registration end, general availability begins. You may be wondering – when is that, exactly? The short answer is: it’s complicated. I’ll attempt to answer the big questions, with Steve’s help:

When do the new domains go on sale?

The first group of domains began their sunrise period on November 26, 2013, and it ends on January 24, 2014. After that, additional domains will come into play in small groups, throughout the year. To find out about any particular domain/TLD, your best bet is to use a service like Name.com’s TLD watch-list, which sends status notifications about specific domains you’re interested in. Your own registrar of choice may have a similar service. The specifics of any given TLD will vary.
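As a quick sanity check on those dates, assuming the 60-day sunrise period counts both its first and last day, Python’s standard `datetime` module reproduces the January 24 end date from the November 26 start. The `sunrise_window` helper is hypothetical, and individual TLDs may follow different schedules.

```python
from datetime import date, timedelta

SUNRISE_DAYS = 60  # length of the sunrise period described above


def sunrise_window(start: date, days: int = SUNRISE_DAYS) -> tuple[date, date]:
    """Return the first and last day of a sunrise period.

    Assumes the period is counted inclusively, so the last day is
    `days - 1` days after the first.
    """
    return start, start + timedelta(days=days - 1)


first, last = sunrise_window(date(2013, 11, 26))
print(first, last)  # 2013-11-26 2014-01-24
print((last - first).days + 1)  # 60
```

If a registrar counts the period exclusively instead, the end date shifts by one day, which is one more reason to rely on a watch-list notification rather than your own arithmetic.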

How much will the new domains cost?

Unfortunately, it depends. Each TLD can be priced differently, and even within a TLD, some domains may go for a premium rate. A few TLDs will probably be auction-based and not fixed-price. Use a watch-list tool or investigate your domains of choice individually.

What kind of a land grab can we expect?

With over 500 TLDs in play over the course of months, it’s nearly impossible to say. Some domains, like .attorney, will clearly be competitive in local markets, and you can expect a gold rush mentality. Other domains, like .guru, may be popular for vanity URLs. Regional and niche domains, like .okinawa or .rodeo, are going to have a smaller audience. Then there are wildcards, like .ninja, that are really anyone’s guess.

SEO implications

Naturally, as a Moz reader, you may be wondering what weight the new TLDs will have with search engines. Will a domain like seattle.attorney have the same ranking benefit as a more traditional domain like seattleattorney.com? Google’s Matt Cutts has stated that the new TLDs won’t have an advantage over existing domains, but was unclear on whether keywords in the new domain extensions will act as a ranking signal. I strongly suspect they will play this by ear until they know how each of the new TLDs is being used. In my opinion, exact-match domains are no longer as powerful without other signals to back them up, and Google may well lower the volume on some of the new TLDs or treat them more like sub-domains in terms of ranking power. In other words, they’ll probably have some value, but don’t expect miracles.

There may be indirect SEO benefits. For example, if you own seattle.attorney, it’s more likely people will link to you with the phrase “Seattle attorney”, and since that’s now your brand/domain, it’s more likely to look natural (because it’s more likely to be natural). A well-matched name may also be more memorable, in some cases, although it may take people some time to get used to the new TLDs. To quote Steve directly:

What will matter is the memory of the end user and branding. Which is better: hilton.com or hilton.hotel, chevrolet.com or chevrolet.cars, coors.com or coors.beer? Today, it’s easy to say the .com is “better” for brand recall, but over time we’ll have to see which works better for brand marketing.

My conservative opinion is this – don’t scoop up dozens of domains just in the hopes of magically ranking. Register domains that match your business objectives or that you want to protect – either because of your own trademarks or for future use. If you hit the domain game late and have a .com that you hate (this-is-all-they-had-left.com), it might be a good time to consider your options for something more memorable.

Todd Malicoat wrote an excellent post last year on choosing an exact-match domain, and I think many of his tips are relevant to the new TLDs and any domain purchase. Ultimately, some people will use the new TLDs creatively and powerfully, and others will use them poorly. There’s opportunity here, but it’s going to take planning, brand awareness, and ultimately, smart marketing.


SearchCap: The Day In Search, December 11, 2013

Below is what happened in search today, as reported on Search Engine Land and from other places across the web. From Search Engine Land: Analyst Gives Both Google Now And Siri A “C+”. Piper Jaffray financial analyst Gene Munster performed his now annual comparison of Google Voice Search/Google Now…


Mobile Google News Updated

Google announced on the Google News blog that the mobile version of Google News is now updated for Android and iOS devices.

The new look aims at giving users the ability to scan the news quicker and with more options…

Google Maps Wants You To Create Street View Images

Google announced that they want you all to create their street view photos for them using photo spheres…

Facebook’s News Feed Gets Its Own Google Panda Algorithm

A week or so ago, Facebook announced changes to the news feed algorithm…

Google+ To Power Google +Post Ads For Display Network

Yesterday on Google+, Google announced a new form of display ad powered by Google+ and named +Post Ads.

In short, +Post ads allow a brand to take their public Google+ content, photos, videos or Hangouts…

Google’s Matt Cutts: Disavow Links Aggressively

Google’s Matt Cutts released another video; this one tries to answer how to remove a penalty for bad link building over a period of time…

Google On How To Make Better Mobile Web Sites

Maile Ohye, a Google developer lead who has worked on mobile and webmaster topics for a long time, shared on Google+ that she was fed up.

Well…

Google Panda Impacting Your Mega Site? Use Sitemaps To Recover?

A WebmasterWorld thread has discussion around how to determine which sections or categories of your web site are impacted by Google’s Panda algorithm.

Panda has not gone away and sites are still suffering from not ranking in Google after the Panda alg…

Google’s Matt Cutts Agrees, Guest Blogging Is Getting Out Of Hand

So Matt Cutts released another video yesterday and this is at least the fourth video on the topic of guest blogging.

The deal is, Google is saying guest blogging as a whole is getting spammier by the day. As good things get abused, over time, those g…

Google Penalty Notifications Sent To Anglo Rank Link Buyers

As you know, Google’s Matt Cutts publicly outed that Google went after Anglo Rank’s link network and that the penalties and notifications will go out in a few days…

Matt Cutts: Google Penalties Get More Severe for Repeat Offenders

A website that gets caught violating Google’s webmaster guidelines for the first time won’t be treated as harshly as those that have been penalized multiple times. Also: how the disavow tool can bring a site with bad backlinks back from the dead.