How to optimise your images for SEO

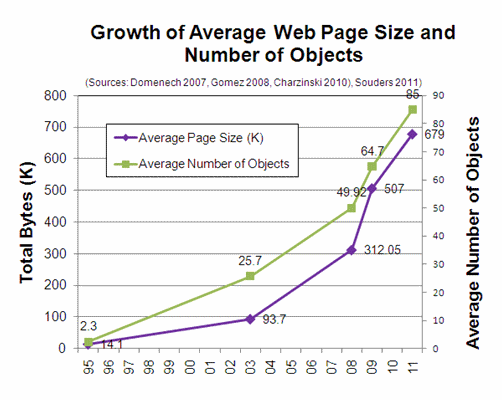

The following graph shows this rapid increase:

Some facts about images:

- 100bn images are captured and made available online each year.

- 750m camera-equipped mobile phones are sold each year.

- 100m digital cameras are sold each year.

Images are important to search. Increasingly important. Don’t just take our word for it though.

R.J. Pittman, Google Director of Product Management, Feb 2009:

Image search makes up about 5.7% of all Google Searches and 5% of all search is image related.

However, very few websites are optimised to take advantage. A recent study we conducted on the top 20 websites in a particular industry (thought to have fairly advanced SEO) highlighted that their optimisation in this area was poor at best.

So what can be done to capitalise on this free traffic?

Cookieless domain

Ideally images should be hosted under a cookieless domain. A cookie is a small piece of data sent from a website and stored in a user’s web browser while the user is browsing that website.

Cookies are usually used to maintain session state and similar data. Although they are relatively small, sending them with every image request is unnecessary and needlessly slows down the user’s experience.

This is particularly important for mobile users. For example, if your website is www.site.com then you should consider www.sitecontent.com or images.sitecontent.com or some other variation as long as the domain does not set cookies.

Of course, you need to make sure you own the domain. You may also need to register the domain with the various search engines’ webmaster tools.
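As a rough illustration of the idea, image URLs could be rewritten to point at the cookieless host when pages are rendered. This is only a sketch: the function name `to_cookieless` is hypothetical, and the default host simply reuses the images.sitecontent.com example from above.

```python
from urllib.parse import urlsplit, urlunsplit


def to_cookieless(url: str, cookieless_host: str = "images.sitecontent.com") -> str:
    """Return the same URL served from a host that never sets cookies.

    The default host is taken from the example in the text and is an
    assumption; substitute the cookieless domain you actually own.
    """
    parts = urlsplit(url)
    # Keep scheme, path, query and fragment; swap only the host.
    return urlunsplit(
        (parts.scheme, cookieless_host, parts.path, parts.query, parts.fragment)
    )


print(to_cookieless("http://www.site.com/img/sportswear-fashion-jacket.jpg"))
```

Only the host changes, so existing paths and filenames carry over unchanged.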

Image filename

Images should have meaningful filenames. Using an ID, e.g. SKU, is simply not good enough and does nothing to inform search engines about the contents of the image.

Filenames, like the following, are common examples of what should be avoided:

- 112354_main.jpg

- Method-01-2011-med.jpg

- e9b8fb52-6c02-11e0-b36e-00144feab49a.img

Instead, use descriptive filenames without overly long paths, e.g.

images.site.com/department/brand/sportswear-fashion-jacket.jpg

Don’t forget to include location information, if that is relevant too, e.g.

images.site.com/hotelname-street-london-uk.jpg
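A descriptive filename can usually be derived from data you already hold about the image. The following is a minimal sketch rather than a definitive implementation: the helper name `image_filename` and the choice to drop accents are assumptions made for illustration.

```python
import re
import unicodedata


def image_filename(*parts: str, extension: str = "jpg") -> str:
    """Build a lowercase, hyphen-separated filename from descriptive words."""
    text = " ".join(parts)
    # Strip accents so the filename stays plain ASCII (an assumption; some
    # sites may prefer to keep non-ASCII characters).
    text = unicodedata.normalize("NFKD", text).encode("ascii", "ignore").decode("ascii")
    # Collapse every run of non-alphanumeric characters into one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")
    return f"{slug}.{extension}"


print(image_filename("Sportswear", "Fashion Jacket"))
print(image_filename("Hotelname", "Street", "London", "UK"))
```

Passing the location as extra parts, as in the second call, covers the case where location information is relevant.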

If a website is translated into multiple languages, then image filenames could be too. However, this can prove a challenge for some systems and will often require images to be duplicated, unless a dynamic imaging system is used.

Ideally you want to be able to change the image filename, without having to re-upload the image. However, make sure that your image URLs cannot be tampered with by malicious people, e.g.

images.site.com/short-black-dress-1226354.jpg

Make sure that short-black-dress cannot be replaced with arbitrary text while the image is still served correctly, e.g.

images.site.com/insert-brand-damaging-text-1226354.jpg
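One way to guard against this is to store the descriptive text separately from the image ID and to check every request against it. The sketch below assumes a hypothetical lookup table (`CANONICAL_SLUGS`) and a URL pattern matching the example above; redirecting a mismatched name to the canonical one is a design choice, and returning a 404 would be equally valid.

```python
import re
from typing import Optional, Tuple

# Hypothetical catalogue: image ID -> current descriptive text. Changing an
# entry here renames the image without re-uploading the file itself.
CANONICAL_SLUGS = {
    "1226354": "short-black-dress",
}

# Matches paths such as "/short-black-dress-1226354.jpg".
IMAGE_PATH = re.compile(r"^/(?P<slug>[a-z0-9-]+)-(?P<image_id>\d+)\.jpg$")


def resolve_image_path(path: str) -> Tuple[int, Optional[str]]:
    """Return (HTTP status, canonical path) for a requested image path."""
    match = IMAGE_PATH.match(path)
    if match is None:
        return 404, None
    image_id = match.group("image_id")
    canonical_slug = CANONICAL_SLUGS.get(image_id)
    if canonical_slug is None:
        return 404, None
    canonical_path = f"/{canonical_slug}-{image_id}.jpg"
    if match.group("slug") != canonical_slug:
        # Tampered or outdated text: never serve the image under that name.
        return 301, canonical_path
    return 200, canonical_path


print(resolve_image_path("/short-black-dress-1226354.jpg"))
print(resolve_image_path("/insert-brand-damaging-text-1226354.jpg"))
```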

Sitemaps

Google has extended its Sitemap capabilities to include support for images. You are now able to provide a caption, title, geo location and license for each image.

It makes sense to use this new capability, although Google does not guarantee that it will include your images. Make sure that you are not including both thumbnail images and main product images: include your main product images rather than thumbnails.
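For illustration, an image Sitemap could be generated along the following lines. This is a sketch under stated assumptions: the function name and the shape of the input dictionaries are hypothetical, and the optional tags mirror the caption, title, geo location and license fields mentioned above. Check Google's current documentation before relying on any particular tag, as the supported set may have changed.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"


def build_image_sitemap(pages) -> str:
    """Serialise pages and their main images as Sitemap XML.

    `pages` is a hypothetical structure: a list of dicts with a "loc" key
    and an "images" list, each image being a dict with "loc" plus optional
    "caption", "geo_location", "title" and "license" keys.
    """
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("image", IMAGE_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page in pages:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page["loc"]
        for image in page["images"]:
            node = ET.SubElement(url, f"{{{IMAGE_NS}}}image")
            ET.SubElement(node, f"{{{IMAGE_NS}}}loc").text = image["loc"]
            # Optional metadata is written only when a value is supplied.
            for field in ("caption", "geo_location", "title", "license"):
                if image.get(field):
                    ET.SubElement(node, f"{{{IMAGE_NS}}}{field}").text = image[field]
    return ET.tostring(urlset, encoding="unicode")


print(build_image_sitemap([
    {
        "loc": "http://www.site.com/sportswear-fashion-jacket",
        "images": [
            {
                "loc": "http://images.site.com/department/brand/sportswear-fashion-jacket.jpg",
                "title": "Sportswear fashion jacket",
            }
        ],
    }
]))
```

Listing only the main product image for each page keeps thumbnails out of the Sitemap.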

Googlebot

Google uses a special crawler, Googlebot-Image, to crawl the web for images. To prevent it from indexing small images (e.g. search result thumbnails) instead of the large images you want indexed, you could sniff the User-Agent string and serve the crawler the large image URL.

That way Google won’t accidentally include any small images that are less valuable.
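A minimal sketch of that check follows. The function name and parameters are hypothetical, and matching on the `Googlebot-Image` token is a simplification, since User-Agent strings can be spoofed.

```python
def image_url_for(user_agent: str, thumbnail_url: str, large_url: str) -> str:
    """Pick the image URL to emit in the page for this request."""
    # Googlebot-Image identifies itself with this token in its User-Agent.
    if "googlebot-image" in user_agent.lower():
        return large_url
    return thumbnail_url


print(image_url_for(
    "Googlebot-Image/1.0",
    "http://images.site.com/thumbs/short-black-dress-1226354.jpg",
    "http://images.site.com/short-black-dress-1226354.jpg",
))
```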

Site speed

In April 2010, Google announced that it was adding site speed as a signal in its search ranking algorithms. Amazon has released research showing that every 100 ms increase in page load time decreased sales by 1%. These are both compelling reasons to improve site speed.

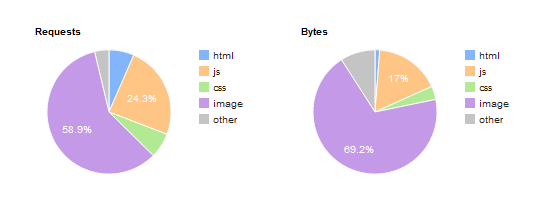

As images typically represent the vast majority of page weight, they can have a significant impact on site speed.

This example has been taken from an online shoe store and it’s by no means the worst case!

There are several techniques to mitigate this:

- File size reduction, i.e. compression.

- Intelligent caching.

- Delivery acceleration.

It is not good enough to implement one or two of these techniques. All should be implemented!

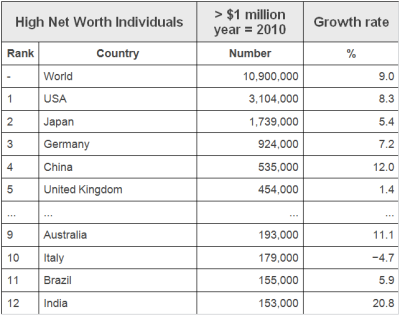

International

Websites with international customers are often providing a poor user experience because they are not optimised to cope with the distance.

The distance problem comes down to latency:

- 40 to 80 ms from Europe to UK.

- 80 to 180 ms from USA to UK.

- 250 to 300 ms from Japan to UK.

- 300 to 350 ms from Australia to UK.

- 500 to 600 ms from China to UK.

As stated above, Amazon research shows that every 100 ms increase in page load time decreases sales by 1%.

We tested the loading time of a designer shoe website from London and Sao Paulo. It took eight seconds for the site to load from London and just over 20 seconds from Sao Paulo. The situation is even worse with China.

With appropriate delivery acceleration (edge caching) in place, the site should not have taken much longer to load from Sao Paulo than from London.

If you have any doubt as to the purchasing power of these countries, see the table below. At its current growth rate, India would easily have more High Net Worth Individuals than the UK by 2018.

Endless aisle

Prior to the internet, retailers were restricted by the location and floor space of their shops. This not only restricted who they could sell to, but which products they could sell.

With the advent of the internet, these physical restrictions have largely been removed. Retailers may stock the top products in their stores, but are able to offer the long tail of products online.

Retailers are also able to offer categories of products that are complementary to their brand but that they wouldn’t ordinarily sell in their physical stores or wish to stock in their warehouses.

Increasingly people are using search engines to find products rather than visiting sites directly.

Long tail products increase your content and the likelihood that people using search engines will find your site.

Discontinued products

Products (and their images) that have been discontinued should not be removed from websites as there will likely be residual links and hence traffic to them.

Visitors should be shown the discontinued product and offered one or more of the following:

- The replacement product.

- Products from the same category.

- Products from the same brand.
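The list above could be turned into a simple selection rule. The sketch below is illustrative only: the `Product` fields, the function name and the ordering (replacement first, then same category, then same brand) are assumptions rather than anything prescribed here.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Product:
    sku: str
    name: str
    category: str
    brand: str
    discontinued: bool = False
    replacement_sku: Optional[str] = None


def alternatives_for(product: Product, catalogue: List[Product], limit: int = 3) -> List[Product]:
    """Return live products to offer alongside a discontinued product."""
    if not product.discontinued:
        return []
    live = [p for p in catalogue if not p.discontinued and p.sku != product.sku]
    # Assumed priority: replacement, then same category, then same brand.
    ranked = (
        [p for p in live if p.sku == product.replacement_sku]
        + [p for p in live if p.category == product.category]
        + [p for p in live if p.brand == product.brand]
    )
    seen = set()
    result = []
    for candidate in ranked:
        if candidate.sku not in seen:
            seen.add(candidate.sku)
            result.append(candidate)
    return result[:limit]


old = Product("A1", "Old jacket", "jackets", "Acme", discontinued=True, replacement_sku="A2")
catalogue = [
    old,
    Product("A2", "New jacket", "jackets", "Acme"),
    Product("B1", "Rain jacket", "jackets", "Other"),
    Product("C1", "Acme shoes", "shoes", "Acme"),
]
print([p.sku for p in alternatives_for(old, catalogue)])
```

The discontinued product page itself stays live, so residual links keep working while visitors are pointed towards something they can buy.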

Multichannel

Using a Dynamic Imaging solution you are able to optimise images (dimensions and/or file size) for different device models, e.g. PCs, smartphones, tablets, kiosks, ePoS, smart TVs, etc.

For example, you may serve a picture at 85% quality (i.e. compression level) for a PC, but serve it at 60% quality for smartphones. Whilst 60% may be unacceptable on a PC, smartphone users will not notice and will appreciate the quicker download.
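As a rough sketch of how the quality setting might be chosen per request, the example below uses the 85% and 60% figures from the text. The device detection is deliberately crude and is an assumption: real Dynamic Imaging solutions typically rely on a maintained device database rather than a few User-Agent tokens.

```python
# Quality levels taken from the example in the text.
QUALITY_BY_DEVICE = {"pc": 85, "smartphone": 60}


def classify_device(user_agent: str) -> str:
    """Very rough device classification from the User-Agent (an assumption)."""
    ua = user_agent.lower()
    if "iphone" in ua or "windows phone" in ua:
        return "smartphone"
    # Android phones usually include "Mobile" in the User-Agent.
    if "android" in ua and "mobile" in ua:
        return "smartphone"
    return "pc"


def jpeg_quality_for(user_agent: str) -> int:
    """Return the JPEG quality percentage to use for this request."""
    return QUALITY_BY_DEVICE[classify_device(user_agent)]


print(jpeg_quality_for("Mozilla/5.0 (iPhone; CPU iPhone OS 7_0 like Mac OS X)"))
print(jpeg_quality_for("Mozilla/5.0 (Windows NT 6.1; WOW64)"))
```

Further device classes such as tablets or kiosks could be added as extra keys in `QUALITY_BY_DEVICE` once suitable quality levels have been tested.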

Search engines such as Google are now crawling websites with different mobile user agents to see if they find different content or mobile optimised content.

Social media

The Facebook Like button is incredibly powerful. See below for an example of how it can be added.

In this example the social media buttons only appear when the user hovers their mouse over the thumbnail in the search results.

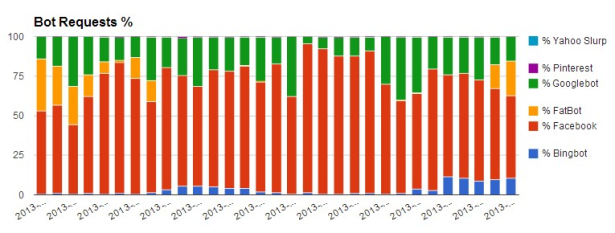

When a user clicks a Facebook Like button, Facebook downloads a copy of the image. The following graph shows, for August, the percentage of image bot requests that Facebook accounts for on a website that uses Facebook Like buttons with its product images.

As you can see it is a very high percentage!

Pinterest is a virtual pinboard which allows users to organize and share things they find on the web. Users can browse pinboards created by other people to discover new things and get inspiration from people who share their interests.

Pinterest’s Pin It Button can be easily added to your website.

As with Facebook and Pinterest, you can add a Twitter button so users can tweet about the products they like.

Google SERP images

Google increasingly displays images in its search results. For example:

When users click on an image Google displays a page such as the following:

The user’s web browser makes a request for the original image with Google set as the referrer.

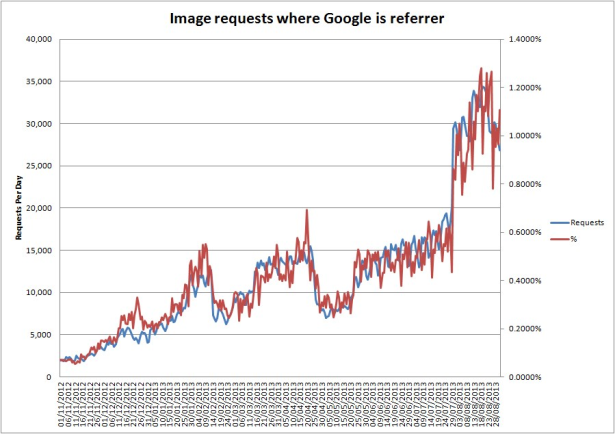

The following graph shows the number of image requests where Google is the referrer.

Over 1% of the image requests on a daily basis are now referrals from Google and this percentage is increasing!
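A figure like this could be measured from your own web server logs. The sketch below assumes logs in the common "combined" format and treats any referrer whose hostname contains a `google` label as Google, which is a simplification. The function name and the list of image extensions are hypothetical.

```python
import re
from urllib.parse import urlparse

# Request line, status, size and referrer from a combined-format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "(?P<referrer>[^"]*)"'
)
IMAGE_EXTENSIONS = (".jpg", ".jpeg", ".png", ".gif")


def google_referral_share(lines) -> float:
    """Return the fraction of image requests whose referrer is Google."""
    total = 0
    from_google = 0
    for line in lines:
        match = LOG_LINE.search(line)
        if match is None:
            continue
        path = match.group("path").split("?", 1)[0].lower()
        if not path.endswith(IMAGE_EXTENSIONS):
            continue
        total += 1
        host = urlparse(match.group("referrer")).hostname or ""
        # Simplification: www.google.com, images.google.co.uk, etc.
        if "google" in host.split("."):
            from_google += 1
    return from_google / total if total else 0.0


sample = [
    '1.2.3.4 - - [10/Aug/2013:10:00:00 +0000] "GET /a.jpg HTTP/1.1" 200 1234 "http://www.google.com/imgres" "Mozilla/5.0"',
    '1.2.3.4 - - [10/Aug/2013:10:00:01 +0000] "GET /b.jpg HTTP/1.1" 200 1234 "http://www.site.com/" "Mozilla/5.0"',
]
print(google_referral_share(sample))
```

Running this over each day's log would give the daily percentage needed to track the trend over time.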

This highlights the importance of images as part of an SEO strategy.

Science of Conversion Rate Optimization

In a previous post, Thijs made quite a fuss about how many conversion-testers do not know their business. He stated that both the execution and the interpretation of testing showed serious flaws. His major point was that the way we deal with this conversion-testing is not scientific. At all. Time to define scientific. Time to…

Science of Conversion Rate Optimization is a post by Marieke van de Rakt on Yoast – The Art & Science of Website Optimization.

When Keyword (not provided) is 100 Percent of Organic Referrals, What Should Marketers Do? – Whiteboard Tuesday

Posted by randfish

For nearly two years, marketers have been frustrated by a steadily increasing percentage of keywords (not provided). Recent changes by Google have sent those numbers soaring. The site Not Provided Count now reports an average of nearly 74% of keywords not provided, and speculation abounds that it won’t be long before 100% of keywords are masked. Without that referral data, our tasks as Internet marketers become far more difficult—but not impossible.

In this special Whiteboard Tuesday, Rand covers what marketers can do to make up for this drastic change, finding data from other sources to stay on top of their SEO efforts.

For reference, here’s a still image of today’s whiteboard!

Video Transcription

Howdy, Moz fans, and welcome to another edition of Whiteboard Friday! Today I’m going to talk about this extremely troublesome and worrisome problem that Google has expanded “keyword (not provided)” potentially to 100% of all organic referrals. This isn’t necessarily that they’ve flipped the entire switch, and everyone’s going to see it this week, but certainly over the next several months, it’s been suggested, we may receive no keyword data at all in referrals from Google. Very troubling and concerning, obviously, if you’re a web marketer.

I think it should be very troubling and concerning if you’re a web user as well, because marketers don’t use this data to do evil things or invade people’s privacy. Marketers use this data to make the web a better place. The agreement that marketers have always had—that website creators have always had—with search engines, since their inception was, “sure, we’ll let you crawl our sites, you provide us with the keyword data so that we can improve the Internet together.” I think this is Google abusing their monopolistic position in the United States. Unfortunately, I don’t really see a way out of it. I don’t think marketers can make a strong enough case politically or to consumer groups to get this removed. Maybe the EU can eventually.

But in any case, let’s deal with the reality that we’re faced with today, which is that keyword not provided may be 100% of your referrals, and so keyword data is essentially gone. We don’t know when Google sends a visit—Bing, to their credit, and to Microsoft’s credit, enduringly has kept that data accessible—but we don’t know when Google sends a visit to our sites and pages, what that person searched for. Previously, we could do some sampling—now we can’t even do that.

There are some big tasks that we use that data for, and so to start with, I want to try and identify the uses for keyword referral data, at least the very important ones as I perceive them—there are certainly many more.

Number one: finding opportunities to improve a page’s performance or its ranking. If you see that a page of yours is receiving a lot of search traffic, or that a keyword is sending a lot of search traffic (or even a little bit of search traffic), but the page is not ranking very well, you know that by improving that page’s ranking you have an opportunity to earn a lot more search traffic. That’s a very valuable thing as a marketer. You can also see if a search query is sending traffic to a page, but that page has a high bounce rate for that traffic, low pages-per-visit, low conversion rate, you know, “hey, I’m not doing a good job serving the visitor; I need to improve how the page addresses that.” That’s one of the key things we use keyword referral data for.

Secondarily: connecting rank improvement efforts—things that we do in the SEO world to move up our rankings—to the traffic growth that we receive from them. This is very important for consultants and for agencies, and for in-house SEOs as well, to show our value to our managers, and our clients—it’s really, really tough to have this data taken away.

C: Understanding how your searchers perceive your brand and your content. When we look down the list of phrases that sent us traffic, we could see things like “oh, this is how people are thinking about my brand, or thinking about this product I launched, or thinking about this content that I’ve put out.” Really challenging to do that nowadays.

And D: uncovering keyword opportunities. We could certainly see, “this is sending a small amount of traffic, this is doing some long-tail stuff, hey—let’s turn this into a broader piece of content. Let’s try and optimize for some of those keyword phrases that we’re barely ranking on.” Or, we have a page that’s not really addressing that keyword phrase that we’re ranking on. We can address that. We can improve that.

So I’m going to try and tackle some relatively simplistic ways, and I’m not going to walk through all the details you would need to do this, but I think many folks in the SEO and marketing sphere will address these over the weeks and months to come.

Starting with A. How do I find opportunities to improve a page’s ranking or its performance with users when I can’t see keyword referral data? How do I know which page people are coming to? Thankfully, we can use the connection—the intersection of a few different sources of data. Pages that are receiving search visits is a big one, and this is going to be used throughout—instead of looking at keyword-level data, we’re going to be looking at page-level data. Which pages received referral visits from Google Search? Thankfully, that’s still data that we do get, and that’ll likely stay with us, because we can always see a referral source, and we know which pages are loaded. So, even if Google Analytics were to remove that, I think a third-party analytics provider would step in.

Pages receiving search visits plus rank-tracking data can get us a little close to this, because we can essentially say, “hey, we know this page is ranking well for these five or ten keywords that we have some reasonable expectation that they have keyword search volume. They’re receiving search visits, and yet they’re not performing well, or they’re not ranking particularly well, so improving them should be able to drive up our search traffic, improving their performance with users should be able to drive up our conversion rate optimization.”

Optionally, we could also add in things like Google Webmaster Tools or AdWords data; AdWords data being used on the keyword side to fill in for, “hey, what’s the volume that a keyword is getting,” and Google Webmaster Tools data to be able to see a list of some keywords that maybe are sending us traffic. Dr. Pete wrote a good post recently about the relative accuracy of Google Webmaster Tools, and while unfortunately it’s not as good as any of the other methods, it’s still not awful, and so that data is potentially usable.

This will give us a list of pages that get search visits, or are targeting important search terms, that rank, and that have the potential to improve. So this gets us to the answer to this question. This used to be really simple to get at, now it’s more difficult, but still possible.

B. Connecting our SEO efforts to traffic growth from search. I know this is going to be tremendously hard, and this is probably one of the biggest tolls that this change is taking on SEO folks. Because as SEOs, as marketers, we’ve shown our value by saying, “look, we’re driving up search visits, some of it’s branded, some of it’s unbranded, some of it’s not provided—but you get a rough sense of this.” And you really need that percentage: “What percent of the traffic is actually you going and getting us new visitors that never would have found us, versus branded stuff that’s just sort of rising on its own.” Maybe it’s rising because of efforts that marketers are making: investments in content, and in social media, and in email and all these other wonderful things, but it’s hard to know— it’s hard to directly map that.

So here’s one of the ways. Optionally, we can use AdWords to bid on branded terms and phrases. When we do that, you might want to have a relatively broad match on your branded terms and phrases so that you can see keyword volume that is branded from impression data. That gives you a sense of, “what’s the trajectory, here?” If we’re seeing it grow, we can identify “oh, that’s not us driving a bunch of new non-branded new keyword terms and phrases; that’s our brand search increasing.” So we can sort of discount that, or apply that in our reporting effectively. If we see, on the other hand, that it’s staying flat, but that search traffic overall is going up and to the right, then we know that’s unbranded.

Optionally, if we don’t want to be bidding and spending a lot of money with Google AdWords and trying to keep our impression counts high, we can use things like Google Insights or even downloading AdWords volume data estimates month-over-month to be able to track those sorts of things.

Certainly one of the things I would recommend doing even prior to this change is tracking rankings on buckets. Buckets of head terms, versus chunky middle, versus long-tail; so phrases that are getting lots of search volume, a good amount of search volume, and very little search volume. You want to have different buckets of those, so you can see, “oh hey, my rankings are generally improving in this bucket, or that bucket.” Same with branded vs. non-branded; you want to be able to identify and track those separately. Then, compare against visits that you’re seeing to pages that are ranking for those terms. We need to look at the pages that are receiving search traffic from those different buckets.

Again, much more challenging to do these days. But, any time we see the complexity of our practice is increasing, we also have an opportunity, because it means that those of us who are savvy, sophisticated, able to track this data, are far more useful and employable and important. Those organizations that use great marketers are going to receive outsized benefits from doing so.

C: How do I understand and analyze how searchers perceive my brand? What are they searching for that’s leading them to my site? How are they searching for terms related to my brand? Again, we can bid on AdWords terms, like I talked about. You can use keyword suggestion sources like Google Suggest, Ubersuggest, certainly AdWords’s own volume data, SEMRush, etc. to see the keyword expansions related to your brand or the content that’s very closely tied to your brand. And internal site search data. You’ve got a search box up in the top-right hand corner, people are typing in stuff, and you want to see what that “XYZ” is that they’re typing in. Those can help as well, and can provide you some opportunities that lead to D.

D: How do I uncover new keyword opportunities to target? Of course, there’s the classic methodology that we’ve all employed, which is keyword research, but usually we compare that to the terms that are already sending us traffic, and we go look and say, “oh, okay, we’re doing fine for these—we don’t need to worry.” Now, we need to take keyword research tools and add some form of rank-tracking data. That could be from Google Webmaster Tools despite its mediocrity in terms of accuracy. We can use manual rank data—we can search for it ourselves—or we can use automated data.

One of the criticisms for all rank-tracking data is always, “but there’s lots of personalization and geographic localization—these kinds of things that are biasing searches—how do I see all of that?” And the answer is, well, you can’t really. Personalization is going to fluctuate things. It may be sort of included in the Google Webmaster Tools data, but as Dr. Pete showed in his post, it looks a little funky right now.

For localization, you can add the geo in the string to be able to see where you rank in different geographies if you want to track those. That’s something you’ll be able to do in Moz Analytics and probably many of the other keyword tracking tools out there, too.

Optionally—and this is expensive, and I hate to say this is Google being evil, but this is probably what Google wants you to do when they give you “(not provided)”—which is run AdWords campaigns targeting those keywords, so that you can see new expansion opportunities. Areas where, “oh hey, we bid on this, it sent impressions, it sent some traffic, it looks like it’s worthwhile, we’re not ranking for it organically,” and again, you can see that through your rank-tracking data or through pages receiving visits from search, and then targeting those terms.

So, a lot of this data, and a lot of these opportunities are retrievable—they’re just a lot harder. I will say—this is somewhat self-promotional, but I think one of Moz’s missions and obligations as a company to the search marketing world is to try and help replace, repair, and make these processes easier. So, you can guess that over the next 6-12 months that’s going to be a big part of our roadmap: trying to help you folks—and all marketers—get to this data.

For now, these methodologies can and should be helpful to you, and I expect to see lots of great discussion about other ways to go about this in the comments.

Thanks, everyone—take care.

SearchCap: The Day In Search, September 23, 2013

Below is what happened in search today, as reported on Search Engine Land and from other places across the Web. From Search Engine Land: Bing Ads Gets Extended Validation SSL Certificates & Two-Step Verification Process. Bing Ads announced today that it has tightened account security measures…

Please visit Search Engine Land for the full article.

Bing Ads Gets Extended Validation SSL Certificates & Two-Step Verification Process

Bing Ads announced today that it has tightened account security measures by adopting extended validation SSL certificates for its website, and added a two-step verification process for users accessing Bing Ads via a Microsoft account. According to the …

AOL Climbs into 2nd Place in Online Video Content Ranking with 71 Million Viewers

In August, 188.5 million Americans watched 46.7 billion online content videos, while the number of video ad views totaled 22.8 billion, according to comScore. AOL has climbed into second place with 55.9 percent more viewers than it had a year ago.

NY Attorney General’s Fake Reviews Sting Exposes Bad Client Screening Practices by SEOs

NEW YORK — Attorney General Eric T. Schneiderman today announced that 19 companies had agreed to cease their practice of writing fake online reviews for businesses and to pay more than $350,000 in penalties. “Operation Clean Turf,” a year-long undercover investigation into the reputation management industry, the manipulation of consumer-review websites, and the practice of […]

The post NY Attorney General’s Fake Reviews Sting Exposes Bad Client Screening Practices by SEOs appeared first on Local SEO Guide.

Google Acquires Unisys Patents Including Java API Patent

Selective Multiple Protocol Transport And Dynamic Format Conversion In A Multi-User Network (US Patent 5848415) A content server using an object database supports a network of multiple User clients. The database is loaded with virtual objects which con…

Fake Online Reviews Cost 19 Companies $350,000

A New York crackdown targeted companies who purchased and created fake online business reviews. A total of seven companies offering “reputation enhancement” services were caught in the year-long investigation, along with their clients.

SEO Companies Fined Over Fake Reviews

Several SEO companies have agreed to stop publishing fake online reviews, and will also pay penalties ranging from $2,500 to almost $100,000 as part of a settlement announced today with the New York attorney general’s office. The AG’s year-long investigation ultimately caught 19 companies…

New Google Bar, Logo Begins Rolling Out

The Google bar, which appears above the search results and across many of their properties and apps, has a new look. Google has also added a new app grid and updated the color palette and letter shapes of the Google logo as part of the new design.

Time to say a last goodbye to organic keyword data?

To illustrate the point, here’s our data from August, showing (not provided) as a percentage of all Google organic traffic:

And the same chart for September, so far:

And for today:

Other sites are reporting similar patterns. The Mirror’s Malcolm Coles reports that of The Mirror’s desktop traffic from search, 82.5% is encrypted today.

Koozai’s Mike Essex reports that his site’s (not provided) traffic is 93% of organic, while the (not provided) count, which tracks a number of sites, shows a big spike over the past couple of weeks, though this doesn’t include today’s data:

Why does this matter?

For us, we’ve kind of given up on making sense of organic keywords, simply because we can see so few of them.

Here are our top organic Google searches for September. As you can see, the numbers are fairly insignificant. All it tells us is that we’re doing well for ‘Bill Gates quotes’ thanks to a seven-year-old article.

Even this workaround for calculating branded search traffic will soon become very difficult, as we have little or no organic data to base it on.

Of course, search data isn’t encrypted for advertisers using Google search ads, so if you’re worried about the NSA spying on you, don’t go clicking on those ads…

Check your (not provided) traffic

These handy custom Google Analytics links from Dan Barker will help you to quickly check the state of your (not provided) traffic.

- This dashboard shows Not Provided as a percentage of Google Organic traffic in pie chart form, as used above. Click here to add to your GA profile.

- This custom report shows the same, with keyword data, as in the screenshot above. Click here to add to your profile.

Are you seeing a jump in encrypted organic search data on your site? Let us know in the comments…

Goodbye, Keyword Data: Google Moves Entirely to Secure Search

Nearly two years after making one of the biggest changes to secure search that resulted in a steady rise in “(not provided)” data, Google has made all searches encrypted using HTTPS. This means no more keyword data will be passed to site owners.

Post-PRISM, Google Confirms Quietly Moving To Make All Searches Secure, Except For Ad Clicks

In the past month, Google quietly made a change aimed at encrypting all search activity — except for clicks on ads. Google says this has been done to provide “extra protection” for searchers, and the company may be aiming to block NSA spying activity. Or possibly, it’s a move to…

North America: Internet Statistics Compendium

The North American Internet Statistics Compendium is a comprehensive collection of the most recent USA and Canadian statistics and market data publicly available on online marketing, e-commerce, the internet and related digital media.

It is part of Econsultancy’s Internet Statistics Compendium package and is updated monthly.

The report has been collated from information available to the public, which we have aggregated together in one place to help you quickly find the internet statistics you need, to help make your pitch or internal report up to date.

There are all sorts of internet statistics which you can slot into your next presentation, report or client pitch.

Areas covered in Econsultancy’s statistics documents include:

- Affiliate Marketing

- Internet Advertising

- Web Analytics

- Social Media

- Search Marketing

- Mobile

- Email Marketing

- E-commerce

- Customer Experience

- Technology Adoption (Including: Video market size and growth trends, Video On Demand/Catch Up TV, User generated video and video sharing, Audio market size and growth trends, Downloading music, Online radio, RSS, Site performance, Site speed and availability, User technology, Desktop browsers, Mobile browsers, Pop-up blockers, Operating systems, Flash penetration)

- Demographics (Including: Global reach/penetration of interactive services, Media consumption figures – internet and other media, Broadband adoption, Broadband’s effect on e-commerce, Usage patterns by location, Age and gender usage variations, What users are doing and looking at online, Instant messaging (IM), Voice over Internet Protocol (VoIP), Gaming, Podcasts)

A free sample document is available for download

Google Redirects All Traffic To HTTPS, Driving [not provided] To 100%.

My buddy Ryan Jones recently tipped me off that Google seems to now be redirecting all traffic to the HTTPS version of their site. What does this mean?

The PPC Experiment You Never Dare Run

A question that PPC account managers frequently have to deal with is, “Why are we paying for this traffic? Aren’t we going to get that traffic anyway?” It’s a fair question, even if it is completely annoying to hear for the twentieth time by the twentieth new accounting…

Inbound Marketing: How to Give Your Business an Edge

Could an Inbound Marketing Strategy be a solution for small business owners in multiple roles?

Post from Jayson DeMers on State of Digital

Choose the SMX East Pass that Fits Your Needs, Budget and Schedule – Starts Next Tuesday!

Search Engine Land’s SMX East conference kicks off October 1 in New York City. With over 50 educational sessions and keynotes, many networking activities and presentations from leading solutions providers, you’ll get the tactics and tools you need to exceed your marketing and sales goals….

UK: Internet Statistics Compendium

The UK Internet Statistics Compendium is a comprehensive collection of the most recent worldwide statistics and market data publicly available on online marketing, e-commerce, the internet and related digital media.

It is part of Econsultancy’s Interne…