Google AdSense Back To Square Arrows?

When Google introduced the arrows to the AdSense ads, they came with a colored square background button with an arrow overlaid on the square. Then a year ago…

Did The Authorship Reduction In Google Begin?

In October, Matt Cutts of Google at PubCon announced there would likely be a 15% reduction in authorship being displayed in the Google search results. Since then, I haven’t seen many – I’ve seen some – complaints about authorship going away…

Breaking up responsive design

Over the last couple of weeks I have been dealing with the fine art of CSS. Although that is not my daily business anymore – because I lead the website review team here at Yoast – I really enjoyed mastering SCSS and using that for an actual design. During this field trip, I encountered several…

This post first appeared on Yoast. Whoopity Doo!

6 Reasons Why BuzzFeed Destroys Your Content Strategy

Sure, it’s easy to disparage the content BuzzFeed spews out, but BuzzFeed is doing something that most brands completely fail to do – executing an effective content strategy. BuzzFeed’s content strategy is far, far better than yours. Here’s why.

Did Google Help or Hurt SEO in 2013?

Come, join us for a look back at some of the major SEO issues we faced heading into and during 2013, as well as what Google did for and to the SEO community. Then we’ll gaze into our crystal ball to see what’s likely to come in 2014, and why.

A Guide to all the Google AdWords PPC Settings

An in-depth guide into the various settings within Google AdWords, what they are and how to use them.

Post from Samantha Noble on State of Digital

I Am an Entity: Hacking the Knowledge Graph

Posted by Andrew_Isidoro

This post was originally in YouMoz, and was promoted to the main blog because it provides great value and interest to our community. The author’s views are entirely his or her own and may not reflect the views of Moz, Inc.

For a long time Google has algorithmically led users towards web pages based on search strings, yet over the past few years, we’ve seen many changes which are leading to a more data-driven model of semantic search.

In 2010 Google hit a milestone with its acquisition of Metaweb and its semantic database, now known as Freebase. This database helps to make up the Knowledge Graph: an archive of over 570 million of the most searched-for people, places and things (entities), including around 18 billion cross-references. It is a truly impressive demonstration of what a semantic search engine with structured data can bring to the everyday user.

What has changed?

The surge of Knowledge Graph entries picked up by Dr Pete a few weeks ago indicates a huge change in the algorithm. For some time, Google has been attempting to establish a deep associative context around entities in order to understand the query rather than just regurgitate what it believes is the closest result, but this has been focused on a very tight dataset reserved for high profile people, places and things.

It seems that has changed.

Over the past few weeks, while looking into how the Knowledge Graph pulls data from certain sources, I have made a few general observations and have been tracking what impact, if any, certain practices have on the display of information panels.

If I’m being brutally honest, this experiment was to scratch a personal “itch.” I was interested in the constructs of the Knowledge Graph over anything else, which is why I was so surprised that a few weeks ago I began to see this:

It seems that anyone wishing to find out “Andrew Isidoro’s Age” could now be greeted with not only my age but also my date of birth in an information panel. After a few well-planned boasts to my girlfriend about my newfound fame (all of which were dismissed as “slightly sad and geeky”), I began to probe further and found that this was by no means the only piece of information that Google could supply users about me.

It also displayed data such as my place of birth and my job. It could even answer natural language queries and connect me to other entities, in queries such as “Where did Andrew Isidoro go to school?”

and, somewhat creepily, “Who are Andrew Isidoro’s parents?”

Many of you may now be a little scared about your own personal privacy, but I have a confession to make. Though I am by no means a celebrity, I do have a Freebase profile. The information that I have inputted into this is now available for all to see as a part of Google’s search product.

I’ve already written about the implications of privacy so I’ll gloss over the ethics for a moment and get right into the mechanics.

How are entities born?

Disclaimer: I’m a long-time user of and contributor to Freebase, I’ve written about its potential uses in search many times and the below represents my opinion based on externally-visible interactions with Freebase and other Google products.

After taking some time to study the subject, there seems to be a structure around how entities are initiated within the Knowledge Graph:

Affinity

As anyone who works with external data will tell you, one of the most challenging tasks is identifying the levels of trust within a data-set. Google is no different here; to be able to offer a definitive answer to a query, they must be confident of its reliability.

After a few experiments with Freebase data, it seems clear that Google are pretty damn sure the string “Andrew Isidoro” is me. There are a few potential reasons for this:

- Provenance

To take a definition from W3C:

“Provenance is information about entities, activities, and people involved in producing a piece of data or thing, which can be used to form assessments about its quality, reliability or trustworthiness.”

In summary, provenance is the ‘who’. It’s about finding the original author, editor and maintainer of data; and through that information Google can begin to make judgements about their data’s credibility.

Google has been very smart with its structuring of Freebase user accounts. To log in to your account you are asked to sign in via Google, which of course gives the search giant access to your personal details and may offer a source of data provenance from a user’s Google+ profile.

Freebase Topic pages also allow us to link a Freebase user profile through the “Users Who Say They Are This Person” property. This begins to add provenance to the inputted data and, depending on the source, could add further trust.

- External structured data

Recently an area of tremendous growth in material for SEOs has been structured data. Understanding the schema.org vocabulary has become a big part of our roles within search but there is still much that isn’t being experimented with.

Once Google crawls web pages with structured markup, it can easily extract and understand structured data based on the markup tags and add it to the Knowledge Graph.

No property has been more overlooked in the last few months than the sameAs relationship. Google has long used two-way verification to authenticate web properties, and even explicitly recommends using sameAs with Freebase within its documentation; so why wouldn’t I try and link my personal webpage (complete with person and location markup) to my Freebase profile? I used a simple itemprop to exhibit the relationship on my personal blog:

<link itemprop="sameAs" href="http://www.freebase.com/m/0py84hb">

Finally, my name is by no means common; according to howmanyofme.com there are just 2 people in the U.S. named Andrew Isidoro. What’s more, I am the only person with my name in the Freebase database, which massively reduces the amount of noise when looking for an entity related to a query for my name.
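To make the sameAs idea concrete, here is a minimal Python sketch of how a crawler might pull sameAs targets out of microdata. The page fragment, the `SameAsExtractor` class and the `extract_same_as` helper are hypothetical names chosen for illustration; this is an assumption about how such extraction could work, not a description of Google's pipeline.

```python
from html.parser import HTMLParser


class SameAsExtractor(HTMLParser):
    """Collects href values of elements carrying itemprop="sameAs"."""

    def __init__(self):
        super().__init__()
        self.same_as = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        # itemprop may hold several space-separated property names.
        props = (attr_map.get("itemprop") or "").split()
        if "sameAs" in props and attr_map.get("href"):
            self.same_as.append(attr_map["href"])


# Hypothetical page fragment: Person markup pointing at a Freebase topic.
PAGE = """
<div itemscope itemtype="http://schema.org/Person">
  <span itemprop="name">Andrew Isidoro</span>
  <link itemprop="sameAs" href="http://www.freebase.com/m/0py84hb">
</div>
"""


def extract_same_as(html):
    """Return every sameAs URL found in the given HTML string."""
    parser = SameAsExtractor()
    parser.feed(html)
    parser.close()
    return parser.same_as
```

Calling `extract_same_as(PAGE)` returns a list holding the single Freebase URL, which is the link a consumer of the markup could use to tie the page to the Freebase topic.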

Data sources

Over the past few months, I have written many times about the Knowledge Graph and have had conversations with some fantastic people around how Google decides which queries to show information panels for.

Google uses a number of data sources and it seems that each panel template requires a number of separate data sources to initiate. However, I believe that it is less an information retrieval exercise and more of a verification of data.

Take my age panel example; this information is in the Freebase database yet in order to have the necessary trust in the result, Google must verify it against a secondary source. In their patent for the Knowledge Graph, they constantly make reference to multiple sources of panel data:

“Content including at least one content item obtained from a first resource and at least one second content item obtained from a second resource different than the first resource”

These resources could include any entity provided to Google’s crawlers as structured data, including code marked up with microformats, microdata or RDFa; all of which, when used to their full potential, are particularly good at making relationships between themselves and other resources.

The Knowledge Graph panels access several databases dynamically to identify content items, and it is important to understand that I have only been looking at initiating the Knowledge Graph for a person, not for any other type of panel template. As always, correlation ≠ causation; however it does seem that Freebase is a major player in a number of trusted sources that Google uses to form Knowledge Graph panels.
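The verification idea can be sketched in a few lines of Python: a value is only shown once enough independent sources agree on it. Everything here is an assumption made for illustration, including the `verified_facts` function, the `min_sources` parameter, the source names and the sample claims about a made-up “Example Person”; it is not taken from the patent.

```python
from collections import defaultdict


def verified_facts(claims, min_sources=2):
    """Return {(entity, attribute): value} for values asserted by at
    least `min_sources` distinct sources.

    `claims` is an iterable of (source, entity, attribute, value).
    If several values qualify, the one with the most sources wins.
    """
    support = defaultdict(set)
    for source, entity, attribute, value in claims:
        support[(entity, attribute, value)].add(source)

    best = {}
    for (entity, attribute, value), sources in support.items():
        key = (entity, attribute)
        count = len(sources)
        if count >= min_sources and count > best.get(key, (0, None))[0]:
            best[key] = (count, value)
    return {key: value for key, (count, value) in best.items()}


# Hypothetical claims; none of these values come from a real profile.
CLAIMS = [
    ("freebase", "Example Person", "birthplace", "Cardiff"),
    ("personal_site_markup", "Example Person", "birthplace", "Cardiff"),
    ("freebase", "Example Person", "employer", "Example Ltd"),
]
```

With the default threshold only the birthplace survives, because two sources agree on it. Lowering `min_sources` to 1 lets the single-source employer claim through as well.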

Search behaviour

As for influencing what might appear in a knowledge panel, there are a lot of different potential sources that information might come from that go beyond just what we might think of when we think of knowledge bases.

Bill Slawski has written on what may affect data within panels; most notably that Google query and click logs are likely being used to see what people are interested in when they perform searches related to an entity. Google search results might also be used to unveil aspects and attributes that might be related to an entity as well.

For example, search for “David Beckham”, and scan through the titles and descriptions for the top 100 search results, and you may see certain terms and phrases appearing frequently. It’s probably not a coincidence that his salary is shown within the Knowledge Graph panel when “David Beckham Net Worth” is the top auto suggest result for his name.
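A rough way to try this yourself is to count which terms recur across result titles and descriptions. The Python sketch below assumes you have already collected the snippets; the `frequent_terms` function, the stopword list and the three sample snippets are invented for illustration and are not real search results.

```python
import re
from collections import Counter

# Small hand-picked stopword list; an assumption, not a standard one.
STOPWORDS = {"the", "a", "and", "of", "in", "to", "is", "his", "for", "on", "with"}


def frequent_terms(snippets, entity_name, top_n=5):
    """Return the terms that appear in the most snippets, ignoring
    stopwords and the words of the entity name itself."""
    entity_tokens = set(re.findall(r"[a-z0-9]+", entity_name.lower()))
    counts = Counter()
    for text in snippets:
        tokens = re.findall(r"[a-z0-9]+", text.lower())
        # Count each term once per snippet so one long page cannot dominate.
        for token in set(tokens):
            if token not in STOPWORDS and token not in entity_tokens:
                counts[token] += 1
    return counts.most_common(top_n)


# Hypothetical titles standing in for real search results.
SNIPPETS = [
    "David Beckham net worth and salary",
    "David Beckham: career, net worth, family",
    "The football career of David Beckham",
]
```

Running `frequent_terms(SNIPPETS, "David Beckham")` on these samples surfaces terms such as “net”, “worth” and “career”, which is the kind of recurring attribute the paragraph above describes.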

Why now?

Dr Pete wrote a fantastic post a few weeks ago on “The Day the Knowledge Graph Exploded” which highlights what I am beginning to believe was a major turning point in the way Google displays data within panels.

However, where Dr Pete’s “gut feeling is that Google has bumped up the volume on the Knowledge Graph, letting KG entries appear more frequently,” I believe that there was a change in the way they determine the quality of their data: a reduction in the affinity threshold needed to display information.

For example, not only did we see an increase in the number of panels displayed but we began to see a few errors in the data:

This error can be traced back to a rogue Freebase entry added in December 2012 (almost a year ago) that sat unnoticed until this “update” put it into the public domain. This suggests that some sort of editorial control was relaxed to allow this information to show, and that Freebase can be used as a single source of data.

For person-based panels, my inclusion seems to confirm the new era of the Knowledge Graph that Dr Pete reported a few weeks ago. We can see that new “things” are being discovered as strings; then, using data, free text extraction and natural language processing tools, Google is able to aggregate, clean, normalize and structure information from Freebase and the search index, with the appropriate schema and relational graphs, to create entities.

Despite the brash headline, this post is a single experiment and should not be treated as gospel. Instead, let’s use this as a chance to generate discussion around the changes to the Knowledge Graph, for us to start thinking about our own hypotheses and begin to test them. Please leave any thoughts or comments below.

Sign up for The Moz Top 10, a semimonthly mailer updating you on the top ten hottest pieces of SEO news, tips, and rad links uncovered by the Moz team. Think of it as your exclusive digest of stuff you don’t have time to hunt down but want to read!

Facebook Likes, Shares Don’t Impact Google Search Rankings [Study]

Eric Enge conducted two studies on the impact of Facebook activity on SEO, and found no clear-cut evidence that Google uses Likes or shares for discovery, indexing, or ranking. But Google can figure out who you’re friends with and can index posts.

Google ‘Let’s Go Caroling’ Easter Egg Turns Your Smartphone Into a Holiday Karaoke Machine

Google has created a festive Easter egg for smartphone users. On your mobile browser, simply do a Google search for [let’s go caroling] and a menu will pop up allowing you to select from “Jingle Bells”, “Deck the Halls”, and other holiday favorites.

The Shape of Things to Come: Google in 2014

Posted by gfiorelli1

We can’t imagine the future without first understanding the past.

In this post, I will present what I consider the most relevant events we experienced this year in search, and will try to paint a picture of things to come by answering this question: How will Google evolve now that it has acquired Wavii, Behav.io, PostRank, and Social Grapple, along with machine learning and neural computing technology?

The past

Last year, in my “preview” post The Cassandra Memorandum, besides presenting my predictions of what the search marketing landscape would be during 2013, I presented a funny prophecy from a friend of mine: the “Balrog Update,” an algorithm that, wrapped in fire, would crawl the web, penalizing and incinerating sites which do not include the anchor text “click here” at least seven times and do not include a picture of a kitten asleep in a basket.

Thinking back, though, that hilarious preview wasn’t incorrect at all.

In the past three years, we’ve had all sorts of updates from Google: Panda, Penguin, Venice, Top-Heavy, EMD, (Not Provided) and Hummingbird (and that’s just its organic search facet).

Bing, Facebook, Twitter, and other inbound marketing outlets also had their share of meaningful updates.

For many SEOs (and not just for them), organic search especially has become a sort of Land of Mordor…

I see so often, in the Q&A, in tweets sent to me, and in requests for help popping up in my inbox, how many SEOs feel discouraged in their daily work by all these frenzied changes. For this reason, before presenting my vision of what we should expect in Search in 2014, I thought it better that we have our own war speech.

Somehow we need it:

(Clip from “The Lord of the Rings: The Return of the King” by Peter Jackson, distributed by Warner Bros)

A day may come when the courage of SEOs fails. But it is not this day.

A methodology

“I give you the light of Eärendil … May it be a light for you in dark places,

when all other lights go out.”

Even if I’m interested in large-scale correlation tests like the Moz Search Engine Ranking Factors, in reality I am convinced that the science in which we best excel is that of hindsight.

For example, when Caffeine was introduced, almost no one imagined that such an expansion of the SERPs would also mean their deterioration.

Probably not even Google had calculated the side effects of that epochal infrastructural change, and only the obvious decline in the quality of the SERPs (who remembers this post by Rand?) led to Panda, Penguin, and EMD.

But we understood only after they rolled out that Panda and Co. were necessary consequences of Caffeine (and of spammers’ greed).

And despite my thinking that every technical marketer (as SEOs and social media marketers are) should devote part of their time to conducting experiments that test their theories, actually the best science we tend to apply is the science of inference.

AuthorRank is a good example of that. Give us a patent, give us some new mark-up and new social-based user profiling, and we will create a new theory from scratch that may include some fundamentals but is not proven by the facts.

Hindsight and deduction, however, are not to blame. On the contrary; if done wisely, reading into the news (albeit avoiding paranoid theories) can help us perceive with some degree of accuracy what the future of our industry may be, and can prepare us for the changes that will come.

While we were distracted…

While we were distracted—first by the increasingly spammy nature of Google and, secondly, by the updates Google rolled out to fight those same spammy SERPs—Big G was silently working on its evolution.

Our (justified) obsession with the Google zoo made us underestimate what were actually the most relevant Google “updates:” the Knowledge Graph, Google Now, and MyAnswers.

The first—which has become a sort of new obsession for us SEOs—was telling us that Google didn’t need an explicit query for showing us relevant information, and even more importantly, that people could stay inside Google to find that information.

The second was a clear declaration of which field Google is focusing its complete interest on: mobile.

The third, MyAnswers, tells us that Personalization—or, better, über-personalization—is the present and future of Google.

MyAnswers, recently rolled out in the regional Googles, is a good example of just how much we were distracted. Tell me: How many of you still talk about SPYW? And how many of you know that its page now redirects to the MyAnswers one? Try it: www.google.com/insidesearch/features/plus/.

What about Hummingbird?

Yes, Hummingbird, the update that no SEO noticed being rolled out.

Hummingbird, as I described in my latest post here on Moz, is an infrastructural update that essentially governs how Google understands a query, applying to all the existing “ranking factors” (sigh) that draw the SERPs.

From the very few things we know, it is based on the synonym dictionaries Google was already using, but applies a concept-based analysis to them in which entities (both named and search) and “word coupling” play a very important role.

But Google is still attending primary school and has a lot to learn, for instance not confusing Spain with France when analyzing the word “tapas” (or Italy with the USA for “pizza”):

But we also know that Google has bought DNNresearch Inc. and its deep neural networks, that it had already gained great experience in machine learning with Panda, and that people like Andrew Ng moved from the Google X team to the Knowledge Team (the same team as Amit Singhal and Matt Cutts), so it is quite probable that Google will be a very disciplined student and will learn very fast.

The missing pieces of the “future” puzzle

As with any other infrastructural change, Hummingbird will lead to visible changes. Some might already be here (the turmoil in the Local Search as described by David Mihm), but the most interesting ones are still to come.

Do you want to know what they are? Then watch and listen to what Oren Etzioni of Wavii (bought by Google in April 2013) says in this video:

As well described by Bill Slawski here:

The [open information] extraction approach identifies nouns and how they might be related to each other by the verbs that create a relationship between them, and rates the quality of those relationships. A “classifier” determines how trustworthy each relationship might be, and retains only the trustworthy relationships.

These terms within these relationships (each considered a “tuple”) are stored in an inverted index that can be used to respond to queries.
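The description above can be sketched in Python as a toy pipeline: keep only the tuples that clear a confidence threshold, then store their terms in an inverted index. This is a simplified illustration under stated assumptions, not Wavii's or Google's implementation. The `build_index` and `lookup` functions, the `min_confidence` threshold (standing in for the classifier) and the confidence scores are all hypothetical.

```python
from collections import defaultdict


def build_index(extractions, min_confidence=0.8):
    """Build an inverted index: term -> set of (subject, relation, object).

    `extractions` is an iterable of (subject, relation, obj, confidence).
    The threshold is a stand-in for a classifier that retains only the
    trustworthy relationships.
    """
    index = defaultdict(set)
    for subject, relation, obj, confidence in extractions:
        if confidence < min_confidence:
            continue  # discard relationships judged untrustworthy
        triple = (subject, relation, obj)
        for part in triple:
            for term in part.lower().split():
                index[term].add(triple)
    return index


def lookup(index, query):
    """Return the tuples that contain every term of the query."""
    terms = query.lower().split()
    if not terms:
        return set()
    results = set(index.get(terms[0], set()))
    for term in terms[1:]:
        results &= index.get(term, set())
    return results


# Hypothetical extractions; the confidence scores are made up.
EXTRACTIONS = [
    ("Google", "acquired", "Wavii", 0.95),
    ("Google", "acquired", "Metaweb", 0.90),
    ("Tapas", "originated in", "France", 0.20),
]
```

Here a query such as `lookup(build_index(EXTRACTIONS), "google acquired")` returns both acquisition tuples, while the low-confidence “tapas” relationship never reaches the index.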

So, it can improve the usage of the immense Knowledge Base of Google, along with the predictive answers to queries based on context. Doesn’t all this remind you of what we already see in the SERPs?

Moreover, do you see the connection with Hummingbird and how it can link together the Knowledge Graph, Google Now, and MyAnswers; and ultimately also determine how classic organic results (and ads) will be shown to the users over a pure entity-based and semantic analysis, where links will still play a role, but not be so overly determinant?

So, if I have to preview the news that will shake our industry in 2014, I would look to the path Wavii has shown us, but also especially to the solutions that Google finds for answering the questions Etzioni himself was presenting in the video above as the challenges Wavii still needed to solve.

But another acquisition may hide the key to those questions: the team from Behav.io.

I say team because Google did not buy Behav.io as a company; it took on the entire team, which became part of the Google Now area.

What was the objective of Behav.io? It was looking at how people’s locations, networks of phone contacts, physical proximity, and movement throughout the day could help in predicting a range of behaviors.

Moreover, Behav.io was based on the smart analysis of all the data that the sensors in our smartphones could reveal about us. Not only GPS data (have you ever looked at your Location History?), but also the speakers/microphones, the proximity detection between two or more sensors, which apps we use and which we download and discard, the lighting sensors, browser history (no matter which search engines we use), the accelerometer, SMS…

You can imagine how Google could use all this information: Again, for enhancing the predictive solution of any query that could matter to us. The repercussions of this technology will be obvious for Google Now, but also for MyAnswers, which substantially is very similar to Google Now in its purposes.

The ability to understand app usage could allow Google to create an interest graph for each one of us, which could enhance the “simple” personalization offered by our web history. For instance, I usually read the news directly from the official apps of the newspapers and magazines I like, not from Google News or a browser. I also read 70% of the posts I’m interested in from my Feedly app. All that information would normally not be accessible by Google, but now that it owns the Behav.io technology, it could access it.

But the Behav.io technology could also be very important for helping Google understand what the real social graph of every single person is. The social graph is not just the connection between profiles in Facebook or Twitter or Google Plus or any other social network, nor is it the sum of all the connections of every social network. The “real life social graph” (this definition is mine) is also composed of the relations between people that we don’t have in our circles/followers/fans, people we contact only by phone, short text messages or WhatsApp.

Finally, we should remember that back in 2011 Google acquired two other interesting startups: PostRank and Social Grapple. It is quite certain that Google has already used their technology, especially for Google Plus Analytics, but I have the feeling that it (or its evolution) will be used to analyze the quality of the connections we have in our own “real life social graph,” hence helping Google distinguish who our real influencers are, and therefore to personalize our searches in any facet (predictive or not predictive).

Image credit: Niemanlab.org

Another aspect that we probably will see introduced once and for all will be sentiment analysis as a pre-rendering phase of the SERPs (something that Google could easily do with the science behind its Prediction API). Sentiment analysis is needed, and not just because it could help distinguish between documents that are appreciated by users and those that are not. If we agree that semantic search is key in Hummingbird; if we agree that semantics is not just about the triptych of subject, verb, and object; and if we agree that natural language understanding is becoming essential for Google due to Voice Search, then sentiment analysis is needed in order to understand rhetorical figures (e.g. the use of metaphors and allegories) and emotional inflections of the voice (ironic and sarcastic tones, for instance).

Maybe it is also for these reasons that Google is so interested in buying companies like Boston Dynamics? No, I am not thinking of Skynet; I am thinking of HAL 9000, which could be the ideal objective of Google in the years to come, even more so than the often-cited “Star Trek Computer.”

What about us?

Sincerely, I don’t think that our daily lives as SEOs and inbound marketers will radically change in 2014 from what they are now.

Websites will still need to be crawled, parsed, and indexed; hence technical SEO will still maintain a huge role in the future.

Maybe from a technical point of view, those who still have not embraced structured data will need to do so, even though structured data by itself is not enough to say that we are doing semantic SEO.

Updates like Panda and Penguin will still be rolled out, with Penguin possibly introduced as a layer in the Link Graph in order to automate it, as now happens with Panda.

And Matt Cutts will still announce to us that some link network has been “retired.”

What I can predict with some sort of clarity—and for the simple reason that people and not search engines definitely are our targets—is that real audience analysis and cohort analysis, not just keywords and competitor research, will become even more important as SEO tasks.

But if we already were putting people at the center of our jobs, if we already were considering SEO as Search Experience Optimization, then we won’t change the way we work that much.

We will still create digital assets that will help our sites be considered useful by the users, and we will organize our jobs in synergy with social, content, and email marketing in order to earn the status of thought leaders in our niche, and in doing so will enter into the “real life social graph” of our audience members, hence being visible in their private SERPs.

The future I painted is telling us that this is the route to follow. The only thing it is urging us to do better is integrate our internet marketing strategy with our “offline” marketing strategy, because that distinction makes no sense anymore for the users, nor does it make sense to our clients. Because marketing, not just analytics, is universal.

SearchCap: The Day In Search, December 18, 2013

Below is what happened in search today, as reported on Search Engine Land and from other places across the web. From Search Engine Land: Yandex Does What Google Can’t: Provides Bitcoin Exchange Rates How much is one US dollar in UK pounds? Google will give you a direct answer to that and many…

Please visit Search Engine Land for the full article.

Video Ad Views Have More Than Doubled Since Last Year

Americans watched 47.1 billion online content videos in November 2013, up from 40 billion in November 2012. Meanwhile, video ad views grew to 26.8 billion from 10.5 billion during the same period, according to comScore data.

What Are We Fighting For?

We are all fighting for something in our lives. Whether personally or professionally, it’s usually something we are very passionate about. Today, I would like to talk about the things that we fight for from a marketing standpoint, especially in SEO. As we all know, we’ve come a long way from hiding texts, blog commenting, […]

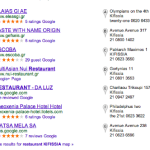

Google Local Results Are Taking Over The World

Many of my international clients have been living without Google+ Local results I guess because Google couldn’t license a reliable set of data to bounce its crawl info off of. A common discussion with international yellow pages clients is whether or not they should license their data to Google (IMO I think they should. Better […]

The post Google Local Results Are Taking Over The World appeared first on Local SEO Guide.

Yandex Does What Google Can’t: Provides Bitcoin Exchange Rates

How much is one US dollar in UK pounds? Google will give you a direct answer to that and many other currencies with the conversion tool that’s built into its search results. But when it comes to Bitcoin, Russian-based Yandex seems to have won the honor of being first to support conversion of…

Please visit Search Engine Land for the full article.

Google Launches Improved URL Removal Tool For Third-Party Content

Google has released an improved URL removal tool that the company says was specifically designed to improve the ability to remove third-party content from its search engine. John Mueller, a Google Webmaster Trends Analyst, announced the change on the Google Webmaster Central blog. Google’s…

Please visit Search Engine Land for the full article.

Google’s Matt Cutts On Search Spammers: “We Want To Break Their Spirits”

In episode number 227 of This Week in Google on the TWiT network, Google’s head of search spam Matt Cutts answered some questions from the hosts Leo Laporte and Jeff Jarvis. In one question, Matt explained that Google aims to “break the spirits” of spammers in order to encourage…

Please visit Search Engine Land for the full article.

Google Reveals Top Searches of 2013: From Nelson Mandela to Twerking

Google shares the moments that made up 2013 in its annual Zeitgeist video and interactive site that shows what mattered to people across the globe in the past year; at the top of the list were Nelson Mandela, actor Paul Walker and the iPhone 5s.

Google: We Don’t Control Content On The Web

I spotted a thread that is a common question in the Google Webmaster Help forums about removing content from showing up in Google. The response was even more interesting.

Google’s Eric Kuan…

Google Glass Won’t Replace Unit For Accidental Damage

Deb Lentz, a Google Glass Explorer, slipped on some ice and her Google Glass unit fell off and landed on the pavement. When they landed, the Glass arm snapped off and broke. The shocking part is that when she called Google to replace them…