If you are a web developer, digital marketer, or SEO specialist looking for up-to-date advice on search engine-friendly URLs, you are in the right place. This article examines the significance of search engine-friendly (SEF) URLs in enhancing a website’s ranking on Google. It offers practical advice on creating SEF URLs that boost website usability and enhance search result visibility.

Keywords in URLs are only a tiny ranking factor, but they make URLs more user-friendly. Keep URLs as short and simple as you can, because longer URLs are truncated in search result listings.

Search engine-friendly URLs are user-friendly, relevant, and easy-to-understand page names on your website and are often visible in the browser address bar.

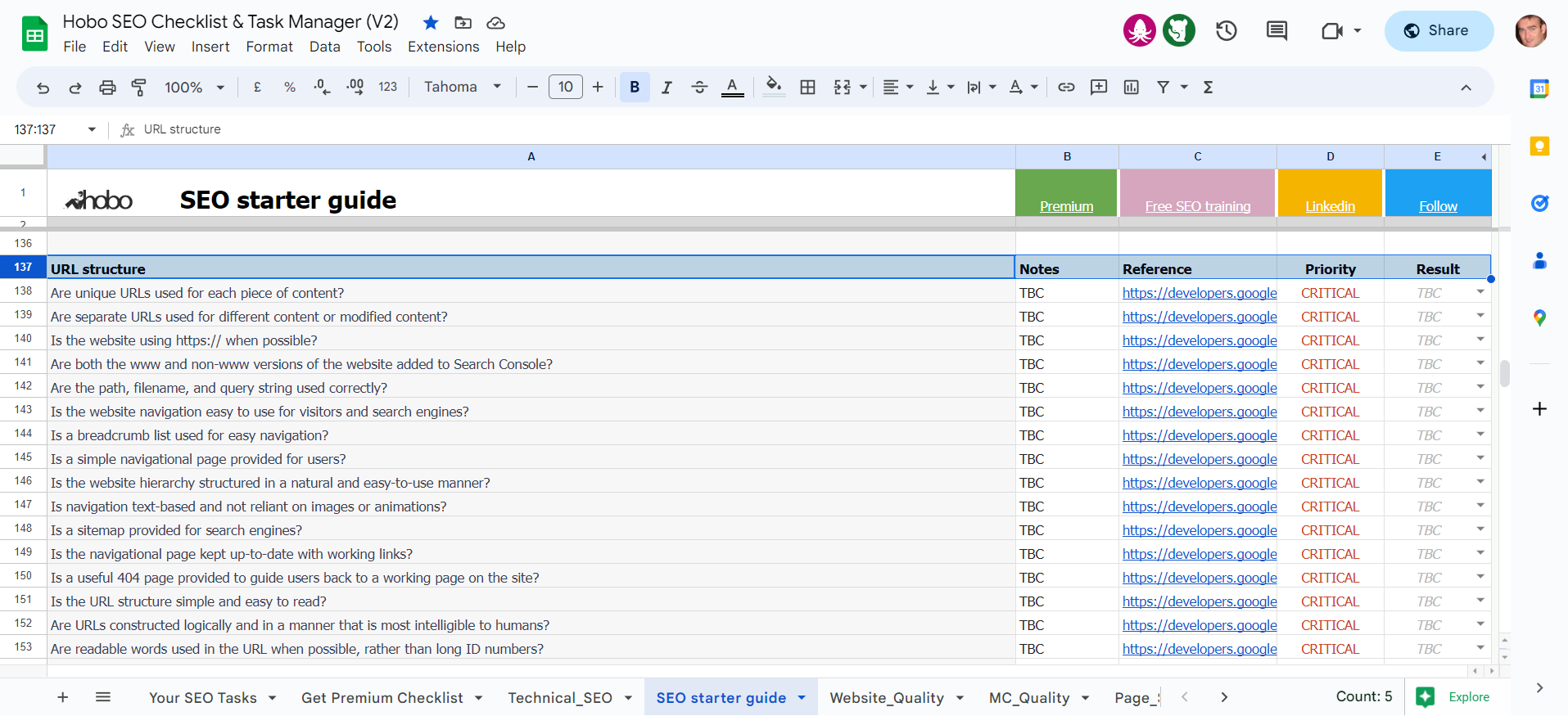

The SEO checklist is a spreadsheet that is available for you to access for free on Google Sheets, or alternatively, you can subscribe to Hobo SEO Tips and get your checklist as a Microsoft Excel spreadsheet.


1.0 Introduction: Beyond Keywords – Why Your URL is a Promise to Your User

For over two decades, the conversation around URLs in SEO has been dominated by a single question: “Should I put keywords in my URL?”

While the answer has evolved, the question itself has become a distraction from a much more fundamental truth.

In my 25 years of experience in this field, I’ve seen that the most resilient and successful websites treat their URL structure not as a minor ranking tactic, but as a core component of their brand identity and a foundational element of user trust.

While the principles in this guide have long represented best practice, the landmark events of 2024 and 2025 have shifted them from the realm of educated advice into the category of confirmed, verifiable fact.

The Google Content Warehouse API leak of May 2024, coupled with the extensive technical disclosures from the U.S. vs. Google antitrust trial, has provided unprecedented, concrete proof of the direct impact of URL architecture on Google’s core ranking systems.

This guide has been updated to reflect this new evidence-based era of SEO, connecting long-standing principles to the specific, named systems and signals that are now known to govern search rankings.

A URL is one of the first pieces of information a user, and a search engine, sees about your page. It’s a promise.

A URL like https://example.com/womens-shoes/running/nike-air-zoom-pegasus-40 makes a clear promise about the content within. It’s predictable, descriptive, and helpful. Conversely, a URL like https://example.com/cat.php?id=89B12&session=3a9e1 is opaque, unmemorable, and erodes trust before the page even loads.

This guide moves beyond the dated debate about keywords. Instead, we will explore URL architecture through a modern, “People-First” lens. We will establish a set of guiding principles that treat your URLs as permanent, trustworthy assets that serve your users, reinforce your brand, and provide clear signals to search engines.

You will learn:

- The four essential pillars of a high-performance URL structure, now reinforced with evidence from Google’s own systems.

- A practical checklist for flawless technical implementation.

- How to handle advanced scenarios like e-commerce filters and international sites.

- Why a stable URL architecture is more critical than ever in an AI-powered search ecosystem.

Let’s build a URL structure that stands the test of time.

2.0 The Four Pillars of a High-Performance URL Structure

Instead of a sprawling checklist, a truly effective URL strategy can be built upon four guiding principles. These pillars ensure that every URL you create is logical, stable, unique, and, above all, helpful.

Pillar 1: Readability & Predictability (The Human Element)

A search-engine-friendly URL is, first and foremost, a human-friendly URL. It should be immediately understandable and readable. When a user sees your URL in a search result, in a social media post, or in their browser’s address bar, it should clearly communicate the page’s topic.

Best Practices:

- Use Simple, Descriptive Words: Opt for readable words over cryptic IDs or codes. .../annual-report-2024.pdf is infinitely better than .../doc_id=78912.

- Use Hyphens to Separate Words: This is Google’s long-standing, explicit recommendation. Hyphens (-) are treated as word separators, making URLs more readable. Avoid using underscores (_), which can connect words, or spaces, which are encoded as %20 and look messy.

  - Good: /seo-content-writing/

  - Bad: /seo_content_writing/ or /seo%20content%20writing/

- Keep it Concise: While there’s no hard character limit, long URLs are often truncated in search results and are difficult for users to copy, paste, and share. Aim for brevity while maintaining descriptiveness.
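The practices above can be sketched as a small slug generator. This is a minimal illustration rather than a definitive implementation: the function name `slugify` and the `max_words` cap are assumptions made for the example, and a real site may need language-aware transliteration.

```python
import re
import unicodedata


def slugify(title: str, max_words: int = 8) -> str:
    """Turn a page title into a lowercase, hyphen-separated ASCII slug.

    `max_words` is an illustrative cap to keep slugs concise; it is an
    assumption for this sketch, not a published limit.
    """
    # Decompose accented characters, then drop anything that is not ASCII.
    ascii_text = (
        unicodedata.normalize("NFKD", title)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Every run of non-alphanumeric characters (spaces, underscores,
    # punctuation) counts as a single word boundary.
    words = re.findall(r"[a-z0-9]+", ascii_text.lower())
    return "-".join(words[:max_words])


print(slugify("SEO Content Writing"))  # seo-content-writing
print(slugify("seo_content_writing"))  # seo-content-writing
```

Splitting on anything that is not a letter or digit means underscores and spaces never reach the final URL, so the "Bad" forms above cannot be produced by accident.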

Fueling NavBoost with Predictable URLs

The DOJ vs. Google trial unequivocally confirmed that user interaction data – clicks, dwell time, and user location – serves as a primary input for ranking web pages. This is largely accomplished through a system codenamed NavBoost, which analyses user click behaviour over a rolling 13-month window to refine search results.

The readability of your URL is the first step in generating a positive signal for this powerful system.

A clear, descriptive URL sets a precise expectation in the user’s mind before they click.

This “information scent” increases the probability that the click will be a “good click”—one where the user finds exactly what they expected and does not immediately bounce back to the search results page (a “bad click”). For example, a user seeing /annual-report-2024.pdf in the search results knows precisely what content to expect.

This clarity pre-qualifies the click, making it more likely to come from a user whose intent is perfectly aligned with the page content. When the page delivers on this promise, the user’s dwell time is longer, reinforcing to NavBoost that the result was satisfactory. Therefore, a readable URL is not merely a cosmetic feature; it is a direct, tangible tool for influencing one of Google’s most important ranking systems by improving click-through quality and post-click user satisfaction.

Pillar 2: Structural Cohesion (The Architectural Element)

Your URL structure should be a logical reflection of your website’s information architecture. A well-organised site uses directories (or folders) to group related content, and your URLs should mirror this hierarchy. This not only helps search engines understand the relationship between your pages but also allows users to navigate your site intuitively.

Best Practices:

- Create a Logical Directory Structure: Group content into logical subfolders. For an electronics retailer, a structure like /laptops/apple/macbook-pro/ is intuitive. It allows a user to “hack” the URL by deleting the last segment to navigate up to the parent category /laptops/apple/. This deliberate, “hand-crafted” architectural choice is a powerful quality signal.

- Implement Breadcrumb Navigation: A breadcrumb trail (e.g., Home > Laptops > Apple > MacBook Pro) is the user-facing expression of your URL structure. It improves orientation and navigation, providing strong usability and SEO benefits. Use BreadcrumbList schema to communicate this structure explicitly to search engines.
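As a rough sketch of how a directory-based URL can drive breadcrumb markup, the function below derives schema.org BreadcrumbList JSON-LD from a URL path. The function name `breadcrumb_jsonld` and the naive label generation are assumptions for illustration; a production site would normally take display names from its CMS rather than from the slug.

```python
import json
from urllib.parse import urlsplit


def breadcrumb_jsonld(url: str, home_name: str = "Home") -> str:
    """Build schema.org BreadcrumbList JSON-LD from a URL's directory path."""
    parts = urlsplit(url)
    origin = f"{parts.scheme}://{parts.netloc}"
    segments = [s for s in parts.path.split("/") if s]

    items = [
        {"@type": "ListItem", "position": 1, "name": home_name, "item": origin + "/"}
    ]
    path = ""
    for position, segment in enumerate(segments, start=2):
        path += "/" + segment
        items.append(
            {
                "@type": "ListItem",
                "position": position,
                # Naive label derived from the slug ("macbook-pro" becomes
                # "Macbook Pro"); an assumption made for this sketch.
                "name": segment.replace("-", " ").title(),
                "item": origin + path + "/",
            }
        )

    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "BreadcrumbList",
            "itemListElement": items,
        },
        indent=2,
    )


print(breadcrumb_jsonld("https://example.com/laptops/apple/macbook-pro/"))
```

The output would be placed inside a script element of type application/ld+json. Because each level is built by appending one path segment, the markup only works when every parent directory is itself a real page, which is exactly the property a cohesive URL hierarchy provides.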

Explicitly Declaring Site Quality to Google’s Core System

The antitrust trial also revealed that Google’s ranking pipeline is a highly modular architecture where “hand-crafted” signals, deliberately engineered for transparency and control, form the backbone of the system. A central component of this architecture is a site-wide Quality Score, internally referred to as Q*. This score is a “largely static, query-independent” metric that functions as a domain-level authority signal, affecting the ranking potential of every page on a site.

A logical URL structure is a perfect example of a “hand-crafted” signal that feeds the systems responsible for calculating Q*. A hierarchy like /laptops/apple/macbook-pro/ serves as a machine-readable blueprint of your site’s information architecture. It provides a clear, unambiguous signal of a well-organised, authoritative, and expertly curated resource. In a modular system that prizes clean inputs, a coherent URL hierarchy demonstrates a level of site quality that a flat or chaotic structure cannot. This explicit declaration of your site’s quality and organisation acts as a direct, positive input for the systems that calculate your site’s foundational score, thereby influencing the ranking potential of your entire domain.

Pillar 3: Stability & Permanence (The Trust Element)

Treat your URLs as permanent identifiers for your content. The DOJ trial confirmed the direct link between stable URLs, the flow of PageRank, and a site’s overall Quality Score (Q*). Every time you change a URL, you break every inbound link pointing to it and reset its accumulated authority, at least temporarily. This is not just a temporary loss of “link juice”; it is a direct disruption of a primary input to the “largely static” site-wide score.

A stable URL structure is a signal of a trustworthy, well-maintained website. It forms a part of your “Canonical Source of Ground Truth”—the definitive, authoritative repository of facts about your brand and its content.

Changing URLs is like changing the street address of your business; it creates confusion and requires a significant effort to inform everyone of the new location. A 301 redirect is a necessary patch, but the ideal is to never create the wound in the first place.

Best Practices:

- Get it Right the First Time: Invest time in planning your URL structure during a site’s initial build or redesign.

- If You Must Change a URL, Use a 301 Redirect: A permanent (301) redirect is the correct technical mechanism to inform browsers and search engines that a page has moved permanently. This passes most of the link equity to the new URL and ensures users are not met with a 404 “Not Found” error.
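To make the 301 mechanism concrete, here is a minimal sketch of a WSGI application that answers moved paths with a permanent redirect. The `REDIRECTS` map and its paths are hypothetical; most sites would configure this in their web server or CMS rather than in application code.

```python
# Hypothetical old-path to new-path map; the paths are illustrative only.
REDIRECTS = {
    "/seo_content_writing/": "/seo-content-writing/",
}


def redirect_app(environ, start_response):
    """Minimal WSGI app: answer moved paths with a permanent (301) redirect."""
    path = environ.get("PATH_INFO", "/")
    target = REDIRECTS.get(path)
    if target is not None:
        # 301 tells browsers and crawlers that the move is permanent.
        start_response("301 Moved Permanently", [("Location", target)])
        return [b""]
    # Anything not in the map is treated as missing in this sketch.
    start_response("404 Not Found", [("Content-Type", "text/plain; charset=utf-8")])
    return [b"Not Found"]
```

For local experimentation the app can be served with the standard library, for example `wsgiref.simple_server.make_server("", 8000, redirect_app).serve_forever()`.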

Pillar 4: Canonicalisation & Uniqueness (The Technical Element)

Every unique piece of content on your site should have one—and only one—URL. When multiple URLs lead to the same or very similar content, it creates a duplicate content issue. This “splits the content’s reputation” (e.g., links, social shares) across multiple addresses, diluting their collective SEO value.

Common Causes of Duplicate URLs:

- Session IDs or Tracking Parameters: .../page?sessionid=123

- www vs. non-www and HTTP vs. HTTPS: Search engines see these as four separate websites unless you standardise one version.

- Printer-Friendly Versions: .../page and .../page?print=true

- Faceted Navigation Parameters: .../shoes?colour=red and .../shoes?size=10

The Solution:

- Use the rel="canonical" Link Element: The canonical tag is a piece of code in the <head> of your page that tells search engines which version of a URL is the “master” copy that you want to be indexed. For example, on the page .../shoes?colour=red, the canonical tag would point to https://example.com/shoes/. This consolidates all signals to your preferred URL.
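One way to generate the canonical tag for session, tracking, and print parameters is sketched below. The `IGNORED_PARAMS` set is an assumption for illustration; each site has to decide for itself which parameters never change page content.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that never change what the page shows.
IGNORED_PARAMS = {"sessionid", "print", "utm_source", "utm_medium", "utm_campaign"}


def canonical_url(url: str) -> str:
    """Strip content-neutral parameters and the fragment from a URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def canonical_link_tag(url: str) -> str:
    """Render the link element that belongs in the page's head."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}">'


print(canonical_link_tag("https://example.com/page?sessionid=123"))
# <link rel="canonical" href="https://example.com/page">
```

Parameters that do change content (a product ID, for instance) are deliberately kept, so the sketch only collapses variants that are true duplicates.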

Avoiding the clutterScore: URL Parameters and Site-Wide Penalties

The 2024 Content Warehouse leak provided the specific, punitive mechanism for why duplicate content from URL parameters is so damaging.

It is no longer just about splitting authority; it is about actively generating a negative, site-wide penalty signal. The leak revealed the existence of a site-level attribute named clutterScore, designed to penalise sites with a large number of “distracting/annoying resources”.

If you display ads, for instance, interruptive faceted navigation that generates thousands of low-value, near-duplicate pages is the perfect recipe for a high clutterScore.

Critically, the system can also “smear” this signal, identified by the attribute isSmearedSignal.

This allows Google to extrapolate a penalty from a sample of bad URLs to a larger cluster of similar pages.

This means Google doesn’t need to crawl all of your junk pages; it only needs to find a representative pattern (/category?*) to penalise the entire set.

A high clutterScore acts as a direct, negative input into the foundational siteAuthority or Q* score.

This makes canonicalisation a critical defensive SEO task to prevent a direct, algorithmic demotion that can suppress the entire domain.
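A simple audit can show where parameterised duplicates are piling up on your own site. The sketch below counts distinct query-string variants per path from a list of crawled URLs. It does not reproduce any Google signal; the function name `parameter_variants` and the idea of reviewing the highest counts first are assumptions for illustration.

```python
from collections import Counter
from urllib.parse import parse_qsl, urlsplit


def parameter_variants(urls):
    """Count, per path, how many distinct parameterised variants were crawled."""
    variants = Counter()
    seen = set()
    for url in urls:
        parts = urlsplit(url)
        if not parts.query:
            continue  # clean URLs are not variants
        # Sort the parameters so ?a=1&b=2 and ?b=2&a=1 count as one variant.
        key = (parts.path, tuple(sorted(parse_qsl(parts.query, keep_blank_values=True))))
        if key not in seen:
            seen.add(key)
            variants[parts.path] += 1
    return variants


crawled = [
    "https://example.com/category?colour=red",
    "https://example.com/category?size=10",
    "https://example.com/category",
]
for path, count in parameter_variants(crawled).most_common():
    print(path, count)
```

Paths at the top of this list are the natural first candidates for canonical tags or parameter clean-up.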

The table below summarises the newly confirmed risks associated with poor URL architecture.

3.0 Technical Implementation: A Practical Checklist for Developers & SEOs

With the four pillars as our strategic foundation, here is a practical checklist for technical implementation. Each point is accompanied by a “Why it Matters” explanation that connects the technical detail back to our core principles.

- Use HTTPS: Secure your site with an SSL certificate. https:// is a confirmed, lightweight ranking signal, but more importantly, it’s a critical trust signal for users.

  - Why it Matters: Directly supports Pillar 3 (Trust). Browsers now flag non-HTTPS sites as “Not Secure,” which actively erodes user confidence.

- Standardise Your Domain: Choose one canonical version of your domain (e.g., https://www.example.com) and use 301 redirects to forward all other versions (http://, non-www, etc.) to it. Register all versions in Google Search Console.

  - Why it Matters: This is the foundation of Pillar 4 (Canonicalisation), preventing site-wide duplication.

- Avoid Non-ASCII Characters: While modern browsers and search engines can often handle them, it’s safest to stick to standard ASCII characters in your URLs to ensure maximum compatibility across all platforms. Use UTF-8 encoding where necessary.

  - Why it Matters: Supports Pillar 1 (Readability) and Pillar 3 (Stability) by preventing encoding issues that can break links.

- Manage URL Parameters Carefully: For URLs with parameters that don’t substantially change page content (like tracking or sorting parameters), use the rel="canonical" tag to point to the clean version of the URL.

  - Why it Matters: A critical tactic for Pillar 4 (Uniqueness), especially for e-commerce and large, dynamic websites, and essential for avoiding a high clutterScore.

- Create a Useful 404 Page: When a user inevitably lands on a broken link, don’t just show them an error. A good 404 page should acknowledge the error, reflect your brand’s personality, and provide helpful navigation back to your homepage, a search bar, or popular content.

  - Why it Matters: A thoughtful 404 page is a key aspect of user experience and supports Pillar 3 (Trust) by showing that you care about the user’s journey even when something goes wrong.
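The "Standardise Your Domain" item can be sketched as a function that decides where a request should be 301-redirected. The constants `CANONICAL_HOST` and `BARE_HOST` are assumptions for the example; substitute whichever version of your domain you have chosen as canonical.

```python
from typing import Optional
from urllib.parse import urlsplit, urlunsplit

CANONICAL_SCHEME = "https"
CANONICAL_HOST = "www.example.com"  # assumed preferred host
BARE_HOST = "example.com"           # assumed non-www variant


def standardise(url: str) -> Optional[str]:
    """Return the URL to 301-redirect to, or None if no redirect is needed."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if host not in {CANONICAL_HOST, BARE_HOST}:
        return None  # not one of our hosts; leave it alone
    if parts.scheme == CANONICAL_SCHEME and host == CANONICAL_HOST:
        return None  # already the canonical version
    return urlunsplit(
        (CANONICAL_SCHEME, CANONICAL_HOST, parts.path or "/", parts.query, "")
    )


print(standardise("http://example.com/shoes?size=10"))
# https://www.example.com/shoes?size=10
```

Sending every variant directly to the final scheme and host in one step avoids redirect chains such as http non-www to https non-www to https www.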

4.0 Advanced Scenarios & Edge Cases

While the four pillars apply universally, certain types of websites face unique challenges. Here’s how to apply these principles in more complex scenarios.

4.1 E-commerce & Faceted Navigation

Faceted navigation (e.g., filtering products by colour, size, brand) is essential for user experience but can be a technical SEO nightmare. It can generate millions of URL combinations (?colour=red&size=10&brand=nike), creating a massive duplicate content and crawl budget problem that directly risks a high clutterScore.

The Strategy:

- Define a Canonical URL: The unfiltered category page (e.g., /womens-shoes/) should always be the canonical version.

- Use rel="canonical": Apply a canonical tag on all filtered variations pointing back to the main category page.

- Selective Indexing: For some highly valuable filter combinations (e.g., /womens-shoes/nike/), you may decide you want that page indexed. In this case, you would write a unique title tag, meta description, and H1 for that page and make it self-referencing with its canonical tag. Use this approach sparingly for only your most important product combinations.
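The strategy above amounts to a small decision rule, sketched here under stated assumptions: `INDEXABLE_FACETS` is a hypothetical allowlist of the few filter combinations worth indexing, and the mapping from a brand filter to /womens-shoes/nike/ is illustrative rather than a required URL design.

```python
from urllib.parse import parse_qsl, urlsplit

# Hypothetical allowlist: (category path, sorted filters) mapped to the clean
# URL that should be indexed for that combination.
INDEXABLE_FACETS = {
    ("/womens-shoes/", (("brand", "nike"),)): "/womens-shoes/nike/",
}


def facet_canonical(url: str) -> str:
    """Pick the canonical URL for a category page with optional filters."""
    parts = urlsplit(url)
    origin = f"{parts.scheme}://{parts.netloc}"
    # Sort the filters so parameter order does not create new variants.
    facets = tuple(sorted(parse_qsl(parts.query)))
    indexed_path = INDEXABLE_FACETS.get((parts.path, facets))
    # Allowlisted combinations get their own page; everything else
    # points back to the unfiltered category.
    return origin + (indexed_path or parts.path)


print(facet_canonical("https://example.com/womens-shoes/?colour=red&size=10"))
# https://example.com/womens-shoes/
print(facet_canonical("https://example.com/womens-shoes/?brand=nike"))
# https://example.com/womens-shoes/nike/
```

Keeping the allowlist explicit makes "use this approach sparingly" enforceable: a filter combination is only indexable once someone has deliberately added it.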

4.2 International & Multilingual Sites

When targeting multiple countries or languages, your URL structure is the primary mechanism for communicating this to search engines and users. You have three main options:

- Country-Code Top-Level Domains (ccTLDs): (e.g., example.de, example.fr) This is the strongest signal for geotargeting but is the most expensive and complex to manage.

- Subdirectories: (e.g., example.com/de/, example.com/fr/) This is the most common and recommended approach for most businesses. It’s easy to set up and consolidates domain authority to a single root domain.

- Subdomains: (e.g., de.example.com, fr.example.com) A valid option, but it can sometimes be treated as a separate entity by search engines, potentially segmenting your authority.

Recommendation: For most businesses, the subdirectory approach (/de/, /fr/) offers the best balance of strong geotargeting signals and ease of management.
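A subdirectory scheme is easy to generate consistently. The sketch below builds the localised URLs for one page; the `LOCALES` tuple and the choice to leave the default language without a prefix are assumptions made for the example.

```python
LOCALES = ("de", "fr")  # assumed set of supported locale subdirectories


def localised_urls(origin: str, path: str, locales=LOCALES) -> dict:
    """Map each locale to the subdirectory URL for the same page."""
    base = origin.rstrip("/")
    clean_path = "/" + path.lstrip("/")
    # The default-language page lives at the root, without a prefix.
    urls = {"default": base + clean_path}
    for locale in locales:
        urls[locale] = f"{base}/{locale}{clean_path}"
    return urls


for locale, url in localised_urls("https://example.com", "/womens-shoes/").items():
    print(locale, url)
```

Because every locale shares the same path after its prefix, users and crawlers can predict the equivalent page in another language.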

4.3 Migrations & Redesigns

The single most critical task during a website migration or redesign is preserving link equity through a meticulous URL mapping and redirection plan.

Mini-Guide:

- Crawl Your Old Site: Before you do anything, get a complete list of all URLs on your existing site.

- Map Old URLs to New URLs: In a spreadsheet, create a one-to-one map for every old URL to its corresponding new URL.

- Implement 301 Redirects: Work with your developers to implement permanent 301 redirects for every URL in your map.

- Update Internal Links: Ensure all internal links on the new site use the new URL structure.

- Monitor 404 Errors: After launch, use Google Search Console to closely monitor for any spike in 404 errors and fix them immediately.
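Two checks from the mini-guide lend themselves to a quick script: making sure every old URL redirects straight to its final destination, and finding old URLs that were never mapped. This is a sketch under assumptions: the function names are hypothetical, and the mapping is taken to be a plain dictionary exported from your spreadsheet.

```python
def flatten_redirects(mapping: dict) -> dict:
    """Point every old URL directly at its final destination.

    Raises ValueError if the map contains a redirect loop.
    """
    flat = {}
    for old in mapping:
        seen = {old}
        target = mapping[old]
        # Follow the chain until the target is no longer itself redirected.
        while target in mapping:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = mapping[target]
        flat[old] = target
    return flat


def unmapped_urls(old_urls, mapping, new_urls):
    """List crawled old URLs that have no redirect and no longer exist."""
    live = set(new_urls)
    return sorted(u for u in old_urls if u not in mapping and u not in live)


print(flatten_redirects({"/a": "/b", "/b": "/c"}))
# {'/a': '/c', '/b': '/c'}
```

Anything returned by `unmapped_urls` would become a 404 after launch, so it is worth resolving that list before the migration rather than waiting for Search Console to report it.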

5.0 The Future: URLs in an AI-Powered Search Ecosystem

The rise of generative AI in search and large language models (LLMs) is changing how information is surfaced. While the visual prominence of a URL might diminish in some conversational interfaces, the importance of a stable, descriptive, and logical URL architecture is actually increasing.

Here’s why:

- URLs as Disambiguation Factoids: An AI’s understanding of the world is built on the data it’s trained on. The trial’s confirmation of Google’s reliance on “hand-crafted” systems validates the strategy of using URLs as permanent, descriptive factoids for machine consumption. A clear URL like /about/ceo/jane-doe is a powerful, structured “Disambiguation Factoid” that helps an AI confidently connect a piece of content to a specific entity (Jane Doe, the CEO). It provides context that an opaque URL lacks, helping to prevent AI “hallucinations” and ensuring your content is correctly represented in the emerging “Synthetic Content Data Layer”.

- URLs as a Canonical Source: In a world of synthetic and re-packaged information, having a stable, permanent URL for your original content is more critical than ever. Your website, and its permanent URLs, act as the Canonical Source of Ground Truth. It’s the verifiable origin point that both users and AI systems can trust. When an AI cites its sources, a clean, trustworthy URL is a far stronger signal than a temporary or parameterised one.

- Human Trust Remains Paramount: Regardless of the interface, humans will always be part of the equation. A user who is presented with a source link from an AI chatbot is more likely to click on and trust a URL that is readable and comes from a recognisable domain. The principles of human-computer interaction don’t disappear; they become even more important as a foundation of trust.

Your URL structure is no longer just for crawlers and SERPs. It is a fundamental part of how you manage your brand’s identity in an increasingly automated information landscape.

6.0 Conclusion: Your URL is Your Legacy

We have moved far beyond the simple question of keywords. A modern, effective URL architecture is a strategic asset that operates at the intersection of user experience, technical SEO, and brand identity. The recent disclosures from within Google have elevated these concepts from best practices to core strategic imperatives.

By building your site on the four pillars – Readability, Cohesion, Stability, and Uniqueness – you create a structure that is resilient, trustworthy, and helpful.

You send clear, “hand-crafted” signals to both your human audience and the search engines that your website is a well-organised, authoritative resource. These signals are now known to directly influence foundational ranking systems like NavBoost and the calculation of your site’s overall Quality Score (Q*).

In the end, every URL you publish becomes a permanent part of the web. It’s a small piece of your brand’s legacy. By treating it with the strategic importance it deserves, you build a stronger foundation for all of your digital marketing efforts, today and into the AI-powered future.

Keywords? Yes, put your main keywords in the URL. Be hyper-relevant, and keep the URL as short as possible.
