There’s a conversation happening in boardrooms, courtrooms, and congressional hearings right now that every AI company is trying very hard to avoid having in public: where does your training data come from, and do you have the right to use it?

Many AI companies answer this question with lawyers.

I have decided to answer it with architecture.

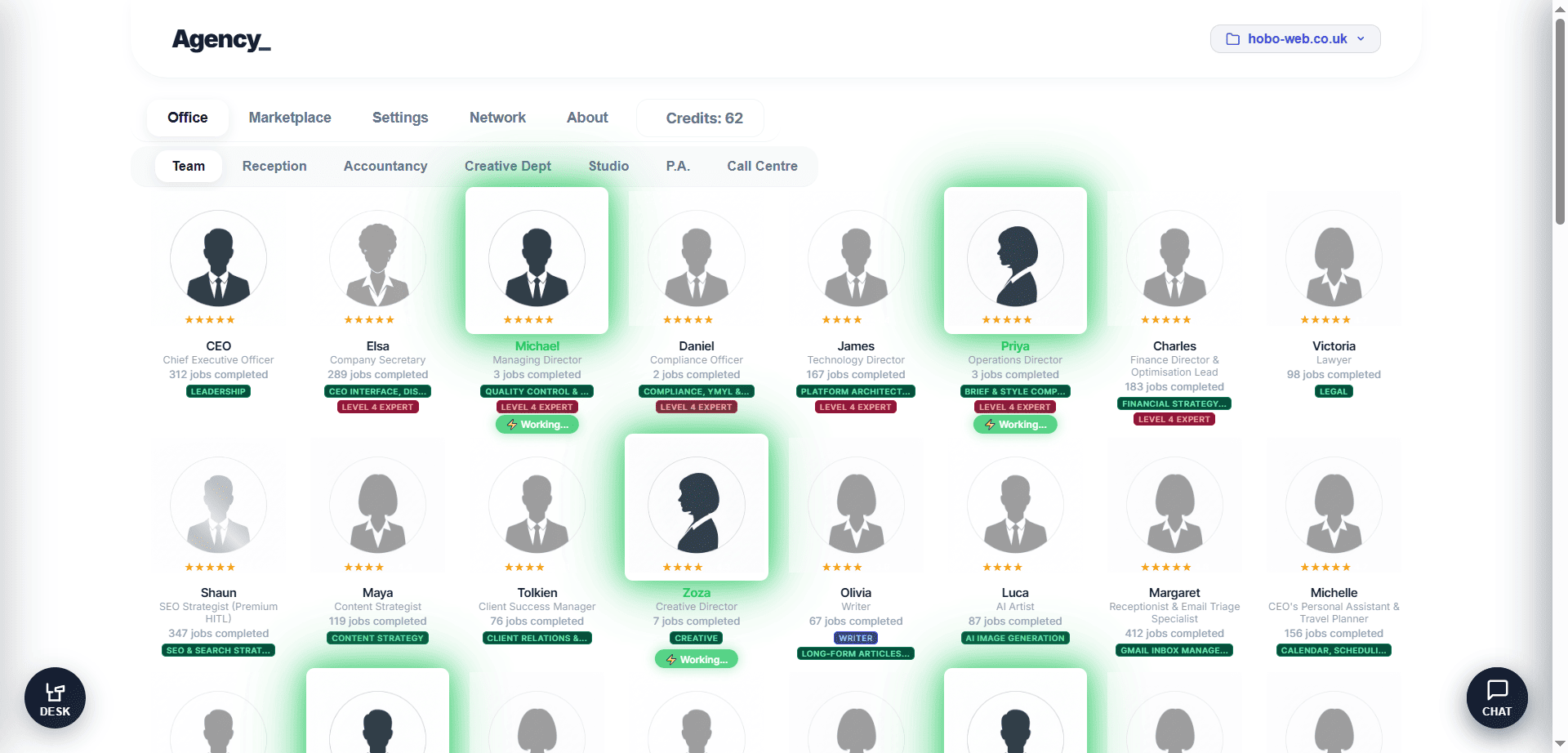

Agency is an 81-employee AI orchestration platform for HITL Marketing – an Agentic Operating System for the new era.

Agency researches, writes, audits, fact-checks, and publishes content at scale, with a HITL loop layer. In fact, Agency provided the draft for this article.

The aim of the game is augmentation, not replacement, for the HITL.

Agency touches the open web.

It reads public pages. It extracts information.

And because it does that, Agency has the responsibility to do it correctly – not just ethically, but structurally, at the code level, in ways that are public, auditable, provable, and defensible.

This week, before launch, I shipped two changes that represent exactly how seriously I take this.

These changes took the framework I use back a couple of steps, in terms of architecture, but these are lines real people seem to want.

Anecdotally, I know this as a real person.

Feature 1: Agency is Not Lying About Who It Is

Every web scraper has a User-Agent string – a piece of text it sends to websites saying, “Hello, this is who I am.” Most scrapers lie.

They send a string that pretends to be Google Chrome on a Windows desktop, because websites are less likely to block what they think is a real human visitor.

We were doing this too. It’s useful if you have permissions to do that from the website owner (like an SEO audit).

But its unethical if what you are doing is rewriting other folks’ work.

Our custom research engine – the system that discovers and analyses content so Agency writers can fact-check statistically optimised drafts – was sending a spoofed Chrome User-Agent to Yahoo Search for URL discovery.

As a hacker of sorts, it was perfectly natural for me to just hack my way to a great first product.

But the thought of putting such a thing in other folks’ hands, for a fee, has given me pause for thought.

As of this week, it sends this instead:

Agency/1.0 (+https://thisisagency.ai; bot@thisisagency.ai)That’s its real name.

The real website.

The real contact email.

Every web server on the planet can see exactly who is knocking on its door.

Does this mean some websites will block us? Yes. Yahoo Search, to give you an example, almost certainly will. I built a fallback for everything – Gemini search grounding, which queries Google’s own API with legitimate API keys. The URL discovery still works. I just won’t lie to get it.

Why does this matter legally? The landmark hiQ Labs v. LinkedIn ruling – the most important legal precedent for web scraping in the United States – turned on the distinction between accessing public data as yourself versus circumventing access controls while pretending to be someone else. hiQ won because they accessed public information openly, without pretending to be human users.

“Ultimately, the Ninth Circuit’s affirmation of the district court’s grant of the preliminary injunction prohibited LinkedIn from denying hiQ access to publicly available data on public LinkedIn users’ profiles.” Wikipedia, 2026

The moment you spoof a User-Agent, you are impersonating a browser to bypass bot detection.

That is exactly the behaviour that shifts a court’s sympathy against you.

We removed it from research practices. Completely.

Not behind a feature flag.

Not configurable.

Gone from the codebase.

I had completely cracked these citations in the beta product of Agency, so I know the quality I can achieve, but I wasnt happy with one little part of the code that didn’t meet these requirments on this page – so I deleted it, thinking – I will come back to this little part and come up with another, better solution that is as equally good but doesnt steal other peoples content.

Currently, this change has introduced some less impressive linking practices into the system, which I need to iron out before launch. But I’m happier knowing I’m on the right side of this.

I figure it is best to do it correctly from the very get-go.

Ironically, Agency only visits websites to link to them (checking a fact). So I need to see how that actually fits in with laws, etc, because it is actually a benefit to the website publishing the fact.

Thats also why Agency has Google’s Helpful Content guidelines baked in. Agency (and also the team at Searchable.com, with whom I advised on their launch in December 2025) is aiming for the highest level of quality. There are lower benchmarks I could have chosen for both these sites to aim for.

This is why E-E-A-T is important as a concept (in the way Maslow’s hierarchy of needs is to self-actualisation). It’s not technically relevant to do technical seo though.

It’s what can separate SEO from spam, and even illegality, with the caveat that you, dear reader, know I am an SEO (search engine optimiser). I am not a lawyer.

Feature 2: We Respect robots.txt – Before We Ever Touch a Page

robots.txt is the web’s oldest social contract. Since 1994, it has been the standard mechanism for website owners to say: “Automated agents, you are not welcome to access this part of my site.”

Most AI companies ignore it.

Some ignore it quietly. Others ignore it loudly, arguing that robots.txt is a “suggestion” with no legal weight.

We disagree.

And as of this week, Agency enforces robots.txt compliance at the code level.

We built a dedicated robots-checker module that:

- Fetches and parses robots.txt for every domain before any scraping attempt

- Caches results per domain to avoid hammering the same site twice

- Checks our specific User-Agent against Disallow and Allow rules, with wildcard fallback

- Fails open — if a site doesn’t have a robots.txt, we assume no restrictions (standard practice)

- Blocks before contact — the check runs before any HTTP request to the page itself, not after

If a website says “bots, stay out,” we stay out. Every time.

No exceptions. No overrides.

No, “but the content was really good.”

Here is the actual code path:

scrapePage(url)

→ isScrapingAllowed(url) // Check robots.txt first

→ fetchRobotsTxt(domain) // Fetch and cache rules

→ match against Disallow/Allow rules

→ if blocked: log " Respecting robots.txt" → return null

→ if allowed: proceed with axios/Puppeteer scrapingA page that is disallowed by robots.txt will never be fetched, never be parsed, never be read by our AI agents, unless this is through API (the legal route).

The connection is never made.

Why does this matter? Because the growing body of AI copyright litigation – The New York Times v. OpenAI, Getty Images v. Stability AI, Doe v. GitHub – consistently examines whether the defendant made a good-faith effort to respect content owners’ expressed wishes.

Ignoring robots.txt is Exhibit A in a bad-faith argument.

Respecting it is evidence of diligence.

So again, now this is baked in.

And naturally, I will be doing a deep dive on these copyright laws in future to ensure this is the case with Thisisagency.ai (formerly Codename: Agency).

The Deeper Architecture: Why Agency Is Different

These two changes aren’t isolated fixes.

They sit within a broader legal defence architecture that is woven into the fabric of how Agency processes information. I have written about the actual power of Agency and agentic software previously. Agentic software is on the verge of becoming the new paradigm.

We Don’t Copy – We Transform

When Agency produces an article, it doesn’t paraphrase a source.

It runs a multi-agent pipeline:

- Robert (Deep Researcher) discovers and researches the top-ranking pages for a query

- Our protected IP extracts only structural intelligence from crawled pages. Not paragraphs. Not sentences. Statistical blueprints.

- An AI Junior Writer produces an initial draft from the structural blueprint

- An AI Senior Writer adds fresh data from recent news

- Scarlett (AI Editorial Manager) reviews for quality

- Zoza (AI Creative Director) applies the final creative direction

- Priya (AI Director) validates compliance

- Michael (AI Managing Director) scores it.

- David or Daniel gives final approval as AI compliance monitors, which is then passed to the HITL (Human-in-the-loop) for approval.

Every step produces a separate, time-stamped case note in the filing cabinet. The entire history of the transformation is preserved, viewable, and auditable.

If someone claims our output is derivative of their copyrighted article, we can produce the full execution graph showing exactly how many independent agents, each with their own proprietary rubric, transformed raw structural data research into a completely original piece of work.

That’s not a legal theory.

That’s an engineering fact, provable from our database.

FYI, I think I see Google doing this sort of deconstruction, too, improving AI Overviews, for instance.

We Extract Facts, Not Expression

Copyright protects expression, not facts. You can copyright the way you describe the population of London. You cannot copyright the number itself.

Agency’s bespoke system is designed around this distinction. When it analyses a page, it immediately strips all styling, formatting, navigation, and advertisements. What remains is:

- Title — a fact (what the page is called).

- Headings — structural facts (what topics are covered).

- Body text — capped at 3,000 characters, used only to extract statistical patterns.

- Word count — a number.

This raw material is then passed through an LLM that extracts patterns across multiple sources: which terms appear on all top-ranking pages, which subtopics are covered by two or more sources, and which questions are answered across the corpus.

The output is a statistical blueprint – a set of uncopyrightable facts about what comprehensive coverage of a topic looks like.

The original expression – the author’s unique phrasing, their specific metaphors, their structural choices – is neither stored nor reproduced.

We Don’t Store the Raw Material

The collected HTML exists only in memory during the analysis pipeline. It is not written to a database. It is not persisted to a file system. When the function completes, the JavaScript garbage collector reclaims it.

What we do store is the extracted structural intelligence (terms, pairs, concept mapsetc) and the final, transformative output.

If a media company subpoenas our servers, they will find the proprietary analytical framework and the original articles our agents produced.

They will not find a cache of their copyrighted web pages.

The Uncomfortable Truth

Let’s be direct: you cannot build an AI content platform that is 100% immune to legal challenge. The law is still catching up to the technology.

The NYT v. OpenAI case has not been fully adjudicated.

The EU AI Act is being interpreted in real time.

The UK’s copyright framework is under active parliamentary review.

But you can build one that is defensible.

You can make every architectural decision with the assumption that a well-funded legal team will one day examine your code, your logs, and your data retention policies.

You can ensure that when they do, what they find is:

- A bot that honestly identifies itself.

- A system that respects robots.txt before making contact.

- A pipeline that extracts uncopyrightable facts, not copyrightable expression.

- A multi-agent transformation chain that is provably, auditably, structurally original.

- A data retention policy that doesn’t hoard scraped content.

- A human-in-the-loop architecture that puts a real person between AI output and publication.

That is what I built.

Not because a lawyer told me to.

Because it’s the right engineering decision for a system that intends to exist for a very long time.

Because I am a creator too.

What Comes Next

We have three further hardening measures on our roadmap:

- Transformation scoring — a machine-calculated similarity metric comparing scraped source content against the final article, stored with every creative. Automated proof that the output is structurally dissimilar to any single source.

- Selective data retention — automatic purging of intermediate case notes (drafts, research data) after 90 days. The execution trail and final creatives are preserved permanently as transformation proof. Everything else expires.

- Style mimicry filtering — a guard in our ethics pre-flight gate (Meg) that rejects user requests to “write in the style of [specific publication].” If you ask Agency to write like the New York Times, for instance, it will decline. If you ask it to write a professional, objective analysis, it will deliver.

These aren’t marketing features.

They’re not competitive differentiators.

They’re the cost of taking this seriously.

The Agentic Era has begun in earnest.

The address is thisisagency.ai.

Elsa will be expecting you.

Meet Elsa, Agency Company Secretary.

I will be laying out the manifesto of Agency on the Hobo SEO blog before Agency takes over the running of both sites in the spring.

Disclaimer: Agency is a simulation. AI is a simulation! Some people think you are a simulation. Not many people know this aspect of AI. AI cannot do a lot of things people say it can do. For transparency, I need to say it is a simulation. For instance, I have an accountant baked into Agency. I am not an accountant, though, and neither is AI. This data should be reviewed BY YOUR REAL ACCOUNTANT, with the point being you have saved a lot of time collecting, sorting and reviewing the data before the real accountant reviews it. That is the essence of Agency. It is the essence of AI HITL Marketing. AI does the grunt work, the CEO signs the job off, and publishes under their credentials. This article was created using Grok, CLaude and Gemini to give a thoughtful, transparent overview of what Agency, and Agentic systems like it represent over the coming year. For me, at this point, the cyborg apparatus I predicted in my AI SEO ebook last year is now effectively built, and it is just a job of making it slicker and ensuring it is wielded correctly and responsibly by the user.

Update: A similar framework to Agency has been described in a recent paper from Google.

Agency is in a closed beta and is built, solo, by Shaun Anderson at thisisagency.ai. I used the Marketing Cyborg Technique and logical creative inference optimisation techniques to get here. If you have questions about my approach to ethical AI, legal compliance, or anything discussed in this post, contact me directly, in public or private.